ESA will pay an Italian company nearly $50 million to design a mini-Starship

The European Space Agency signed a contract Monday with Avio, the Italian company behind the small Vega rocket, to begin designing a reusable upper stage capable of flying into orbit, returning to Earth, and launching again.

This is a feat more difficult than recovering and reusing a rocket’s booster stage, something European industry has also yet to accomplish. SpaceX’s workhorse Falcon 9 rocket has a recoverable booster, and several companies in the United States, China, and Europe are trying to replicate SpaceX’s success with the partially reusable Falcon 9.

While other rocket companies try to catch up with the Falcon 9, SpaceX has turned its research and development dollars toward Starship, an enormous fully reusable rocket more than 400 feet (120 meters) tall. Even SpaceX, buttressed by the deep pockets of one of the world’s richest people, has had trouble perfecting all the technologies required to make Starship work.

But SpaceX is making progress with Starship, so it’s no surprise some other rocket builders want to copy it. The European Space Agency’s contract with Avio is the latest example.

Preliminary design

ESA and Avio signed the deal, worth 40 million euros ($47 million), on the sidelines of the International Astronautical Congress in Sydney. In a statement, Avio said it will “define the requirements, system design, and enabling technologies needed to develop a demonstrator capable of safely returning to Earth and being reused in future missions.”

At the end of the two-year contract, Avio will deliver a preliminary design for the reusable upper stage and the ground infrastructure needed to make it a reality. The preliminary design review is a milestone in the early phases of an aerospace project, typically occurring many years before completion. For example, Europe’s flagship Ariane 6 rocket passed its preliminary design review in 2016, eight years before its first launch.

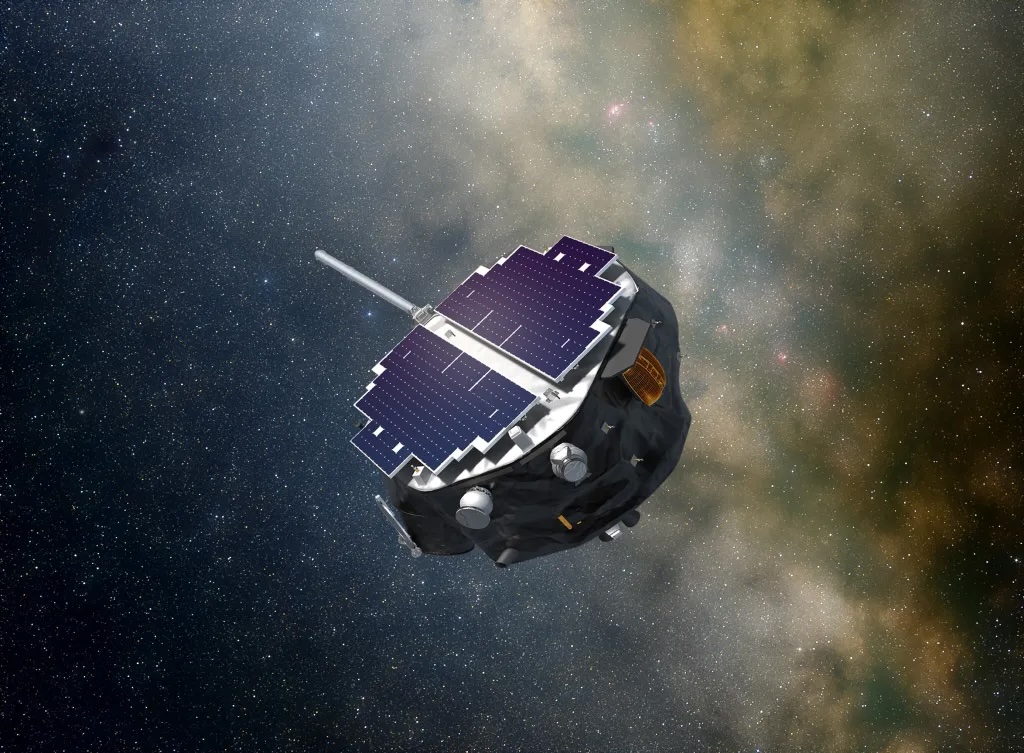

An artist’s concept released by Avio and ESA shows what the reusable upper stage might look like. The vehicle bears an uncanny resemblance to SpaceX’s Starship, with four flaps affixed to the top and the bottom. The reusable upper stage is mounted atop a booster stage akin to Avio’s solid-fueled Vega rocket. Avio and ESA did not release any specifications on the size or performance of the launcher.
