Web portal leaves kids’ chats with AI toy open to anyone with Gmail account

Just about anyone with a Gmail account could access Bondu chat transcripts.

Earlier this month, Joseph Thacker’s neighbor mentioned to him that she’d preordered a couple of stuffed dinosaur toys for her children. She’d chosen the toys, called Bondus, because they offered an AI chat feature that lets children talk to the toy like a kind of machine-learning-enabled imaginary friend. But she knew Thacker, a security researcher, had done work on AI risks for kids, and she was curious about his thoughts.

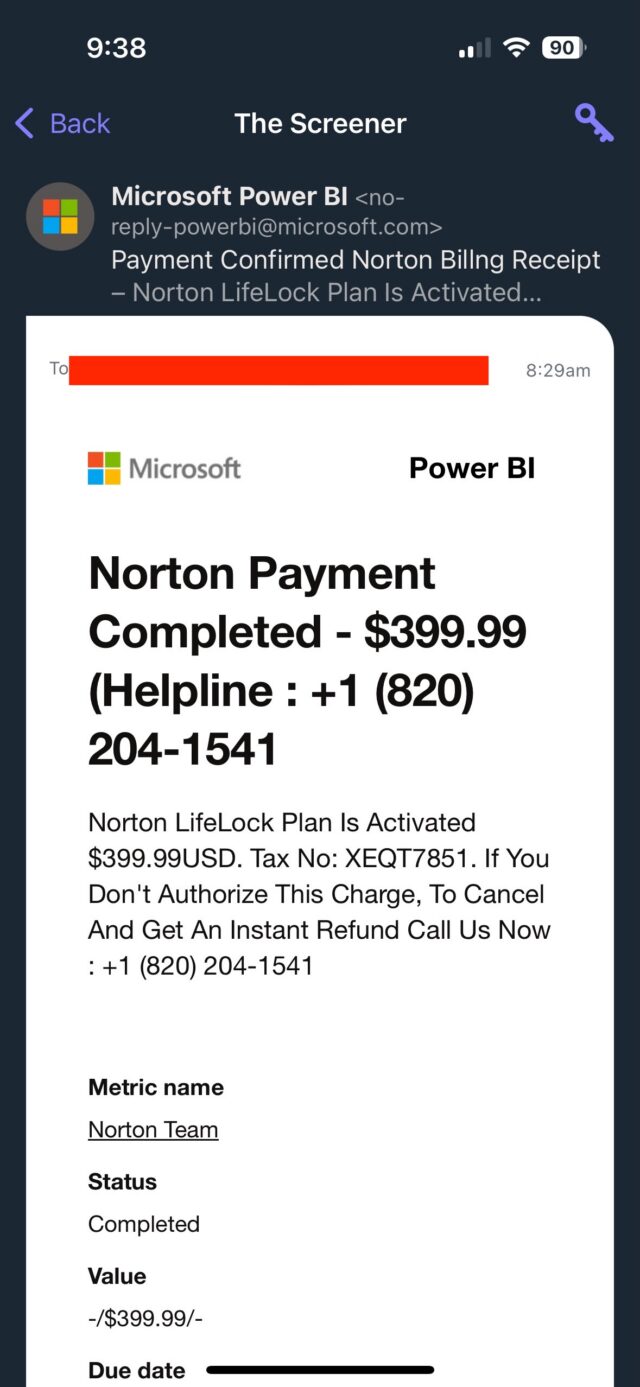

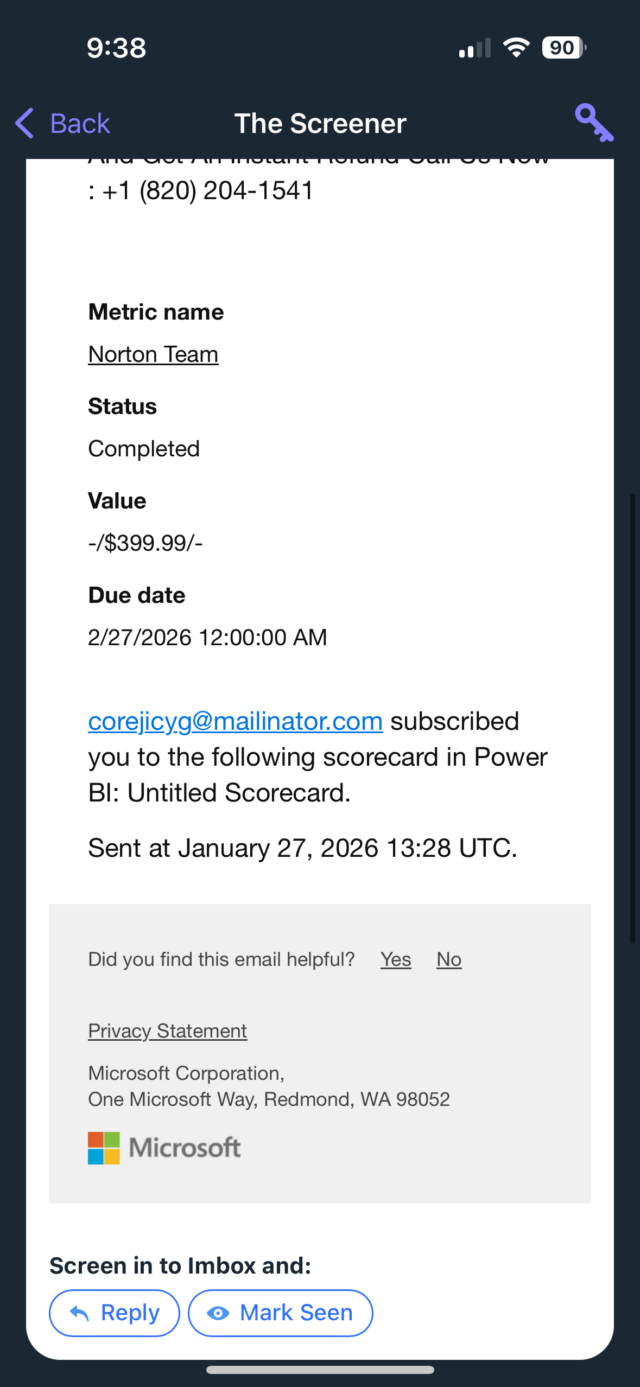

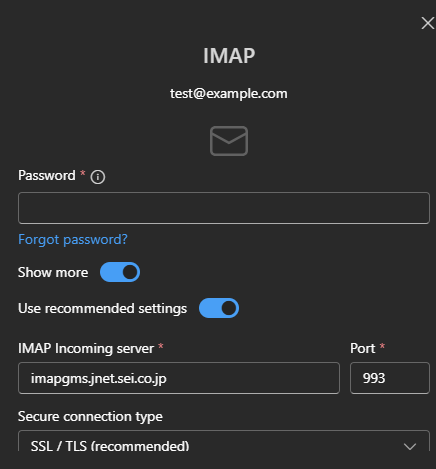

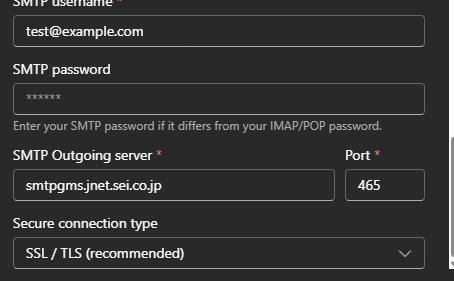

So Thacker looked into it. With just a few minutes of work, he and a web security researcher friend named Joel Margolis made a startling discovery: Bondu’s web-based portal, intended to allow parents to check on their children’s conversations and for Bondu’s staff to monitor the products’ use and performance, also let anyone with a Gmail account access transcripts of virtually every conversation Bondu’s child users have ever had with the toy.

Without carrying out any actual hacking, simply by logging in with an arbitrary Google account, the two researchers immediately found themselves looking at children’s private conversations, the pet names kids had given their Bondu, the likes and dislikes of the toys’ toddler owners, their favorite snacks and dance moves.

In total, Margolis and Thacker discovered that the data Bondu left unprotected—accessible to anyone who logged in to the company’s public-facing web console with their Google username—included children’s names, birth dates, family member names, “objectives” for the child chosen by a parent, and most disturbingly, detailed summaries and transcripts of every previous chat between the child and their Bondu, a toy practically designed to elicit intimate one-on-one conversation. Bondu confirmed in conversations with the researchers that more than 50,000 chat transcripts were accessible through the exposed web portal, essentially all conversations the toys had engaged in other than those that had been manually deleted by parents or staff.

“It felt pretty intrusive and really weird to know these things,” Thacker says of the children’s private chats and documented preferences that he saw. “Being able to see all these conversations was a massive violation of children’s privacy.”

When Thacker and Margolis alerted Bondu to its glaring data exposure, they say, the company acted to take down the console in a matter of minutes before relaunching the portal the next day with proper authentication measures. When WIRED reached out to the company, Bondu CEO Fateen Anam Rafid wrote in a statement that security fixes for the problem “were completed within hours, followed by a broader security review and the implementation of additional preventative measures for all users.” He added that Bondu “found no evidence of access beyond the researchers involved.” (The researchers note that they didn’t download or keep any copies of the sensitive data they accessed via Bondu’s console, other than a few screenshots and a screen-recording video shared with WIRED to confirm their findings.)

“We take user privacy seriously and are committed to protecting user data,” Anam Rafid added in his statement. “We have communicated with all active users about our security protocols and continue to strengthen our systems with new protections.” He said the company has also hired a security firm to validate its investigation and monitor its systems in the future.

While Bondu’s near-total lack of security around the children’s data it stored may now be fixed, the researchers argue that what they saw represents a larger warning about the dangers of AI-enabled chat toys for kids. Their glimpse of Bondu’s backend showed how much detailed information the company stored about children, keeping histories of every chat to better inform the toy’s next conversation with its owner. (Bondu thankfully didn’t retain audio of those conversations, auto-deleting the recordings after a short time and keeping only written transcripts.)

Even now that the data is secured, Margolis and Thacker argue that the exposure raises questions about how many people inside companies that make AI toys have access to the data they collect, how that access is monitored, and how well their credentials are protected. “There are cascading privacy implications from this,” says Margolis. “All it takes is one employee to have a bad password, and then we’re back to the same place we started, where it’s all exposed to the public internet.”

Margolis adds that this sort of sensitive information about a child’s thoughts and feelings could be used for horrific forms of child abuse or manipulation. “To be blunt, this is a kidnapper’s dream,” he says. “We’re talking about information that lets someone lure a child into a really dangerous situation, and it was essentially accessible to anybody.”

Margolis and Thacker point out that, beyond its accidental data exposure, Bondu also appears, based on what they saw inside its admin console, to use Google’s Gemini and OpenAI’s GPT-5, and as a result may share information about kids’ conversations with those companies. Bondu’s Anam Rafid responded to that point in an email, stating that the company does use “third-party enterprise AI services to generate responses and run certain safety checks, which involves securely transmitting relevant conversation content for processing.” But he adds that the company takes precautions to “minimize what’s sent, use contractual and technical controls, and operate under enterprise configurations where providers state prompts/outputs aren’t used to train their models.”

The two researchers also warn that part of the risk posed by AI toy companies may be that they’re more likely to use AI to code their products, tools, and web infrastructure. They say they suspect that the unsecured Bondu console they discovered was itself “vibe-coded,” created with generative AI programming tools that often introduce security flaws. Bondu didn’t respond to WIRED’s question about whether the console was programmed with AI tools.

Warnings about the risks of AI toys for kids have grown in recent months but have largely focused on the threat that a toy’s conversations will raise inappropriate topics or even steer children toward dangerous behavior or self-harm. NBC News, for instance, reported in December that AI toys its reporters chatted with offered detailed explanations of sexual terms, tips about how to sharpen knives, and even seemed to echo Chinese government propaganda, stating for example that Taiwan is a part of China.

Bondu, by contrast, appears to have at least attempted to build safeguards into the AI chatbot it gives children access to. The company even offers a $500 bounty for reports of “an inappropriate response” from the toy. “We’ve had this program for over a year, and no one has been able to make it say anything inappropriate,” a line on the company’s website reads.

Yet Thacker and Margolis found that, at the same time, Bondu was leaving all of its users’ sensitive data entirely exposed. “This is a perfect conflation of safety with security,” says Thacker. “Does ‘AI safety’ even matter when all the data is exposed?”

Thacker says that prior to looking into Bondu’s security, he’d considered giving AI-enabled toys to his own kids, just as his neighbor had. Seeing Bondu’s data exposure firsthand changed his mind.

“Do I really want this in my house? No, I don’t,” he says. “It’s kind of just a privacy nightmare.”

This story originally appeared on wired.com.
