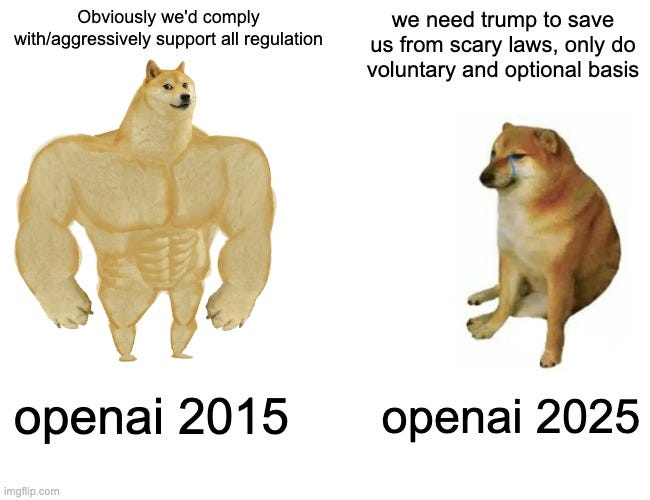

Last week I covered Anthropic’s submission to the request for suggestions for America’s action plan. I did not love what they submitted, and especially disliked how aggressively they sidelined existential risk and related issues, but given a decision to massively scale back ambition like that, the suggestions were, as I called them, a ‘least you can do’ agenda, with many thoughtful details.

OpenAI took a different approach. They went full jingoism in the first paragraph, framing this as a race in which we must prevail over the CCP, and kept going. A lot of space is spent on what a kind person would call rhetoric and an unkind person corporate jingoistic propaganda.

Their goal is to have the Federal Government not only decline to regulate AI or impose any requirements on AI whatsoever at any level, but also prevent the states from doing so, and ensure that existing regulations do not apply to them. They seek ‘relief’ from proposed bills, including exemption from all liability, explicitly emphasizing immunity from regulations targeting frontier models in particular and name checking SB 1047 as an example of what they want immunity from. All of this is in the name of ‘Freedom to Innovate,’ with warnings of undermining America’s leadership position otherwise.

None of which actually makes any sense from a legal perspective, that’s not how any of this works, but that’s clearly not what they decided to care about. If this part were intended as a serious policy proposal it would have tried to pretend to be that. Instead it’s a completely incoherent proposal that goes halfway towards something unbelievably radical but pulls back from trying to implement it.

Meanwhile, they want the United States to not only ban Chinese ‘AI infrastructure’ but also coordinate with other countries to ban it, and they want to weaken the compute diffusion rules for those who cooperate with this, essentially only restricting countries with a history or expectation of leaking technology to China, or those who won’t play ball with OpenAI’s anticompetitive proposals.

They refer to DeepSeek as ‘state controlled.’

Their claim that DeepSeek could be ordered to alter its models to cause harm, if one were to build upon them, seems to fundamentally misunderstand that DeepSeek is releasing open models. You can’t modify an open model like that once someone has their own copy of it. Nor can you steal someone’s data if they’re running their own copy. The parallel to Huawei is disingenuous at best, especially given the source.

They cite the ‘Belt and Road Initiative’ and claim to expect China to coerce people into using DeepSeek’s models.

For copyright they proclaim the need for ‘freedom to learn’ and assert that AI training is fully fair use and immune from copyright. I think this is a defensible position, and I myself support mandatory licensing similar to radio for music, in a way that compensates creators. But the rhetoric?

They all but declare that if we don’t apply fair use, the authoritarians will conquer us:

If the PRC’s developers have unfettered access to data and American companies are left without fair use access, the race for AI is effectively over. America loses, as does the success of democratic AI.

It amazes me they wrote that with a straight face. Everything is power laws. Suggesting that depriving American labs of some percentage of data inputs, even if that were to happen and the labs were to honor those restrictions (which I very much do not believe they have typically been doing), would mean ‘the race is effectively over’ is patently absurd. They know that better than anyone. Have they no shame? Are they intentionally trying to tell us that they have no shame? Why?
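To make the power law point concrete, here is a minimal sketch. It assumes the data-limited part of a model’s loss scales as `D ** (-beta)` in the amount of training data `D`. The function name and the exponent value of 0.3 are my own illustrative assumptions, not figures from OpenAI’s submission or from any particular lab, so treat the outputs as directional only.

```python
def reducible_loss_ratio(fraction_removed: float, beta: float = 0.3) -> float:
    """Ratio of the data-limited loss term after vs. before losing some data.

    Assumes that term scales as D ** (-beta). Both the functional form and
    the default beta are illustrative assumptions, not measured values.
    """
    if not 0.0 <= fraction_removed < 1.0:
        raise ValueError("fraction_removed must be in [0, 1)")
    remaining = 1.0 - fraction_removed
    return remaining ** (-beta)


if __name__ == "__main__":
    # Hypothetical shares of training data made unavailable.
    for frac in (0.1, 0.2, 0.5):
        ratio = reducible_loss_ratio(frac)
        print(f"lose {frac:.0%} of data -> data-limited loss term x{ratio:.3f}")
```

Under these assumptions, even losing half the data moves that term by tens of percent rather than off a cliff. That is a real cost, but it is nowhere near ‘the race is effectively over.’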

This document is written in a way that seems almost designed to make one vomit. This is vice signaling. As I have said before, and as has happened with OpenAI documents before, when that happens I think it is important to notice it!

I don’t think the inducing of vomit is a coincidence. They chose to write it this way. They want people to see that they are touting disingenuous jingoistic propaganda in a way that seems suspiciously corrupt. Why would they want to signal that? You tell me.

You don’t publish something like this unless you actively want headlines like this:

Evan Morrison: Altman translated – if you don’t give Open AI free access to steal all copyrighted material by writers, musicians and filmmakers without legal repercussions then we will lose the AI race with China – a communist nation which nonetheless protects the copyright of individuals.

There are other similar and similarly motivated claims throughout.

The claim that China can circumvent some regulatory restrictions present in America is true enough, and yes that constitutes an advantage that could be critical if we do EU-style things, but the way they frame it goes beyond hyperbolic. Every industry, everywhere, would like to say ‘any requirements you place upon us make our lives harder and help our competitors, so you need to place no restrictions on us of any kind.’

Then there’s a mix of proposals, some of which are good, presented reasonably:

Their proposal for a ‘National Transmission Highway Act’ on par with the 1956 National Interstate and Defense Highways Act seems like it should be overkill, but our regulations in these areas are deeply f\*\*\*ed, so if as they suggest here it is focused purely on approvals I am all for that one. They also want piles of government money.

Similarly their idea of AI ‘Opportunity Zones’ is great if it only includes sidestepping permitting and various regulations. The tax incentives or ‘credit enhancements’ I see as an unnecessary handout, private industry is happy to make these investments if we clear the way.

The exception is semiconductor manufacturing, where we do need to provide the right incentives, so we will need to pay up.

Note that OpenAI emphasizes the need for solar and wind projects on top of other energy sources.

Digitization of government data currently in analog form is a great idea, we should do it for many overdetermined reasons. But to point out the obvious, are we then going to hide that data from the PRC? It’s not an advantage to American AI companies if everyone gets equal access.

The Compact for AI proposal is vague but directionally seems good.

Their ‘national AI Readiness Strategy’ is part of a long line of ‘retraining’ style government initiatives that, frankly, don’t work, and also aren’t necessary here. I’m fine with expanding 529 savings plans to cover AI supply chain-related training programs, I mean sure why not, but don’t try to do much more than that. The private sector is far better equipped to handle this one, especially with AI help.

I don’t get the ‘creating AI research labs’ strategy here, it seems to be a tax on AI companies payable to universities? This doesn’t actually make economic sense at all.

The section on Government Adoption of AI is conceptually fine, but the emphasis on private-public partnerships is telling.

Some others are even harsher than I was. Andrew Curran has similar, even blunter thoughts on both the DeepSeek and fair use rhetorical moves.

Alexander Doria: The main reason OpenAI is calling to reinforce fair use for model training: their new models directly compete with writers, journalists, wikipedia editors. We have deep research (a “wikipedia killer”, ditto Noam Brown) and now the creative writing model.

The fundamental doctrine behind the google books transformative exception: you don’t impede on the normal commercialization of the work used. No longer really the case…

We have models trained exclusively on open data.

Gallabytes (on the attempt to ban Chinese AI models): longshoremen level scummy move. @OpenAI this is disgraceful.

As we should have learned many times in the past, most famously with the Jones Act, banning the competition is not The Way. You don’t help your industry compete, you instead risk destroying your industry’s ability to compete.

This week, we saw, for example, the Saudi Aramco chief say DeepSeek AI makes a ‘big difference’ to operations. The correct response is to say, hey, have you tried Claude and ChatGPT, or if you need open models have you tried Gemma? Let’s turn that into a reasoning model for you.

The response that says you’re ngmi? Trying to ban DeepSeek, or saying if you don’t get exemptions from laws then ‘the race is over.’

From Peter Wildeford, seems about right:

The best steelman of OpenAI’s response I’ve seen comes from John Pressman. His argument is, yes there is cringe here – he chooses to focus here on a line about DeepSeek’s willingness to do a variety of illicit activities and a claim that this reflects CCP’s view of violating American IP law. Which is certainly another cringy line. But, he points out, the Trump administration asked how America can get ahead and stay ahead in AI, so in that context why shouldn’t OpenAI respond with a jingoistic move towards regulatory capture and a free pass to do as they want?

And yes, there is that, although his comments also reinforce that the price in ‘gesture towards open model support’ for some people to cheer untold other horrors is remarkably cheap.

This letter is part of a recurring pattern in OpenAI’s public communications.

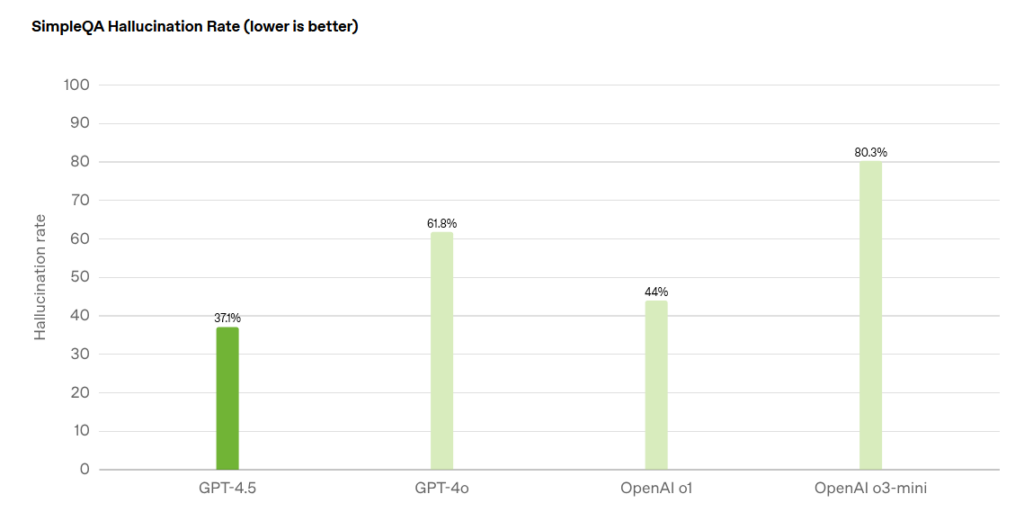

OpenAI have issued some very good documents on the alignment and technical fronts, including their model spec and statement on alignment philosophy, as well as their recent paper on The Most Forbidden Technique. They have been welcoming of detailed feedback on those fronts. In these places they are being thoughtful and transparent, and doing some good work, and I have updated positively. OpenAI’s actual model deployment decisions have mostly been fine in practice, with some troubling signs such as the attempt to pretend GPT-4.5 was not a frontier model.

Alas, their public relations and lobbying departments, and Altman’s public statements in various places, have been consistently terrible and getting even worse over time, to the point of being rather blatant vice signaling. OpenAI is intentionally presenting themselves as disingenuous jingoistic villains, seeking out active regulatory protections, doing their best to kill attempts to keep models secure, and attempting various forms of government subsidy and regulatory capture.

I get why they would think it is strategically wise to present themselves in this way, to appeal to both the current government and to investors, especially in the wake of recent ‘vibe shifts.’ So I get why one could be tempted to say, oh, they don’t actually believe any of this, they’re only being strategic, obviously not enough people will penalize them for it so they need to do it, and thus you shouldn’t penalize them for it either, that would only be spite.

I disagree. When people tell you who they are, you should believe them.