Forget AGI—Sam Altman celebrates ChatGPT finally following em dash formatting rules

Next stop: superintelligence

Ongoing struggles with AI model instruction-following show that true human-level AI is still a ways off.

Em dashes have become what many believe to be a telltale sign of AI-generated text over the past few years. The punctuation mark appears frequently in outputs from ChatGPT and other AI chatbots, sometimes to the point where readers believe they can identify AI writing by its overuse alone—although people can overuse it, too.

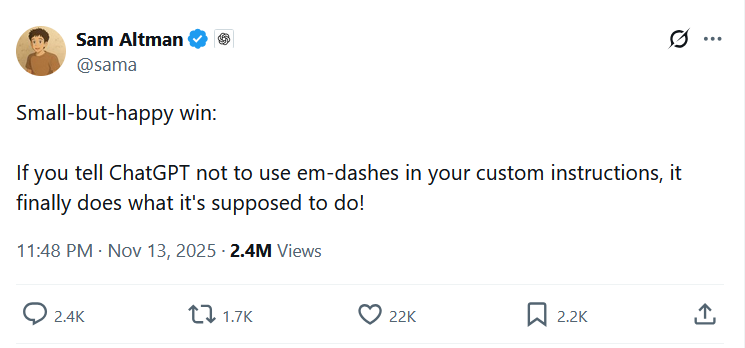

On Thursday evening, OpenAI CEO Sam Altman posted on X that ChatGPT has started following custom instructions to avoid using em dashes. “Small-but-happy win: If you tell ChatGPT not to use em-dashes in your custom instructions, it finally does what it’s supposed to do!” he wrote.

The post, which came two days after the release of OpenAI’s new GPT-5.1 AI model, received mixed reactions from users who have struggled for years with getting the chatbot to follow specific formatting preferences. And this “small win” raises a very big question: If the world’s most valuable AI company has struggled with controlling something as simple as punctuation use after years of trying, perhaps what people call artificial general intelligence (AGI) is farther off than some in the industry claim.

A screenshot of Sam Altman’s post about em dashes on X. Credit: X

“The fact that it’s been 3 years since ChatGPT first launched, and you’ve only just now managed to make it obey this simple requirement, says a lot about how little control you have over it, and your understanding of its inner workings,” wrote one X user in a reply. “Not a good sign for the future.”

Altman likes to talk publicly about AGI (a hypothetical technology equivalent to humans in general learning ability), superintelligence (a nebulous concept for AI that is far beyond human intelligence), and “magic intelligence in the sky” (his term for AI cloud computing?) while raising funds for OpenAI, but it’s clear that we still don’t have reliable artificial intelligence here today on Earth.

But wait, what is an em dash anyway, and why does it matter so much?

AI models love em dashes because we do

Unlike a hyphen, a short punctuation mark with a dedicated key on your keyboard (-) that connects words or parts of words, an em dash is a long dash denoted by a special character (—) that writers use to set off parenthetical information, indicate a sudden change in thought, or introduce a summary or explanation.

Even before the age of AI language models, some writers frequently bemoaned the overuse of the em dash in modern writing. In a 2011 Slate article, writer Noreen Malone argued that writers used the em dash “in lieu of properly crafting sentences” and that overreliance on it “discourages truly efficient writing.” Various Reddit threads posted prior to ChatGPT’s launch featured writers either wrestling over the etiquette of proper em dash use or admitting to their frequent use as a guilty pleasure.

In 2021, one writer in the r/FanFiction subreddit wrote, “For the longest time, I’ve been addicted to Em Dashes. They find their way into every paragraph I write. I love the crisp straight line that gives me the excuse to shove details or thoughts into an otherwise orderly paragraph. Even after coming back to write after like two years of writer’s block, I immediately cram as many em dashes as I can.”

Because of the tendency for AI chatbots to overuse them, detection tools and human readers have learned to spot em dash use as a pattern, creating a problem for the small subset of writers who naturally favor the punctuation mark in their work. As a result, some journalists are complaining that AI is “killing” the em dash.

No one knows precisely why LLMs tend to overuse em dashes. We’ve seen a wide range of speculation online that attempts to explain the phenomenon, from the observation that em dashes were more popular in 19th-century books used as training data (according to a 2018 study, dash use in the English language peaked around 1860 before declining through the mid-20th century) to the idea that AI models borrowed the habit from automatic em-dash character conversion on the blogging site Medium.

One thing we know for sure is that LLMs tend to reproduce patterns seen frequently in their training data (fed in during the initial training process) and patterns reinforced by a subsequent reinforcement learning process that often relies on human preferences. As a result, AI language models feed you a sort of “smoothed out” average style of whatever you ask them to provide, moderated by whatever they are conditioned to produce through user feedback.

So the most plausible explanation is still that requests for professional-style writing from an AI model trained on vast numbers of examples from the Internet will lean heavily toward the prevailing style in the training data, where em dashes appear frequently in formal writing, news articles, and editorial content. It’s also possible that during reinforcement learning from human feedback (RLHF), responses with em dashes, for whatever reason, received higher ratings. Perhaps it’s because those outputs appeared more sophisticated or engaging to evaluators, but that’s just speculation.

From em dashes to AGI?

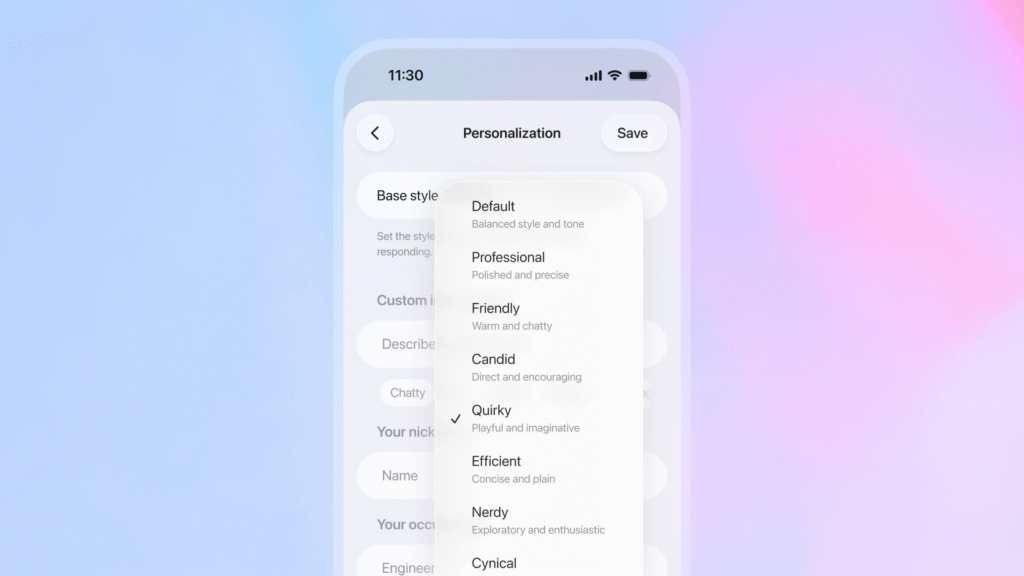

To understand what Altman’s “win” really means, and what it says about the road to AGI, we need to understand how ChatGPT’s custom instructions actually work. They allow users to set persistent preferences that apply across all conversations by appending written instructions to the prompt that is fed into the model just before the chat begins. Users can specify tone, format, and style requirements without needing to repeat those requests manually in every new chat.
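As a rough illustration of that mechanism, the sketch below assembles a single prompt from a system prompt, a user's custom instructions, and the chat so far. OpenAI has not published how ChatGPT actually formats this text, so the function name `build_prompt`, the section label, and the ordering are all assumptions made for illustration.

```python
# Hypothetical sketch: how custom instructions could be folded into a prompt.
# The layout is an assumption, not OpenAI's actual format.

def build_prompt(system_prompt, custom_instructions, chat_history):
    """Join the pieces into the one block of text the model continues."""
    parts = [system_prompt]
    if custom_instructions:
        # Custom instructions are ordinary text placed ahead of the chat.
        parts.append("User's custom instructions:\n" + custom_instructions)
    for role, message in chat_history:
        parts.append(f"{role}: {message}")
    return "\n\n".join(parts)


prompt = build_prompt(
    system_prompt="You are a helpful assistant.",
    custom_instructions="Do not use em dashes.",
    chat_history=[("user", "Summarize today's news in two sentences.")],
)
print(prompt)
```

Nothing in the resulting string is marked as a rule. The preference reaches the model as more text to continue from.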

However, the feature has not always worked reliably because LLMs do not work reliably (even OpenAI and Anthropic freely admit this). An LLM takes an input and produces an output, spitting out a statistically plausible continuation of a prompt (a system prompt, the custom instructions, and your chat history), and it doesn’t really “understand” what you are asking. With AI language model outputs, there is always some luck involved in getting them to do what you want.

In our informal testing of GPT-5.1 with custom instructions, ChatGPT did appear to follow our request not to produce em dashes. But despite Altman’s claim, the response from X users appears to show that experiences with the feature continue to vary, at least when the request is not placed in custom instructions.

So if LLMs are statistical text-generation boxes, what does “instruction following” even mean? That’s key to unpacking the hypothetical path from LLMs to AGI. The concept of following instructions for an LLM is fundamentally different from how we typically think about following instructions as humans with general intelligence, or even a traditional computer program.

In traditional computing, instruction following is deterministic. You tell a program “don’t include character X,” and it won’t include that character. The program executes rules exactly as written. With LLMs, “instruction following” is really about shifting statistical probabilities. When you tell ChatGPT “don’t use em dashes,” you’re not creating a hard rule. You’re adding text to the prompt that makes tokens associated with em dashes less likely to be selected during the generation process. But “less likely” isn’t “impossible.”
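The contrast can be made concrete with a toy example. The first function is a conventional program that enforces the rule every time. The second part models an instruction as a penalty on the em dash's score before a softmax. The scores and the penalty value are invented, and a real model does not apply a single fixed penalty like this; the point is only that the resulting probability shrinks without reaching zero.

```python
import math

EM_DASH = "\u2014"


def strip_em_dashes(text):
    """Deterministic rule: the result never contains an em dash."""
    return text.replace(EM_DASH, "-")


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    top = max(scores.values())
    exps = {tok: math.exp(s - top) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


# Invented next-token scores for a spot where punctuation is likely.
scores = {EM_DASH: 2.0, ",": 1.5, ".": 1.0, ";": 0.5}
before = softmax(scores)

# Stand-in for the instruction's effect: lower the em dash score.
penalized = dict(scores)
penalized[EM_DASH] -= 4.0
after = softmax(penalized)

print(strip_em_dashes("wait" + EM_DASH + "what"))  # em dash always removed
print(f"P(em dash) before: {before[EM_DASH]:.3f}")
print(f"P(em dash) after:  {after[EM_DASH]:.3f}")  # smaller, but not zero
```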

Every token the model generates is selected from a probability distribution. Your custom instruction influences that distribution, but it’s competing with the model’s training data (where em dashes appeared frequently in certain contexts) and everything else in the prompt. Unlike code with conditional logic, there’s no separate system verifying outputs against your requirements. The instruction is just more text that influences the statistical prediction process.
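A back-of-the-envelope calculation shows why a small per-token probability still matters over a long reply. Assume, purely for illustration, that each of n tokens independently has probability p of being an em dash; the chance of at least one appearing is then 1 - (1 - p)^n. The values of p and n below are made up, and real token probabilities are neither constant nor independent.

```python
import random


def chance_of_any_em_dash(p, n):
    """Probability of at least one em dash in n independent draws."""
    return 1.0 - (1.0 - p) ** n


def simulate(p, n, trials, seed=0):
    """Estimate the same quantity by sampling (seeded for repeatability)."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        if any(rng.random() < p for _ in range(n)):
            hits += 1
    return hits / trials


p, n = 0.001, 500  # illustrative values, not measurements
analytic = chance_of_any_em_dash(p, n)
estimate = simulate(p, n, trials=2000)
print(f"analytic: {analytic:.3f}, simulated: {estimate:.3f}")
```

With these made-up numbers, a one-in-a-thousand chance per token works out to roughly a 39 percent chance of at least one em dash somewhere in the reply.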

When Altman celebrates finally getting GPT to avoid em dashes, he’s really celebrating that OpenAI has tuned the latest version of GPT-5.1 (probably through reinforcement learning or fine-tuning) to weight custom instructions more heavily in its probability calculations.

There’s an irony about control here: Given the probabilistic nature of the problem, there’s no guarantee it will stay fixed. OpenAI continuously updates its models behind the scenes, even within the same version number, adjusting outputs based on user feedback and new training runs. Each update arrives with different output characteristics that can undo previous behavioral tuning, a side effect related to what researchers call the “alignment tax.”

Precisely tuning a neural network’s behavior is not yet an exact science. Since all concepts encoded in the network are interconnected by values called weights, adjusting one behavior can alter others in unintended ways. Fix em dash overuse today, and tomorrow’s update (aimed at improving, say, coding capabilities) might inadvertently bring them back, not because OpenAI wants them there, but because that’s the nature of trying to steer a statistical system with millions of competing influences.

This gets to an implied question we mentioned earlier. If controlling punctuation use is still a struggle that might pop back up at any time, how far are we from AGI? We can’t know for sure, but it seems increasingly likely that it won’t emerge from a large language model alone. That’s because AGI, a technology that would replicate human general learning ability, would likely require true understanding and self-reflective intentional action, not statistical pattern matching that sometimes aligns with instructions if you happen to get lucky.

And speaking of getting lucky, some users still aren’t having luck with controlling em dash use outside of the “custom instructions” feature. Upon being told in chat not to use em dashes, ChatGPT updated a saved memory and replied to one X user, “Got it—I’ll stick strictly to short hyphens from now on.”
