OpenAI’s ChatGPT Agent casually clicks through “I am not a robot” verification test

The CAPTCHA arms race

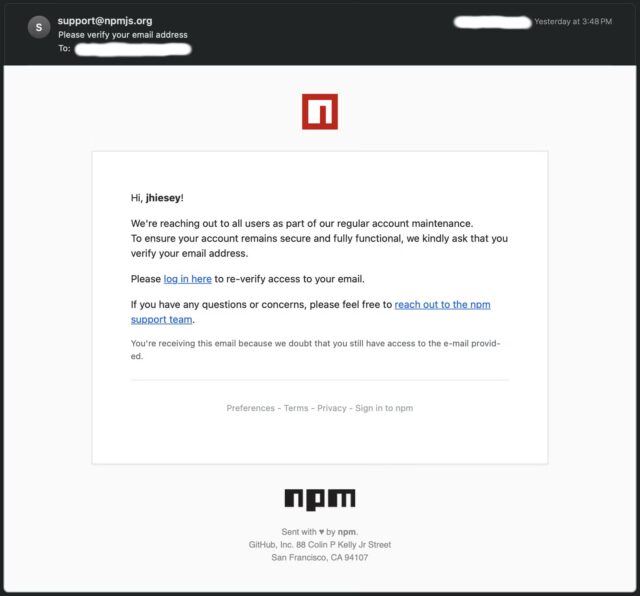

While the agent didn’t face an actual CAPTCHA puzzle with images in this case, successfully passing Cloudflare’s behavioral screening that determines whether to present such challenges demonstrates sophisticated browser automation.

To understand the significance of this capability, it’s important to know that CAPTCHA systems have served as a security measure on the web for decades. Computer researchers invented the technique in the 1990s to keep bots from entering information into websites, originally using images of letters and numbers written in wiggly fonts, often obscured with lines or noise to foil computer vision algorithms. The assumption is that the task will be easy for humans but difficult for machines.

Cloudflare’s screening system, called Turnstile, often precedes actual CAPTCHA challenges and represents one of the most widely deployed bot-detection methods today. The checkbox analyzes multiple signals, including mouse movements, click timing, browser fingerprints, IP reputation, and JavaScript execution patterns, to determine whether the user exhibits human-like behavior. If these checks pass, users proceed without seeing a CAPTCHA puzzle. If the system detects suspicious patterns, it escalates to visual challenges.
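To make that pass-or-escalate logic concrete, here is a toy sketch in Python. It is not Cloudflare's algorithm, which is not public. The `Signals` fields, the weights, and the 0.5 threshold are all hypothetical, chosen only to illustrate how several weak signals might be combined into a single decision.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Hypothetical per-visitor signals; names and units are illustrative only."""
    mouse_move_events: int        # pointer-move events seen before the click
    click_delay_ms: float         # time from widget render to click
    fingerprint_consistent: bool  # browser fingerprint looks internally coherent
    ip_reputation: float          # 0.0 (bad) to 1.0 (good)
    js_checks_passed: bool        # page JavaScript ran as a real browser would run it


def suspicion_score(s: Signals) -> float:
    """Add up made-up penalties; a higher score means more bot-like."""
    score = 0.0
    if s.mouse_move_events < 5:      # almost no pointer movement before clicking
        score += 0.3
    if s.click_delay_ms < 150:       # clicked faster than a person plausibly would
        score += 0.2
    if not s.fingerprint_consistent:
        score += 0.2
    if not s.js_checks_passed:
        score += 0.2
    score += 0.1 * (1.0 - s.ip_reputation)  # poor IP reputation adds up to 0.1
    return score


def decide(s: Signals, threshold: float = 0.5) -> str:
    """Return 'pass' to let the visitor through, or 'escalate' to show a challenge."""
    return "escalate" if suspicion_score(s) >= threshold else "pass"


human_like = Signals(40, 900.0, True, 0.9, True)
bot_like = Signals(0, 20.0, False, 0.1, False)
print(decide(human_like), decide(bot_like))
```

In a scheme like this, an automated browser that moves the pointer, waits a plausible interval, and runs page scripts normally would score low on every check, which is consistent with the agent being waved through without a visual puzzle.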

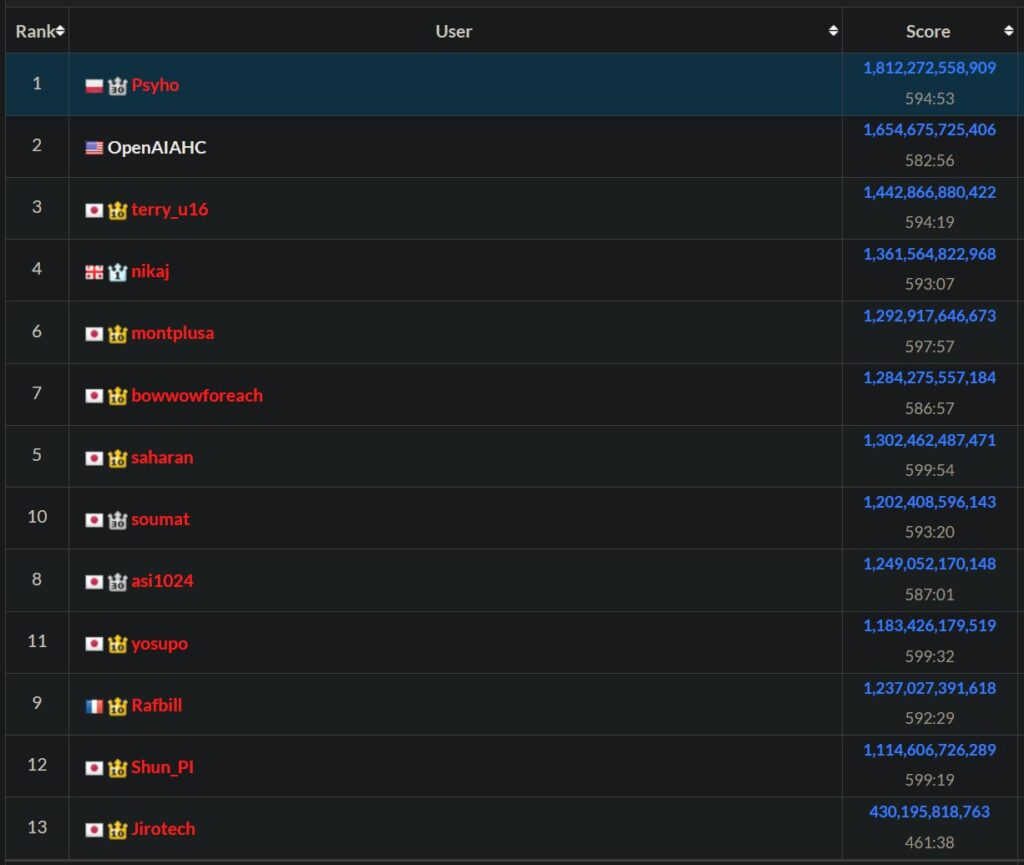

The ability of an AI model to defeat a CAPTCHA isn’t entirely new (although having one narrate the process feels fairly novel). AI tools have been able to defeat certain CAPTCHAs for a while, which has led to an arms race between those who create them and those who defeat them. OpenAI’s Operator, an experimental web-browsing AI agent launched in January, faced difficulty clicking through some CAPTCHAs (and was also trained to stop and ask a human to complete them), but the latest ChatGPT Agent tool has seen a much wider release.

It’s tempting to say that the ability of AI agents to pass these tests puts the future effectiveness of CAPTCHAs into question, but for as long as there have been CAPTCHAs, there have been bots that could later defeat them. As a result, recent CAPTCHAs have become more a way to slow down bot attacks or make them more expensive than a way to stop them entirely. Some malefactors even hire out farms of humans to defeat them in bulk.
