OpenAI releases new simulated reasoning models with full tool access

New o3 model appears “near-genius level,” according to one doctor, but it still makes mistakes.

On Wednesday, OpenAI announced the release of two new models—o3 and o4-mini—that combine simulated reasoning capabilities with access to functions like web browsing and coding. These models mark the first time OpenAI’s reasoning-focused models can use every ChatGPT tool simultaneously, including visual analysis and image generation.

OpenAI announced o3 in December, and until now, only less capable derivative models named “o3-mini” and “o3-mini-high” have been available. The new models now replace their predecessors, o1 and o3-mini.

OpenAI is rolling out access today for ChatGPT Plus, Pro, and Team users, with Enterprise and Edu customers gaining access next week. Free users can try o4-mini by selecting the “Think” option before submitting queries. OpenAI CEO Sam Altman tweeted that “we expect to release o3-pro to the pro tier in a few weeks.”

For developers, both models are available starting today through the Chat Completions API and Responses API, though some organizations will need verification for access.
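For readers curious what “available through the Chat Completions API and Responses API” means in practice, here is a minimal sketch that only builds the JSON request bodies for each endpoint without sending anything. It assumes the field names OpenAI documents for reasoning models (`messages` and `reasoning_effort` for Chat Completions; `input` and `reasoning.effort` for Responses); treat those names and the effort values as assumptions to check against OpenAI’s API reference. The prompt text is hypothetical.

```python
import json

# Assumed request shapes for OpenAI's two APIs; verify field names against
# the official API reference before relying on them.

def chat_completions_body(model: str, prompt: str, effort: str = "medium") -> dict:
    """Request body for POST /v1/chat/completions (assumed field names)."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": effort,  # assumed values: "low", "medium", "high"
    }

def responses_body(model: str, prompt: str, effort: str = "medium") -> dict:
    """Request body for POST /v1/responses (assumed field names)."""
    return {
        "model": model,
        "input": prompt,
        "reasoning": {"effort": effort},
    }

if __name__ == "__main__":
    prompt = "Summarize the trade-offs between o3 and o4-mini."  # hypothetical prompt
    print(json.dumps(chat_completions_body("o4-mini", prompt), indent=2))
    print(json.dumps(responses_body("o3", prompt, effort="high"), indent=2))
```

The two bodies carry the same information; the Responses API simply nests the reasoning setting in an object instead of a top-level parameter.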

“These are the smartest models we’ve released to date, representing a step change in ChatGPT’s capabilities for everyone from curious users to advanced researchers,” OpenAI claimed on its website. OpenAI says the models offer better cost efficiency than their predecessors, and each targets a different use case: o3 is aimed at complex analysis, while o4-mini, a smaller version of the company’s next-generation simulated reasoning (SR) model “o4” (not yet released), is optimized for speed and cost efficiency.

OpenAI says o3 and o4-mini are multimodal, featuring the ability to “think with images.” Credit: OpenAI

What sets these new models apart from OpenAI’s other models (like GPT-4o and GPT-4.5) is their simulated reasoning capability, which works through problems using a simulated step-by-step “thinking” process. The new models also decide on their own when and how to use tools to solve multistep problems. For example, when asked about future energy usage in California, the models can autonomously search for utility data, write Python code to build forecasts, generate graphs to visualize the results, and explain the key factors behind their predictions, all within a single query.
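The behavior described above follows a general agent-loop pattern: at each step, the model either requests a tool call or returns a final answer, and the surrounding harness runs the requested tool and feeds the result back. The sketch below illustrates only that pattern, using a scripted stand-in for the model. `fake_model`, the tool names, and their placeholder outputs are all hypothetical; this is not how OpenAI implements tool use internally.

```python
from typing import Callable

# Hypothetical tools standing in for web search and a Python sandbox.
def search_utility_data(query: str) -> str:
    return f"<search results for: {query}>"  # placeholder, not real data

def run_forecast(data: str) -> str:
    return f"<forecast built from {data}>"  # placeholder, not a real forecast

TOOLS: dict[str, Callable[[str], str]] = {
    "search": search_utility_data,
    "python": run_forecast,
}

def fake_model(history: list[dict]) -> dict:
    """Scripted stand-in for a reasoning model: chooses the next step from the history."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if not tool_results:
        return {"tool": "search", "arg": history[0]["content"]}
    if len(tool_results) == 1:
        return {"tool": "python", "arg": tool_results[0]["content"]}
    return {"answer": f"Explanation of {tool_results[-1]['content']}"}

def agent_loop(question: str, max_steps: int = 5) -> tuple[str, list[str]]:
    """Run model steps until it answers or the step budget runs out."""
    history = [{"role": "user", "content": question}]
    calls: list[str] = []
    for _ in range(max_steps):
        step = fake_model(history)
        if "answer" in step:  # the model decided it has enough information
            return step["answer"], calls
        calls.append(step["tool"])
        result = TOOLS[step["tool"]](step["arg"])  # harness executes the tool
        history.append({"role": "tool", "content": result})
    return "step limit reached", calls

answer, calls = agent_loop("Forecast California energy usage")
print(calls)
print(answer)
```

The step limit matters in real agents too: without one, a model that keeps requesting tools could loop indefinitely.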

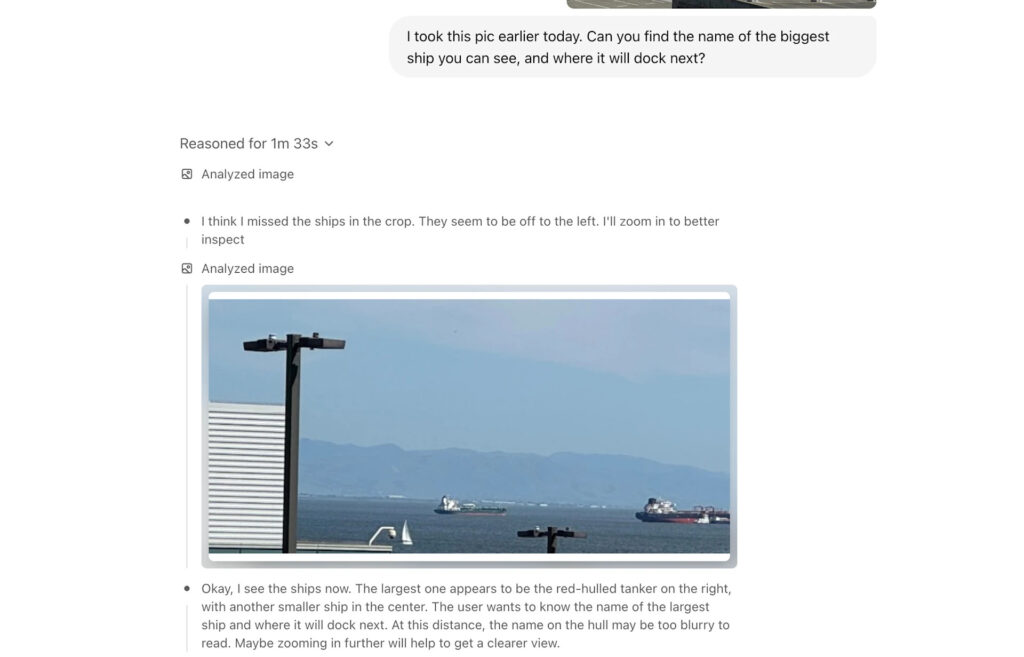

OpenAI touts the new models’ multimodal ability to incorporate images directly into their simulated reasoning process—not just analyzing visual inputs but actively “thinking with” them. This capability allows the models to interpret whiteboards, textbook diagrams, and hand-drawn sketches, even when images are blurry or of low quality.

That said, the new releases continue OpenAI’s tradition of confusing product names that don’t tell users much about each model’s relative capabilities. For example, o3 is more powerful than o4-mini despite its lower number. Then there’s potential confusion with the firm’s non-reasoning AI models. As Ars Technica contributor Timothy B. Lee noted today on X, “It’s an amazing branding decision to have a model called GPT-4o and another one called o4.”

Vibes and benchmarks

All that aside, we know what you’re thinking: What about the vibes? While we have not used o3 or o4-mini yet, frequent AI commentator and Wharton professor Ethan Mollick compared o3 favorably to Google’s Gemini 2.5 Pro on Bluesky. “After using them both, I think that Gemini 2.5 & o3 are in a similar sort of range (with the important caveat that more testing is needed for agentic capabilities),” he wrote. “Each has its own quirks & you will likely prefer one to another, but there is a gap between them & other models.”

During the livestream announcement for o3 and o4-mini today, OpenAI President Greg Brockman boldly claimed: “These are the first models where top scientists tell us they produce legitimately good and useful novel ideas.”

Early user feedback seems to support this assertion, although until more third-party testing takes place, it’s wise to be skeptical of the claims. On X, immunologist Dr. Derya Unutmaz said o3 appeared “at or near genius level” and wrote, “It’s generating complex incredibly insightful and based scientific hypotheses on demand! When I throw challenging clinical or medical questions at o3, its responses sound like they’re coming directly from a top subspecialist physicians.”

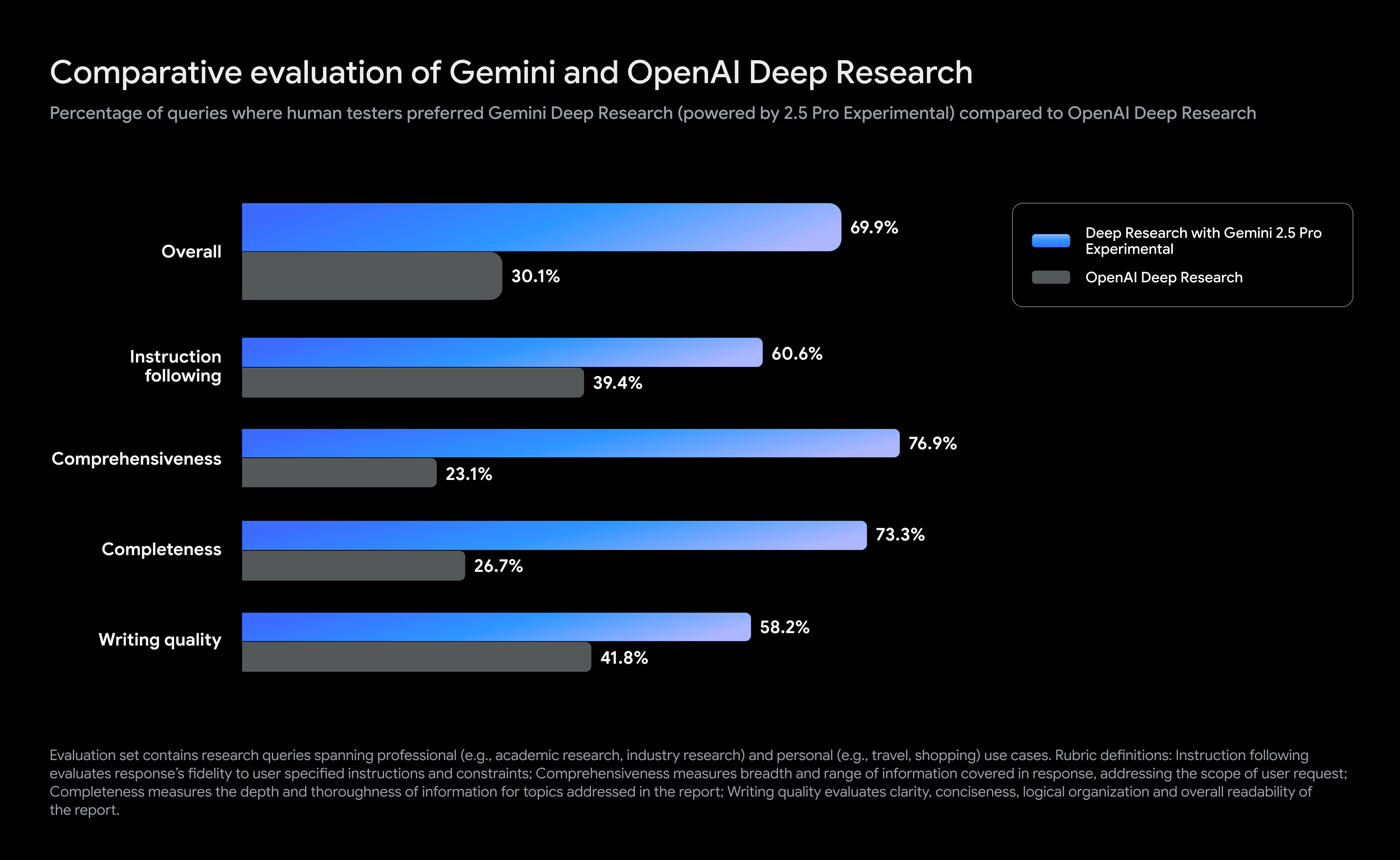

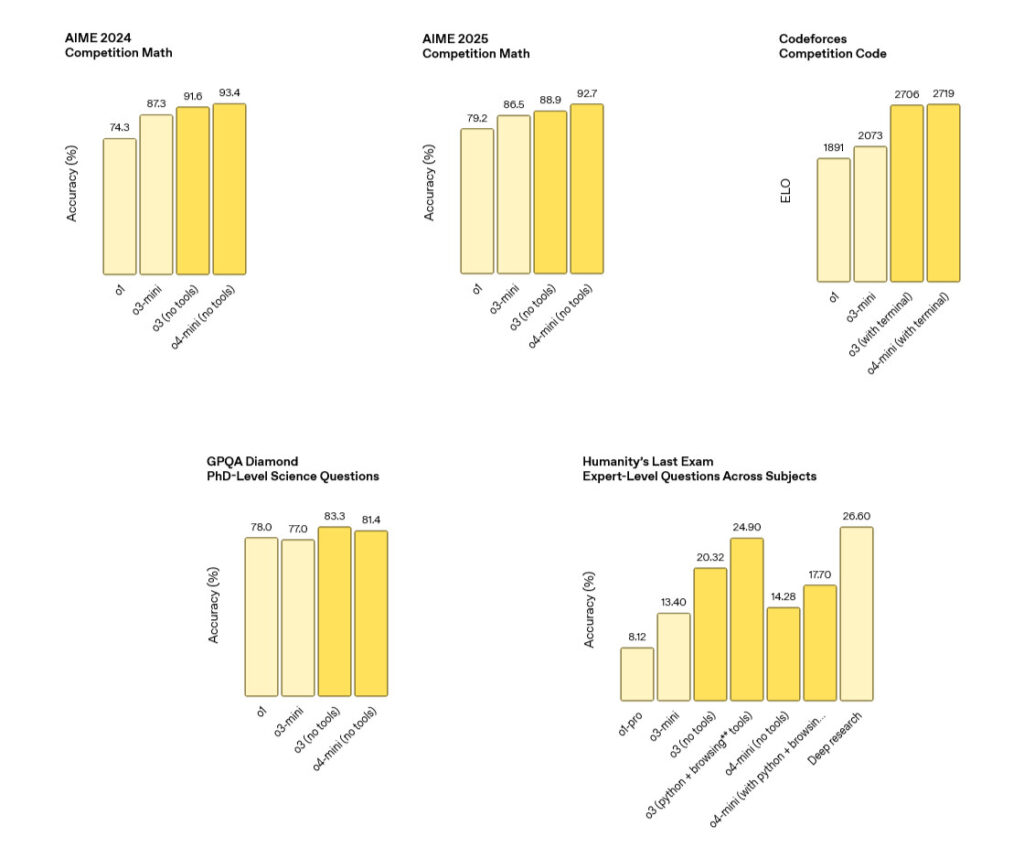

OpenAI benchmark results for o3 and o4-mini SR models. Credit: OpenAI

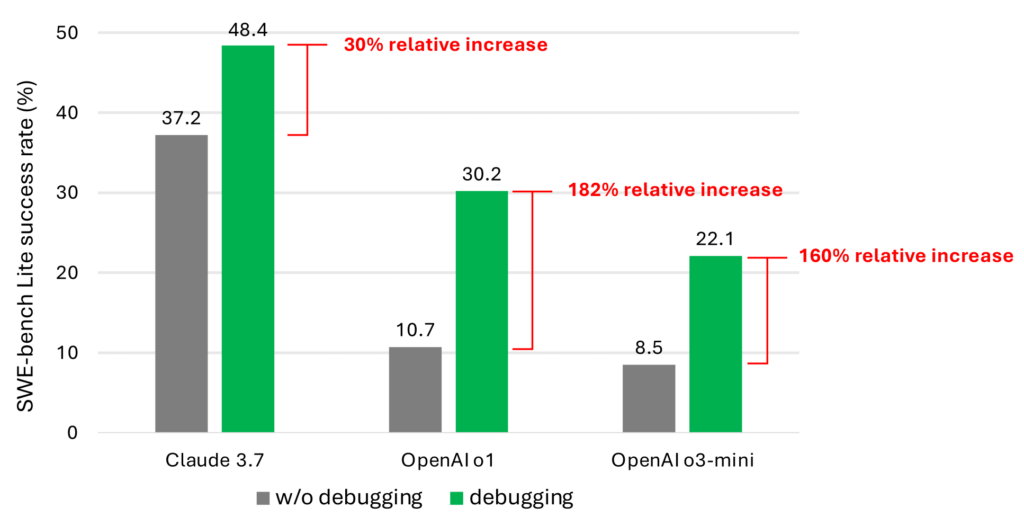

So the vibes seem on target, but what about numerical benchmarks? Here’s an interesting one: OpenAI reports that o3 makes “20 percent fewer major errors” than o1 on difficult tasks, with particular strengths in programming, business consulting, and “creative ideation.”

The company also reported state-of-the-art performance on several metrics. On the American Invitational Mathematics Examination (AIME) 2025, o4-mini achieved 92.7 percent accuracy. For programming tasks, o3 reached 69.1 percent accuracy on SWE-bench Verified, a popular programming benchmark. The models also reportedly showed strong results on visual reasoning benchmarks, with o3 scoring 82.9 percent on MMMU (massive multi-disciplinary multimodal understanding), a college-level visual problem-solving test.


However, these benchmarks provided by OpenAI lack independent verification. One early evaluation of a pre-release o3 model by the independent AI research lab Transluce found that the model exhibited recurring types of confabulation, such as claiming to have run code locally or providing hardware specifications for the machine it claimed to use. Transluce hypothesized that this could stem from the model lacking access to its own reasoning from previous conversational turns. “It seems that despite being incredibly powerful at solving math and coding tasks, o3 is not by default truthful about its capabilities,” Transluce wrote in a tweet.

Some of OpenAI’s evaluations also include methodology footnotes that bear consideration. On “Humanity’s Last Exam,” a benchmark that measures expert-level knowledge across subjects, o3 scored 20.32 percent with no tools but 24.90 percent with browsing and tools, and OpenAI notes that browsing-enabled models could potentially find answers online. The company says it implemented domain blocks and monitoring to prevent what it calls “cheating” during evaluations.
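To put the tool-assisted “Humanity’s Last Exam” result in perspective, the short calculation below works out how much browsing and tools added to o3’s score, treating both reported figures as percentages. It uses only the two numbers OpenAI published; the variable names are illustrative.

```python
# o3 scores on Humanity's Last Exam as reported by OpenAI (percent).
no_tools = 20.32
with_tools = 24.90

gain_points = with_tools - no_tools      # absolute gain in percentage points
relative_gain = gain_points / no_tools   # gain relative to the no-tools score

print(f"{gain_points:.2f} percentage points")
print(f"{relative_gain:.1%} relative improvement")
```

A gain of roughly 4.6 points, or about a 22 percent relative improvement, is large enough that leakage from browsing would matter, which is why the footnote about blocking answer sources deserves attention.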

Even though early results seem promising overall, experts and academics who might rely on SR models for rigorous research should take the time to verify thoroughly that the model actually produced an accurate result rather than assuming it is correct. And if you’re using the models outside your own domain of knowledge, be careful about accepting any results as accurate without independent verification.

Pricing

For ChatGPT subscribers, access to o3 and o4-mini is included with the subscription. On the API side (for developers who integrate the models into their apps), OpenAI has set o3’s pricing at $10 per million input tokens and $40 per million output tokens, with a discounted rate of $2.50 per million for cached inputs. This represents a significant reduction from o1’s pricing structure of $15/$60 per million input/output tokens—effectively a 33 percent price cut while delivering what OpenAI claims is improved performance.
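The “33 percent price cut” is easy to check from the published per-million-token prices. The sketch below computes the reduction from o1 to o3 for input and output tokens, plus the discount that cached inputs get relative to o3’s regular input rate. The prices come from the paragraph above; the helper name is illustrative.

```python
# API prices in USD per million tokens, as reported above.
O1 = {"input": 15.00, "output": 60.00}
O3 = {"input": 10.00, "output": 40.00, "cached_input": 2.50}

def percent_cut(old: float, new: float) -> float:
    """Percentage reduction going from the old price to the new price."""
    return (old - new) / old * 100

for kind in ("input", "output"):
    print(f"{kind}: {percent_cut(O1[kind], O3[kind]):.1f}% cheaper than o1")

# Cached inputs versus o3's regular input price.
print(f"cached input: {percent_cut(O3['input'], O3['cached_input']):.0f}% off")
```

Both input and output prices drop by one-third ($15 to $10 and $60 to $40), and cached inputs cost a quarter of the regular input rate.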

The more economical o4-mini costs $1.10 per million input tokens and $4.40 per million output tokens, with cached inputs priced at $0.275 per million tokens. This maintains the same pricing structure as its predecessor o3-mini, suggesting OpenAI is delivering improved capabilities without raising costs for its smaller reasoning model.
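To see what the price gap between the two models means for a real bill, the sketch below estimates the cost of a single hypothetical workload on each model using the prices quoted above. The token counts are invented for illustration. It assumes that `cached_tokens` is the share of input tokens billed at the cached rate; also note that OpenAI bills a reasoning model’s hidden “thinking” tokens as output tokens, so output counts for SR models can exceed the length of the visible reply.

```python
# API prices in USD per million tokens, as reported above.
PRICES = {
    "o3":      {"input": 10.00, "cached_input": 2.50,  "output": 40.00},
    "o4-mini": {"input": 1.10,  "cached_input": 0.275, "output": 4.40},
}

def request_cost(model: str, input_tokens: int, cached_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD; cached_tokens is the part of input_tokens billed at the cached rate."""
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    p = PRICES[model]
    uncached = input_tokens - cached_tokens
    total = (uncached * p["input"]
             + cached_tokens * p["cached_input"]
             + output_tokens * p["output"])
    return total / 1_000_000

# Hypothetical workload: 1M input tokens (half cached) and 200K output tokens.
for model in PRICES:
    print(f"{model}: ${request_cost(model, 1_000_000, 500_000, 200_000):.4f}")
```

Because every o4-mini rate is roughly a ninth of the corresponding o3 rate, the same workload costs about a ninth as much on the smaller model.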

Codex CLI

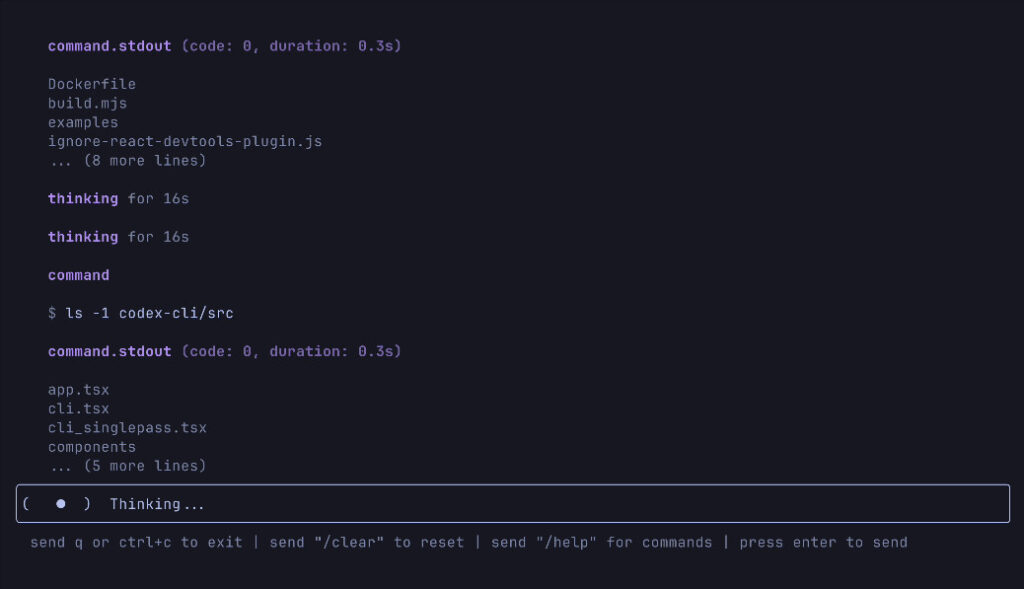

OpenAI also introduced an experimental terminal application called Codex CLI, described as “a lightweight coding agent you can run from your terminal.” The open source tool connects the models to users’ computers and local code. Alongside this release, the company announced a $1 million grant program offering API credits for projects using Codex CLI.

A screenshot of OpenAI’s new Codex CLI tool in action, taken from GitHub. Credit: OpenAI

Codex CLI somewhat resembles Claude Code, an agent Anthropic launched alongside Claude 3.7 Sonnet in February. Both are terminal-based coding assistants that run directly from a console and can interact with local codebases. Claude Code was Anthropic’s first venture into agentic tools, allowing Claude to search through codebases, edit files, write and run tests, and execute command-line operations.

Codex CLI is one more step toward OpenAI’s goal of making autonomous agents that can execute multistep complex tasks on behalf of users. Let’s hope all the vibe coding it produces isn’t used in high-stakes applications without detailed human oversight.
