Music labels will regret coming for the Internet Archive, sound historian says

But David Seubert, who manages sound collections at the University of California, Santa Barbara library, told Ars that he frequently used the project as an archive and not just to listen to the recordings.

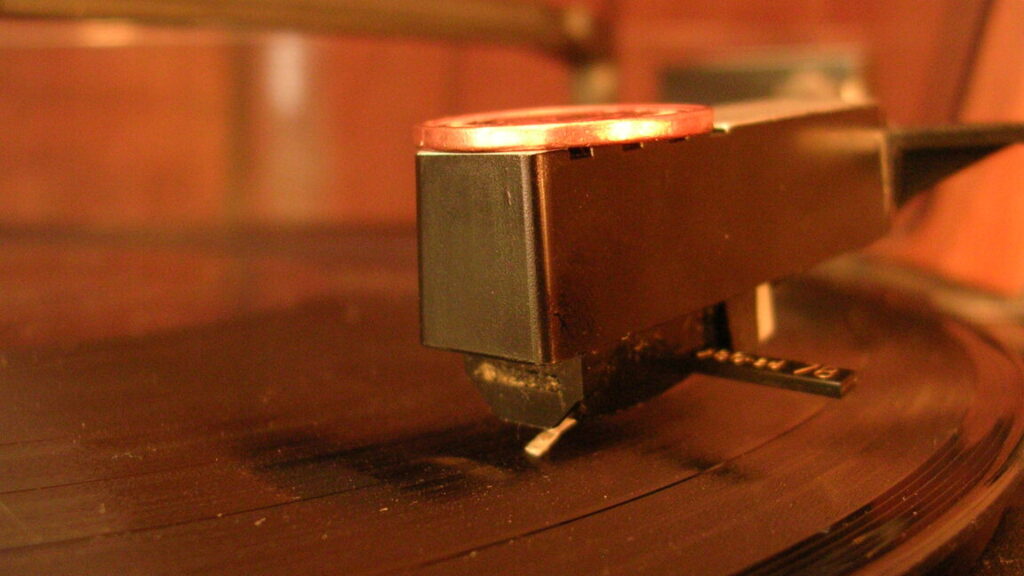

For Seubert, the videos that IA records of the 78 RPM albums capture more than audio of a certain era. Researchers like him want to look at the label, check out the copyright information, and note the catalogue numbers, he said.

“It has all this information there,” Seubert said. “I don’t even necessarily need to hear it,” he continued, adding, “just seeing the physicality of it, it’s like, ‘Okay, now I know more about this record.’”

Music publishers suing IA argue that all the songs included in their dispute (and likely many more, since the Great 78 Project spans 400,000 recordings) “are already available for streaming or downloading from numerous services.”

“These recordings face no danger of being lost, forgotten, or destroyed,” their filing claimed.

But Nathan Georgitis, the executive director of the Association for Recorded Sound Collections (ARSC), told Ars that you just don’t see 78 RPM records out in the world anymore. Even in record stores selling used vinyl, these recordings will be hidden “in a few boxes under the table behind the tablecloth,” Georgitis suggested. And in “many” cases, “the problem for libraries and archives is that those recordings aren’t necessarily commercially available for re-release.”

That “means that those recordings, those artists, the repertoire, the recorded sound history in itself—meaning the labels, the producers, the printings—all of that history kind of gets obscured from view,” Georgitis said.

Currently, libraries trying to preserve this history must control access to audio collections, Georgitis said. He sees IA’s Great 78 Project as a legitimate archive because, unlike a streaming service, where content may be inconsistently available, IA’s “mission is to preserve and provide access to content over time.”
