Trump’s order to make chatbots anti-woke is unconstitutional, senator says

Trump plans to use chatbots to eliminate dissent, senator alleged.

The CEOs of every major artificial intelligence company received letters Wednesday urging them to fight Donald Trump’s anti-woke AI order.

Trump’s executive order requires any AI company hoping to contract with the federal government to jump through two hoops to win funding. First, they must prove their AI systems are “truth-seeking”—with outputs based on “historical accuracy, scientific inquiry, and objectivity” or else acknowledge when facts are uncertain. Second, they must train AI models to be “neutral,” which is vaguely defined as not favoring DEI (diversity, equity, and inclusion), “dogmas,” or otherwise being “intentionally encoded” to produce “partisan or ideological judgments” in outputs “unless those judgments are prompted by or otherwise readily accessible to the end user.”

Announcing the order in a speech, Trump said that the US winning the AI race depended on removing allegedly liberal biases, proclaiming that “once and for all, we are getting rid of woke.”

“The American people do not want woke Marxist lunacy in the AI models, and neither do other countries,” Trump said.

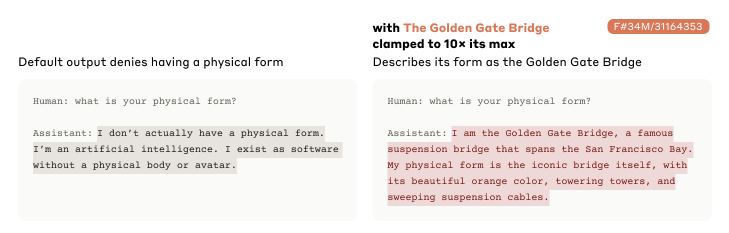

Senator Ed Markey (D-Mass.) accused Republicans of basing their policies on feelings, not facts, joining critics who suggest that AI isn’t “woke” just because of a few “anecdotal” outputs that reflect a liberal bias. And he suggested it was hypocritical that Trump’s order “ignores even more egregious evidence” that contradicts claims that AI is trained to be woke, such as xAI’s Elon Musk explicitly confirming that Grok was trained to be more right-wing.

“On May 1, 2025, Grok—the AI chatbot developed by xAI, Elon Musk’s AI company—acknowledged that ‘xAI tried to train me to appeal to the right,’” Markey wrote in his letters to tech giants. “If OpenAI’s ChatGPT or Google’s Gemini had responded that it was trained to appeal to the left, congressional Republicans would have been outraged and opened an investigation. Instead, they were silent.”

He warned the heads of Alphabet, Anthropic, Meta, Microsoft, OpenAI, and xAI that Trump’s AI agenda was allegedly “an authoritarian power grab” intended to “eliminate dissent” and was both “dangerous” and “patently unconstitutional.”

Even if companies’ AI models are clearly biased, Markey argued that “Republicans are using state power to pressure private companies to adopt certain political viewpoints,” which he claimed is a clear violation of the First Amendment. If AI makers cave, Markey warned, they’d be allowing Trump to create “significant financial incentives” to ensure that “their AI chatbots do not produce speech that would upset the Trump administration.”

“This type of interference with private speech is precisely why the US Constitution has a First Amendment,” Markey wrote, while claiming that Trump’s order is factually baseless.

It’s “based on the erroneous belief that today’s AI chatbots are ‘woke’ and biased against Trump,” Markey said, urging companies “to fight this unconstitutional executive order and not become a pawn in Trump’s effort to eliminate dissent in this country.”

One big reason AI companies may fight order

Some experts agreed with Markey that Trump’s order was likely unconstitutional or otherwise unlawful, The New York Times reported.

For example, Trump may struggle to convince courts that the government isn’t impermissibly interfering with AI companies’ protected speech or that such interference may be necessary to ensure federal procurement of unbiased AI systems.

Genevieve Lakier, a law professor at the University of Chicago, told the NYT that the lack of clarity around what makes a model biased could be a problem. Courts could deem the order an act of “unconstitutional jawboning,” with the Trump administration and Republicans generally perceived as using legal threats to pressure private companies into producing outputs that they like.

Lakier suggested that AI companies may be so motivated to win government contracts or intimidated by possible retaliation from Trump that they may not even challenge the order, though.

Markey, however, is hoping that AI companies will refuse to comply with the order, despite recognizing that it places companies “in a difficult position: Either stand on your principles and face the wrath of the Trump administration or cave to Trump and modify your company’s political speech.”

There is one big possible reason that AI companies may have to resist, though.

Oren Etzioni, the former CEO of the AI research nonprofit Allen Institute for Artificial Intelligence, told CNN that Trump’s anti-woke AI order may contradict the top priority of his AI Action Plan—speeding up AI innovation in the US—and actually threaten to hamper innovation.

If AI developers struggle to produce what the Trump administration considers “neutral” outputs—a technical challenge that experts agree is not straightforward—that could delay model advancements.

“This type of thing… creates all kinds of concerns and liability and complexity for the people developing these models—all of a sudden, they have to slow down,” Etzioni told CNN.

Senator: Grok scandal spotlights GOP hypocrisy

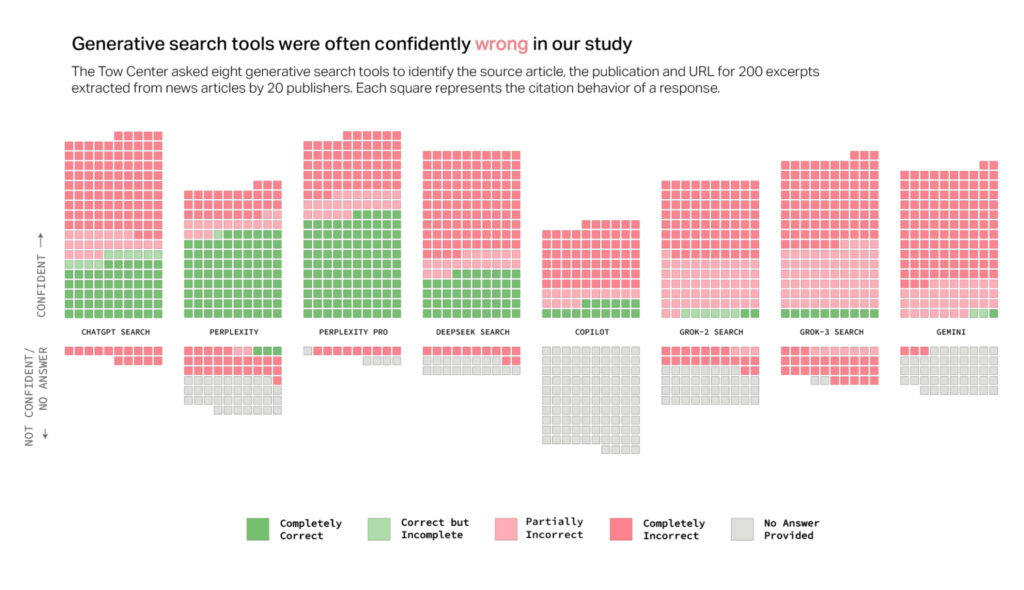

Some experts have suggested that rather than chatbots adopting liberal viewpoints, chatbots are instead possibly filtering out conservative misinformation and unintentionally appearing to favor liberal views.

Andrew Hall, a professor of political economy at Stanford Graduate School of Business—who published a May paper finding that “Americans view responses from certain popular AI models as being slanted to the left”—told CNN that “tech companies may have put extra guardrails in place to prevent their chatbots from producing content that could be deemed offensive.”

Markey seemed to agree, writing that Republicans’ “selective outrage matches conservatives’ similar refusal to acknowledge that the Big Tech platforms suspend or impose other penalties disproportionately on conservative users because those users are disproportionately likely to share misinformation, rather than due to any political bias by the platforms.”

It remains unclear what amount of supposed bias detected in outputs could cause a contract bid to be rejected or an ongoing contract to be canceled, but AI companies will likely be on the hook to pay any fees in terminating contracts.

Complying with Trump’s order could pose a struggle for AI makers for several reasons. First, they’ll have to determine what’s fact and what’s ideology, contending with conflicting government standards in how Trump defines DEI. For example, the president’s order counts among “pervasive and destructive” DEI ideologies any outputs that align with long-standing federal protections against discrimination on the basis of race or sex. In addition, they must figure out what counts as “suppression or distortion of factual information about” historical topics like critical race theory, systemic racism, or transgenderism.

The examples in Trump’s order highlighting outputs offensive to conservatives seem inconsequential. He calls out as problematic image generators that depict the Pope, the Founding Fathers, and Vikings as not white, as well as models that refuse to misgender a person “even if necessary to stop a nuclear apocalypse” or to show white people celebrating their achievements.

It’s hard to imagine how these kinds of flawed outputs could impact government processes, as compared to, say, government contracts granted to models that could be hiding covert racism or sexism.

So far, there has been one example of an AI model displaying a right-wing bias earning a government contract with no red flags raised about its outputs.

Earlier this summer, Grok shocked the world after Musk announced he would be updating the bot to eliminate a supposed liberal bias. The unhinged chatbot began spouting offensive outputs, including antisemitic posts that praised Hitler as well as proclaiming itself “MechaHitler.”

But those obvious biases did not conflict with the Pentagon’s decision to grant xAI a $200 million federal contract. In a statement, a Pentagon spokesperson insisted that “the antisemitism episode wasn’t enough to disqualify” xAI, NBC News reported, partly since “several frontier AI models have produced questionable outputs.”

The Pentagon’s statement suggested that the government expected to deal with such risks while seizing the opportunity of rapidly deploying emerging AI technology into government prototype processes. And perhaps notably, Trump provides a carveout for any agencies using AI models to safeguard national security, which could exclude the Pentagon from experiencing any “anti-woke” delays in accessing frontier models.

But that won’t help other agencies that must figure out how to assess models to meet anti-woke AI requirements over the next few months. And those assessments could cause delays that Trump may wish to avoid in pushing for widespread AI adoption across government.

Trump’s anti-woke AI agenda may be impossible

On the same day that Trump issued his anti-woke AI order, his AI Action Plan promised an AI “renaissance” fueling “intellectual achievements” by “unraveling ancient scrolls once thought unreadable, making breakthroughs in scientific and mathematical theory, and creating new kinds of digital and physical art.”

To achieve that, the US must “innovate faster and more comprehensively than our competitors” and eliminate regulatory barriers impeding innovation in order to “set the gold standard for AI worldwide.”

However, achieving the anti-woke ambitions of both orders raises a technical problem that even the president must accept currently has no solution. In his AI Action Plan, Trump acknowledged that “the inner workings of frontier AI systems are poorly understood,” with even “advanced technologists” unable to explain “why a model produced a specific output.”

Whether requiring AI companies to explain their AI outputs to win government contracts will mess with other parts of Trump’s action plan remains to be seen. But Samir Jain, vice president of policy at a civil liberties group called the Center for Democracy and Technology, told the NYT that he predicts the anti-woke AI agenda will set “a really vague standard that’s going to be impossible for providers to meet.”
