“IG is a drug”: Internal messages may doom Meta at social media addiction trial

Social media addiction test case

A loss could cost social media companies billions and force changes on platforms.

Mark Zuckerberg testifies during the US Senate Judiciary Committee hearing, “Big Tech and the Online Child Sexual Exploitation Crisis,” in 2024.

Anxiety, depression, eating disorders, and death. These can be the consequences for vulnerable kids who get addicted to social media, according to more than 1,000 personal injury lawsuits that seek to punish Meta and other platforms for allegedly prioritizing profits while downplaying child safety risks for years.

Social media companies have faced scrutiny before, with congressional hearings forcing CEOs to apologize, but until now, they’ve never had to convince a jury that they aren’t liable for harming kids.

This week, the first high-profile lawsuit, considered a “bellwether” case that could set meaningful precedent in the hundreds of other complaints, goes to trial. That lawsuit documents the case of a 19-year-old, K.G.M., who hopes the jury will agree that Meta and YouTube caused psychological harm by designing features like infinite scroll and autoplay to push her down a path that she alleged triggered depression, anxiety, self-harm, and suicidality.

TikTok and Snapchat were also targeted by the lawsuit, but both have settled. The Snapchat settlement came last week, while TikTok settled on Tuesday just hours before the trial started, Bloomberg reported.

For now, YouTube and Meta remain in the fight. K.G.M. allegedly started watching YouTube when she was 6 years old and joined Instagram by age 11. She’s fighting to claim untold damages—including potentially punitive damages—to help her family recoup losses from her pain and suffering and to punish social media companies and deter them from promoting harmful features to kids. She also wants the court to require prominent safety warnings on platforms to help parents be aware of the risks.

Platforms failed to blame mom for not reading TOS

A loss could cost social media companies billions, CNN reported.

To avoid that, platforms have alleged that other factors caused K.G.M.’s psychological harm—like school bullies and family troubles—while insisting that Section 230 and the First Amendment protect platforms from being blamed for any harmful content targeted to K.G.M.

They also argued that K.G.M.’s mom never read the terms of service and, therefore, supposedly would not have benefited from posted warnings. And ByteDance, before settling, seemingly tried to pass the buck by claiming that K.G.M. “already suffered mental health harms before she began using TikTok.”

But the judge, Carolyn B. Kuhl, wrote in a ruling denying all platforms’ motions for summary judgment that K.G.M. had presented enough evidence that her claims don’t stem from content for the case to go to trial.

Further, platforms can’t liken warnings buried in terms of service to prominently displayed warnings, Kuhl said, since K.G.M.’s mom testified she would have restricted the minor’s app usage if she were aware of the alleged risks.

Two platforms settling before the trial seems like a good sign for K.G.M. However, Snapchat has not settled other social media addiction lawsuits that it’s involved in, including one raised by school districts, and perhaps is waiting to see how K.G.M.’s case shakes out before taking further action.

To win, K.G.M.’s lawyers will need to “parcel out” how much harm is attributable to each platform’s design features, rather than to the content that was targeted to K.G.M., Clay Calvert, a technology policy expert and senior fellow at a think tank called the American Enterprise Institute, wrote. Internet law expert Eric Goldman told The Washington Post that detailing those harms will likely be K.G.M.’s biggest struggle, since social media addiction has yet to be legally recognized, and tracing who caused what harms may not be straightforward.

However, Matthew Bergman, founder of the Social Media Victims Law Center and one of K.G.M.’s lawyers, told the Post that K.G.M. is prepared to put up this fight.

“She is going to be able to explain in a very real sense what social media did to her over the course of her life and how in so many ways it robbed her of her childhood and her adolescence,” Bergman said.

Internal messages may be “smoking-gun evidence”

The research is unclear on whether social media is harmful for kids or whether social media addiction exists, Tamar Mendelson, a professor at Johns Hopkins Bloomberg School of Public Health, told the Post. And so far, research only shows a correlation between Internet use and mental health, Mendelson noted, which could doom K.G.M.’s case and others’.

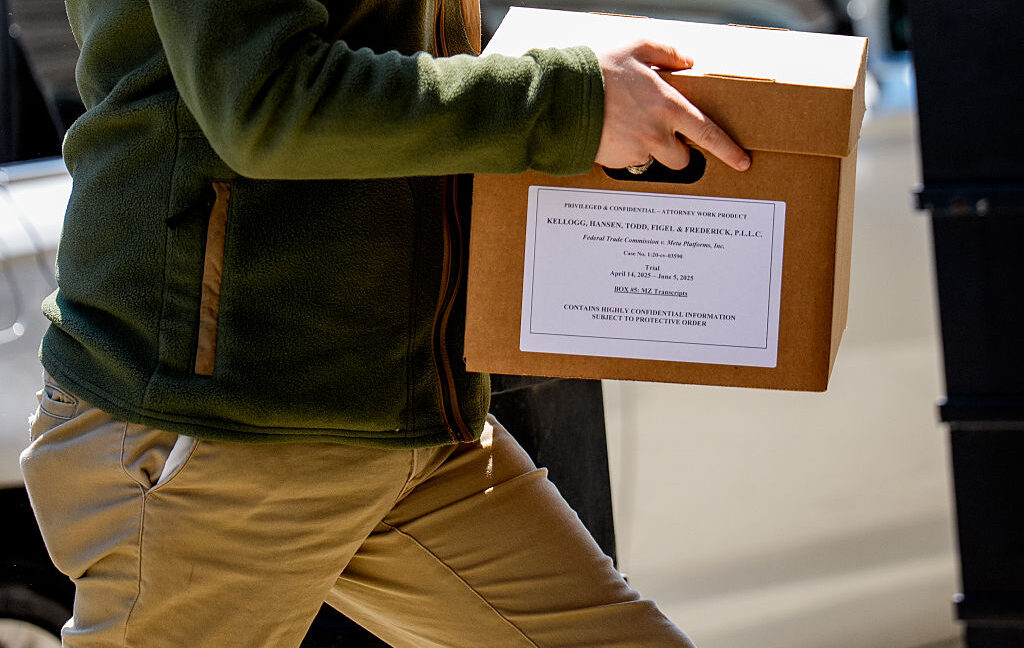

However, social media companies’ internal research might concern a jury, Bergman told the Post. On Monday, the Tech Oversight Project, a nonprofit working to rein in Big Tech, published a report analyzing recently unsealed documents in K.G.M.’s case that supposedly provide “smoking-gun evidence” that platforms “purposefully designed their social media products to addict children and teens with no regard for known harms to their wellbeing”—while putting increased engagement from young users at the center of their business models.

In the report, Sacha Haworth, executive director of The Tech Oversight Project, accused social media companies of “gaslighting and lying to the public for years.”

Most of the recently unsealed documents highlighted in the report came from Meta, which also faces a trial from dozens of state attorneys general on social media addiction this year.

Those documents included an email stating that Mark Zuckerberg—who is expected to testify at K.G.M.’s trial—decided that Meta’s top priority in 2017 was teens who must be locked in to using the company’s family of apps.

The next year, a Facebook internal document showed that the company pondered letting “tweens” access a private mode inspired by the popularity of fake Instagram accounts teens know as “finstas.” That document included an “internal discussion on how to counter the narrative that Facebook is bad for youth and admission that internal data shows that Facebook use is correlated with lower well-being (although it says the effect reverses longitudinally).”

Other allegedly damning documents showed Meta seemingly bragging that “teens can’t switch off from Instagram even if they want to” and an employee declaring, “oh my gosh yall IG is a drug,” likening all social media platforms to “pushers.”

Similarly, a 2020 Google document detailed the company’s plan to keep kids engaged “for life,” despite internal research showing young YouTube users were more likely to “disproportionately” suffer from “habitual heavy use, late night use, and unintentional use” deteriorating their “digital well-being.”

Shorts, YouTube’s feature that rivals TikTok, also is a concern for parents suing, and three years later, documents showed Google choosing to target teens with Shorts, despite research flagging that the “two biggest challenges for teen wellbeing on YouTube” were prominently linked to watching Shorts. Those challenges included Shorts bombarding teens with “low quality content recommendations that can convey & normalize unhealthy beliefs or behaviors” and teens reporting that “prolonged unintentional use” was “displacing valuable activities like time with friends or sleep.”

Bergman told the Post that these documents will help the jury decide if companies owed young users better protections sooner but prioritized profits while pushing off interventions that platforms have more recently introduced amid mounting backlash.

“Internal documents that have been held establishing the willful misconduct of these companies are going to—for the first time—be given a public airing,” Bergman said. “The public is going to know for the first time what social media companies have done to prioritize their profits over the safety of our kids.”

Platforms failed to get experts’ testimony tossed

One seeming advantage K.G.M. has heading into the trial is that tech companies failed to get expert testimony that backs up her claims dismissed.

Platforms tried to exclude testimony from several experts, including Kara Bagot, a board-certified adult, child, and adolescent psychiatrist, as well as Arturo Bejar, a former Meta safety researcher and whistleblower. They claimed that experts’ opinions were irrelevant because they were based on K.G.M.’s interactions with content. They also suggested that child safety experts’ opinions “violate the standards of reliability” since the causal links they draw don’t account for “alternative explanations” and allegedly “contradict the experts’ own statements in non-litigation contexts.”

However, Kuhl ruled that platforms will have the opportunity to counter experts’ opinions at trial, while reminding social media companies that “ultimately, the critical question of causation is one that must be determined by the jury.” Only one expert’s testimony was excluded, that of a licensed clinical psychologist deemed unqualified, the Social Media Victims Law Center noted.

“Testimony by Bagot as to design features that were employed on TikTok as well as on other social media platforms is directly relevant to the question of whether those design features cause the type of harms allegedly suffered by K.G.M. here,” Kuhl wrote.

That means that a jury will get a chance to weigh Bagot’s opinion that “social media overuse and addiction causes or plays a substantial role in causing or exacerbating psychopathological harms in children and youth, including depression, anxiety and eating disorders, as well as internalizing and externalizing psychopathological symptoms.”

The jury will also consider the insights and information Bejar (a fact witness and former consultant for the company) will share about Meta’s internal safety studies. That includes hearing about “his personal knowledge and experience related to how design defects on Meta’s platforms can cause harm to minors (e.g., age verification, reporting processes, beauty filters, public like counts, infinite scroll, default settings, private messages, reels, ephemeral content, and connecting children with adult strangers),” as well as “harms associated with Meta’s platforms including addiction/problematic use, anxiety, depression, eating disorders, body dysmorphia, suicidality, self-harm, and sexualization.”

If K.G.M. can convince the jury that she was not harmed by platforms’ failure to remove content but by companies “designing their platforms to addict kids” and “developing algorithms that show kids not what they want to see but what they cannot look away from,” Bergman thinks her case could become a “data point” for “settling similar cases en masse,” he told Barron’s.

“She is very typical of so many children in the United States—the harms that they’ve sustained and the way their lives have been altered by the deliberate design decisions of the social media companies,” Bergman told the Post.
