Google removes some AI health summaries after investigation finds “dangerous” flaws

Why AI Overviews produces errors

The recurring problems with AI Overviews stem from a design flaw in how the system works. As we reported in May 2024, Google built AI Overviews to show information backed up by top web results from its page ranking system. The company designed the feature this way based on the assumption that highly ranked pages contain accurate information.

However, Google’s page ranking algorithm has long struggled with SEO-gamed content and spam. The system now feeds these unreliable results to its AI model, which then summarizes them with an authoritative tone that can mislead users. Even when the AI draws from accurate sources, the language model can still draw incorrect conclusions from the data, producing flawed summaries of otherwise reliable information.

The technology does not inherently provide factual accuracy. Instead, it reflects whatever inaccuracies exist on the websites Google’s algorithm ranks highly, presenting them with an authority that makes errors appear trustworthy.

Other examples remain active

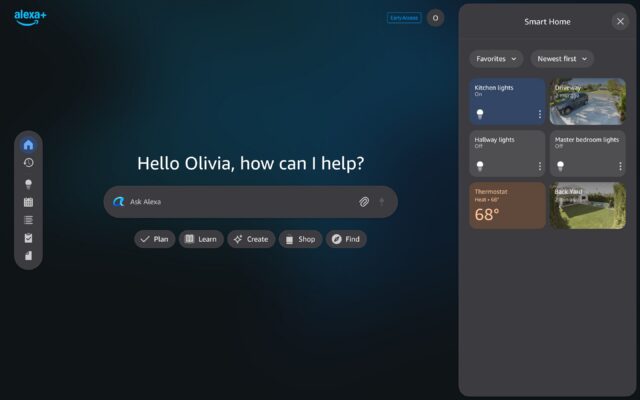

The Guardian found that typing slight variations of the original queries into Google, such as “lft reference range” or “lft test reference range,” still prompted AI Overviews. Hebditch said this was a big worry and that the AI Overviews present a list of tests in bold, making it very easy for readers to miss that these numbers might not even be the right ones for their test.

AI Overviews still appear for other examples that The Guardian originally highlighted to Google. When asked why these AI Overviews had not also been removed, Google said they linked to well-known and reputable sources and informed people when it was important to seek out expert advice.

Google said AI Overviews only appear for queries where it has high confidence in the quality of the responses. The company constantly measures and reviews the quality of its summaries across many different categories of information, it added.

This is not the first controversy for AI Overviews. The feature has previously told people to put glue on pizza and eat rocks. It has proven unpopular enough that users have sought ways to turn it off, discovering that inserting curse words into search queries disables AI Overviews entirely.
