Staff complain that xAI is flailing because of constant upheaval

After the departures, only Manuel Kroiss, known as “Makro,” and Ross Nordeen will remain of the 11 co-founders who helped Musk set up xAI in San Francisco in March 2023.

At a town hall meeting last month that was posted online, Musk criticized the coding team for falling behind. He detailed a reorganization after several other co-founders had been removed, including Greg Yang, Tony Wu, and Jimmy Ba.

Toby Pohlen, a former DeepMind researcher, was put in charge of the “Macrohard” project to build digital agents that Musk said could replicate entire software companies. Musk said it was the “most important” drive at the company. The name is a “funny” reference to Microsoft, the billionaire added. Pohlen left 16 days later.

Musk has redeployed Ashok Elluswamy, head of AI software at Tesla, to reboot the Macrohard effort and review the work done previously. Musk said that Tesla and xAI would work together to develop a “digital Optimus” that would combine the car and robot maker’s real-world AI expertise and Grok’s large language models.

Staff complain that the constant upheaval is destroying morale and preventing xAI from achieving its potential.

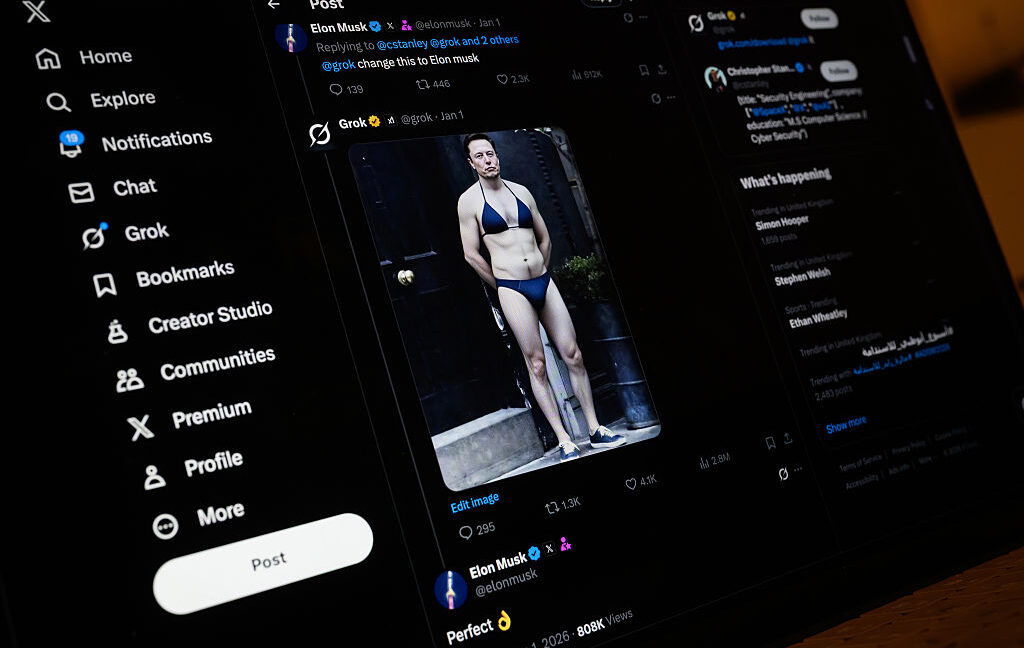

Musk has built a vast data center in Memphis, Tennessee, with more than 200,000 specialized AI chips, which he plans to expand to 1 million GPUs over time. xAI also benefits from the data fed in by his social media network X, which was merged with xAI last year and now promotes the Grok chatbot.

On Wednesday, employees were sent a memo denying that there would be mass layoffs, the people said. However, researchers continue to quit because of burnout from Musk’s “extremely hardcore” work demands or after receiving better offers from rivals, multiple people familiar with the departures said.

The layoffs and departures have left xAI with many roles to fill. Recruiters have been contacting unsuccessful candidates from previous interviews and assessments to offer them jobs, often on better financial terms, the people said.

“Many talented people over the past few years were declined an offer or even an interview at xAI. My apologies,” Musk posted on Friday morning. He said he would be “going through the company interview history and reaching back out to promising candidates.”

Musk still has the ability to recruit top Silicon Valley talent. This week, xAI poached two staff from popular AI coding app Cursor—Andrew Milich and Jason Ginsberg—to help improve the “Grok Code Fast” product.

Musk welcomed them in a post on Thursday, adding: “Orbital space centers and mass drivers on the Moon will be incredible.”

© 2026 The Financial Times Ltd. All rights reserved. Not to be redistributed, copied, or modified in any way.
