97% of CrowdStrike systems are back online; Microsoft suggests Windows changes

falcon punch

Kernel access gives security software a lot of power, but not without problems.

A bad update to CrowdStrike’s Falcon security software crashed millions of Windows PCs last week.

CrowdStrike

CrowdStrike CEO George Kurtz said Thursday that 97 percent of all Windows systems running its Falcon sensor software were back online, a week after a faulty update to the corporate security software delayed flights and took down emergency response systems, among many other disruptions. The update, which caused Windows PCs to throw the dreaded Blue Screen of Death and reboot, affected about 8.5 million systems by Microsoft’s count, leaving roughly 250,000 that still need to be brought back online.
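The "roughly 250,000" figure follows from the two numbers quoted above, assuming the 97 percent applies to Microsoft's 8.5 million estimate. A quick check, with variable names chosen purely for illustration:

```python
# Figures as quoted in the article; the variable names are illustrative.
affected_systems = 8_500_000   # Microsoft's estimate of affected Windows systems
percent_back_online = 97       # share reported as recovered by CrowdStrike's CEO

# Integer arithmetic avoids floating-point rounding noise.
still_offline = affected_systems * (100 - percent_back_online) // 100

print(f"{still_offline:,} systems still offline")  # prints "255,000 systems still offline"
```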

Microsoft VP John Cable said in a blog post that the company has “engaged over 5,000 support engineers working 24×7” to help clean up the mess created by CrowdStrike’s update and hinted at Windows changes that could help—if they don’t run afoul of regulators, anyway.

“This incident shows clearly that Windows must prioritize change and innovation in the area of end-to-end resilience,” wrote Cable. “These improvements must go hand in hand with ongoing improvements in security and be in close cooperation with our many partners, who also care deeply about the security of the Windows ecosystem.”

Cable pointed to VBS enclaves and Azure Attestation as examples of products that could keep Windows secure without requiring the kernel-level access that most Windows-based security products (including CrowdStrike’s Falcon sensor) rely on now. But he stopped short of outlining what specific changes might be made to Windows, saying only that Microsoft would continue to “harden our platform, and do even more to improve the resiliency of the Windows ecosystem, working openly and collaboratively with the broad security community.”

When running in kernel mode rather than user mode, security software has full access to a system’s hardware and software, which makes it more powerful and flexible; this also means that a bad update like CrowdStrike’s can cause a lot more problems.

Recent versions of macOS have deprecated third-party kernel extensions for exactly this reason, one explanation for why Macs weren’t taken down by the CrowdStrike update. But past efforts by Microsoft to lock third-party security companies out of the Windows kernel—most recently in the Windows Vista era—have been met with pushback from European Commission regulators. That level of skepticism is warranted, given Microsoft’s past (and continuing) record of using Windows’ market position to push its own products and services. Any present-day attempt to restrict third-party vendors’ access to the Windows kernel would be likely to draw similar scrutiny.

Microsoft has also had plenty of its own security problems to deal with recently, to the point that it has promised to restructure the company to make security more of a focus.

CrowdStrike’s aftermath

CrowdStrike has made its own promises in the wake of the outage, including more thorough testing of updates and a phased-rollout system that could prevent a bad update file from causing quite as much trouble as the one last week did. The company’s initial incident report pointed to a lapse in its testing procedures as the cause of the problem.
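CrowdStrike's own design isn't described here, so the following is only a generic sketch of how a phased rollout can limit the reach of a bad update, not the company's implementation. The stage percentages, host names, and function names are all hypothetical.

```python
import hashlib

# Hypothetical rollout stages: cumulative percentage of hosts eligible at each stage.
STAGES = [1, 10, 50, 100]

def bucket(host_id: str) -> int:
    """Map a host ID to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(host_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100

def should_receive(host_id: str, stage: int) -> bool:
    """Return True if the host is eligible for the update at the given stage index."""
    return bucket(host_id) < STAGES[stage]

# Made-up fleet of 1,000 hosts, used only to show how eligibility grows per stage.
hosts = [f"host-{n}" for n in range(1000)]
for stage, pct in enumerate(STAGES):
    eligible = sum(should_receive(h, stage) for h in hosts)
    print(f"stage {stage}: target {pct}%, {eligible} of {len(hosts)} hosts eligible")
```

Because each bucket is derived from a hash of the host ID, a host that gets the update in an early stage stays eligible in later ones, and a bad file caught during the first stage would reach only a small slice of machines.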

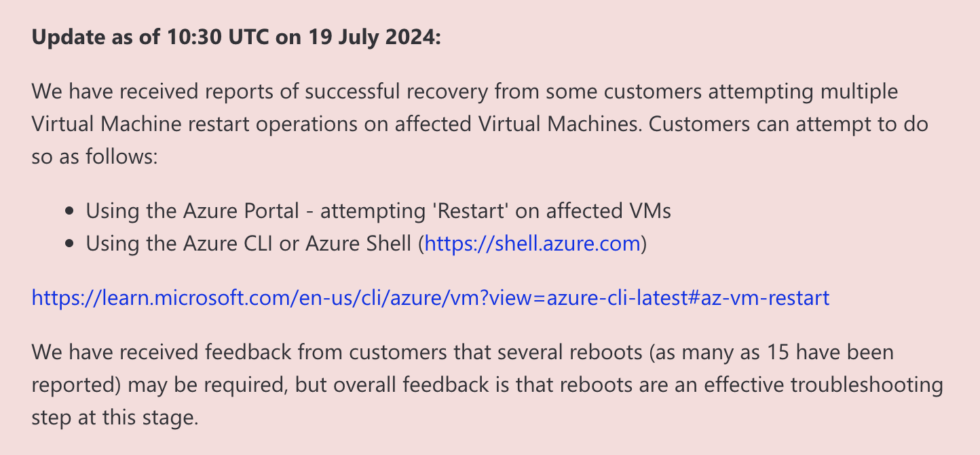

Meanwhile, recovery continues. Some systems could be fixed simply by rebooting, sometimes as many as 15 times, which could give a system a chance to grab a new update file before it crashed again. For the rest, IT admins were left to either restore them from backups or delete the bad update file manually. Microsoft published a bootable tool that could help automate the process of deleting that file, but it still required laying hands on every single affected Windows install, whether on a virtual machine or a physical system.

And not all of CrowdStrike’s remediation solutions have been well received. The company sent out $10 Uber Eats promo codes to cover some of its partners’ “next cup of coffee or late night snack,” which occasioned some eye-rolling on social media sites (the code was also briefly unusable because Uber flagged it as fraudulent, according to a CrowdStrike representative). For context, analytics company Parametrix Insurance estimated that the outage cost Fortune 500 companies roughly $5.4 billion.
