Uncertainty loomed as FDA advisors met to discuss this year’s COVID shot

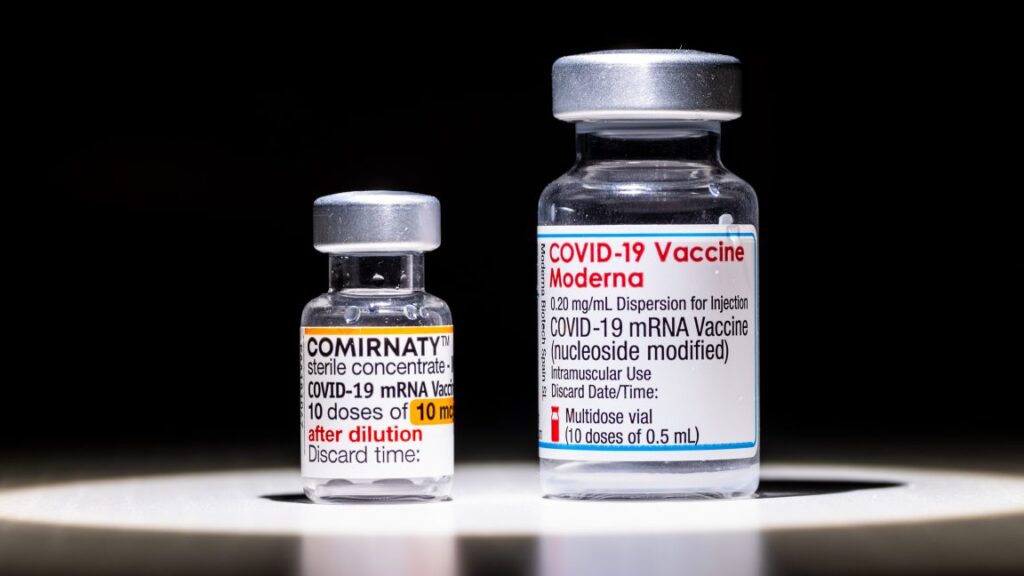

Calling it a “practical question,” he asked, “If we were to change strains, can we assume that age-specific licensure won’t change for any of these [vaccine] products?” Currently, COVID-19 boosters are accessible to those aged 6 months and up.

Weir reiterated that there was no answer. Another FDA official, David Kaslow, chimed in to say only, “Rest assured that we’re engaging with the manufacturers on this topic.”

As a follow-up to that exchange, VRBPAC member and infectious disease expert Eric Rubin of Harvard University shot down the FDA’s plan to use randomized placebo-controlled trials for licensure for healthy children and adults. The plethora of observational data—aka real-world data—on the boosters shows clear efficacy, Rubin pointed out. That suggests that requiring people in a trial to take placebos despite the availability of a clearly effective treatment could be unethical.

It suggests “that a randomized controlled trial (RCT) has no equipoise right now, and that you cannot do one,” Rubin said. “I don’t think the RCT is feasible,” he added.

The selection

While the pushback and the questions lingered, the committee still had to select a strain. For now, omicron continues to reign, and variants in the JN.1 lineage remain dominant. That is largely unchanged from last year, when vaccine makers were advised to target their seasonal shots against the JN.1 lineage generally, or KP.2, the leading variant in the JN.1 lineage at the time, specifically.

This year, advisors unanimously voted to stick with vaccines that target the JN.1 lineage, in line with recommendations from the World Health Organization. The question of targeting the JN.1 lineage was the only voting question the FDA tasked them with. But there was open discussion on a more specific recommendation. Given the regulatory uncertainty, advisors were divided on whether to stick with the JN.1 and KP.2 formulations from last year or recommend switching to the latest leading variant in the JN.1 family, LP.8.1.

Shortly after the meeting, the FDA announced that it would essentially leave it up to manufacturers: they could stick with JN.1 or KP.2 but should, if feasible, switch to LP.8.1.

“The COVID-19 vaccines for use in the United States beginning in fall 2025 should be monovalent JN.1-lineage-based COVID-19 vaccines (2025–2026 Formula), preferentially using the LP.8.1 strain,” it said.
