Why synthetic emerald-green pigments degrade over time

Perhaps most relevant to this current paper is a 2020 study in which scientists analyzed Munch’s The Scream, which was showing alarming signs of degradation. They concluded the damage was not the result of exposure to light, but humidity—specifically, from the breath of museum visitors, perhaps as they lean in to take a closer look at the master’s brushstrokes.

Let there be (X-ray) light

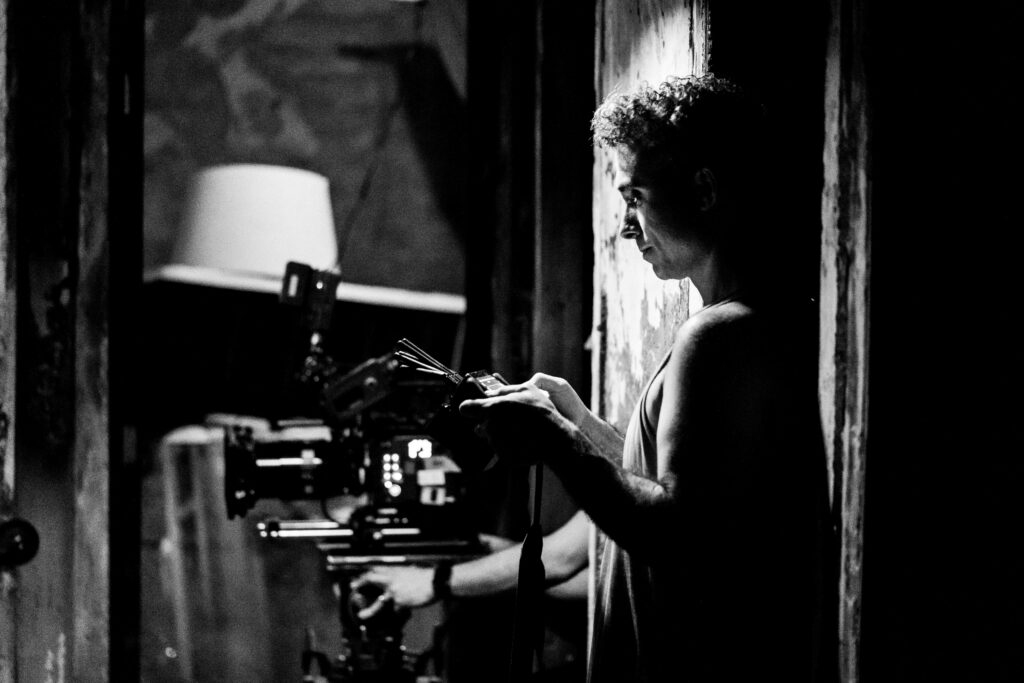

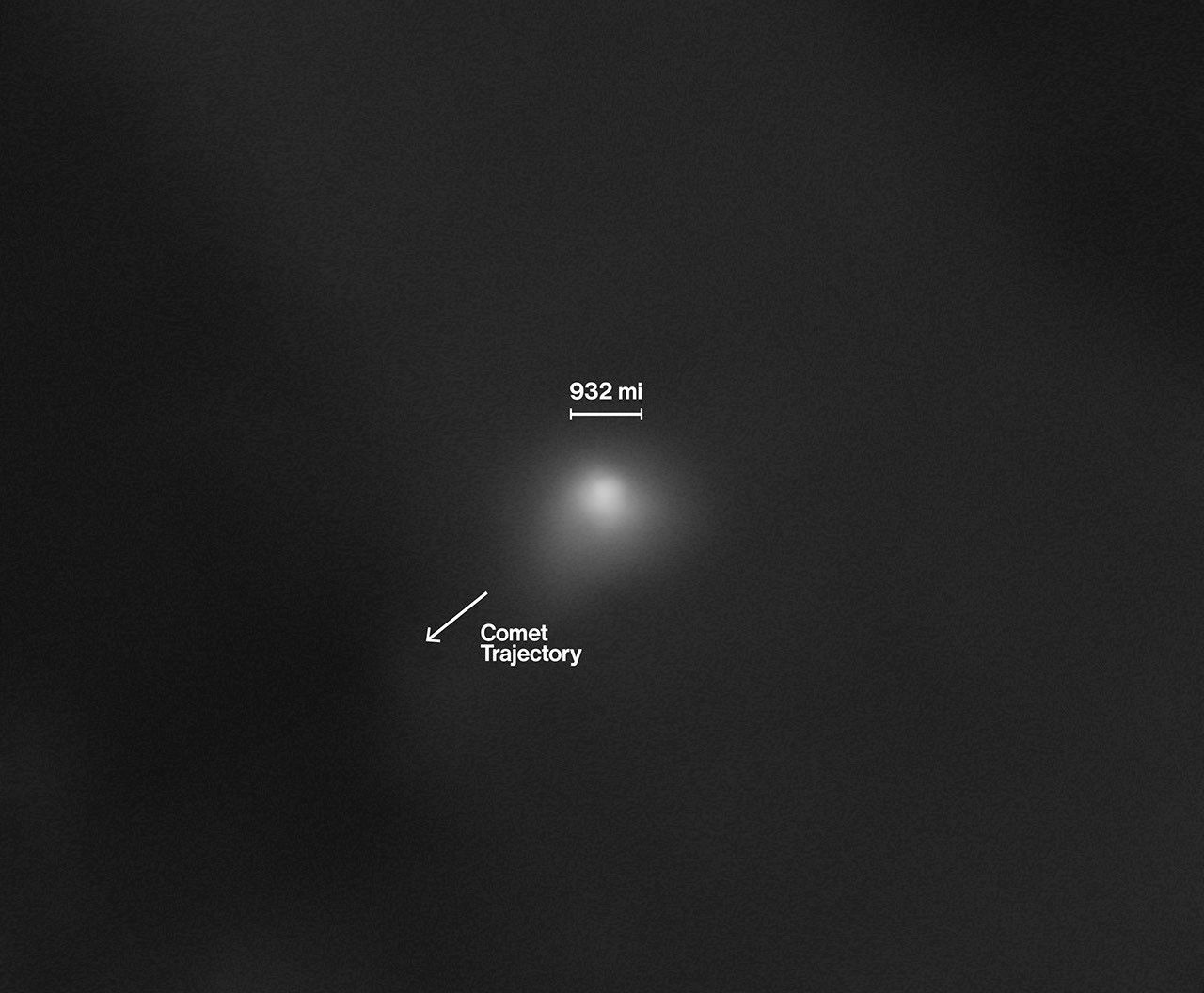

Co-author Letizia Monico during the experiments at the European Synchrotron. ESRF

Emerald-green pigments are particularly prone to degradation, so that’s the pigment the authors of this latest paper decided to analyze. “It was already known that emerald-green decays over time, but we wanted to understand exactly the role of light and humidity in this degradation,” said co-author Letizia Monico of the University of Perugia in Italy.

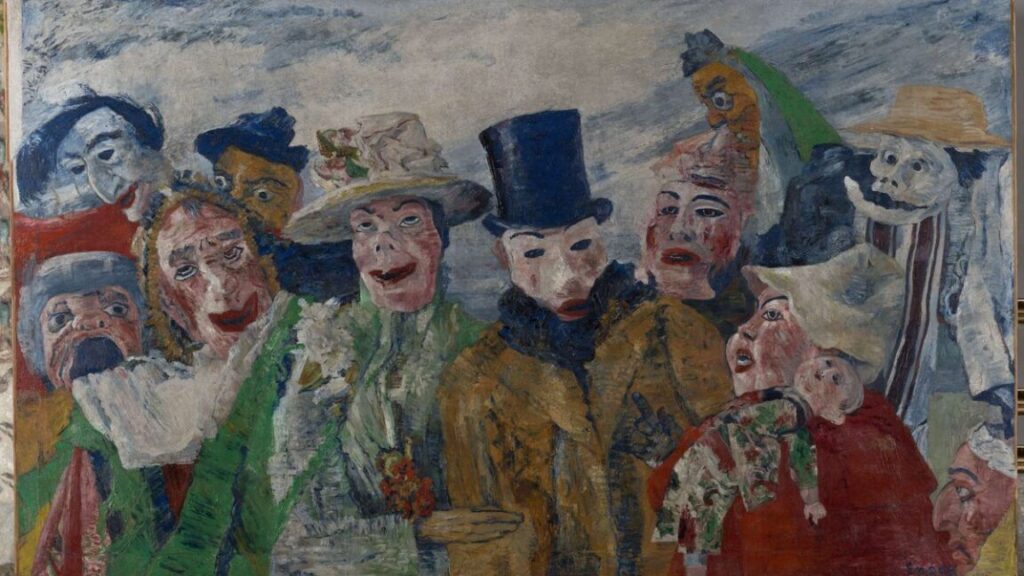

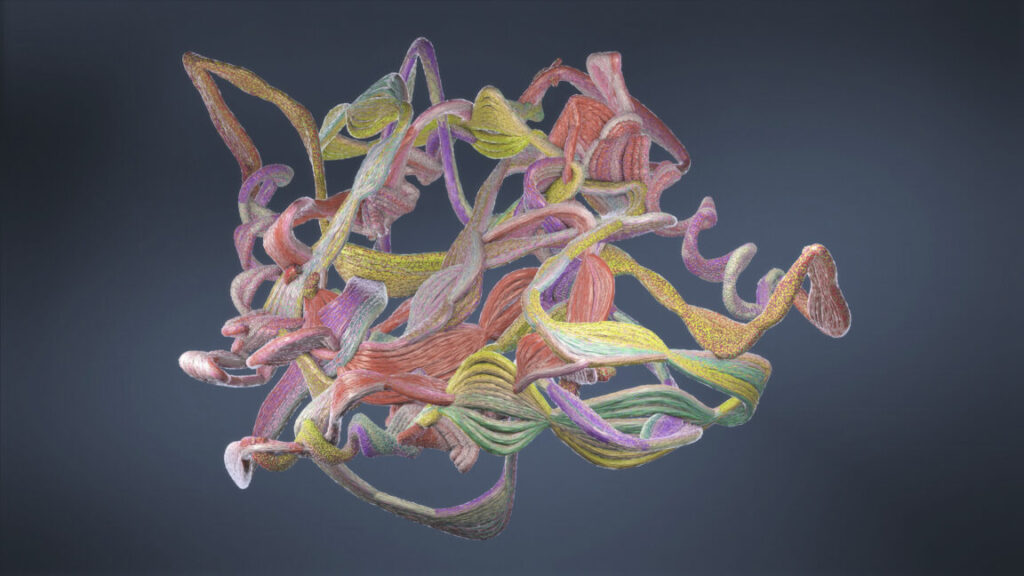

The first step was to collect emerald-green paint microsamples with a scalpel and stereomicroscope from an artwork of that period—in this case, The Intrigue (1890) by James Ensor, currently housed in the Royal Museum of Fine Arts, in Antwerp, Belgium. The team analyzed the untreated samples using Fourier transform infrared imaging, then embedded the samples in polyester resin for synchrotron radiation X-ray analysis. They conducted separate analyses on both commercial and historical samples of emerald-green pigment powders and paint tubes, including one from a museum collection of paint tubes used by Munch.

Next, the authors created their own paint mockups by mixing commercial emerald-green pigment powders and their lab-made powders with linseed oil, and then applied the concoctions to polycarbonate substrates. They also squeezed paint from the Munch paint tube onto a substrate. Once the mockups were dry, thin samples were sliced from each mockup and also analyzed with synchrotron radiation. Then the mockups were subjected to two aging protocols designed to determine the effects of UV light (to simulate indoor lighting) and humidity on the pigments.

The results: In the mockups, light and humidity trigger different degradation pathways in emerald-green paints. Humidity results in the formation of arsenolite, making the paint brittle and prone to flaking. Light dulls the color by causing trivalent arsenic already in the pigment to oxidize into pentavalent compounds, forming a thin white layer on the surface. Those findings are consistent with the analyzed samples taken from The Intrigue, confirming the degradation is due to photo-oxidation. Light, it turns out, is the greatest threat to that particular painting, and possibly other masterpieces from the same period.

Science Advances, 2025. DOI: 10.1126/sciadv.ady1807 (About DOIs).

Why synthetic emerald-green pigments degrade over time Read More »