Nvidia is ditching dedicated G-Sync modules to push back against FreeSync’s ubiquity

sync or swim —

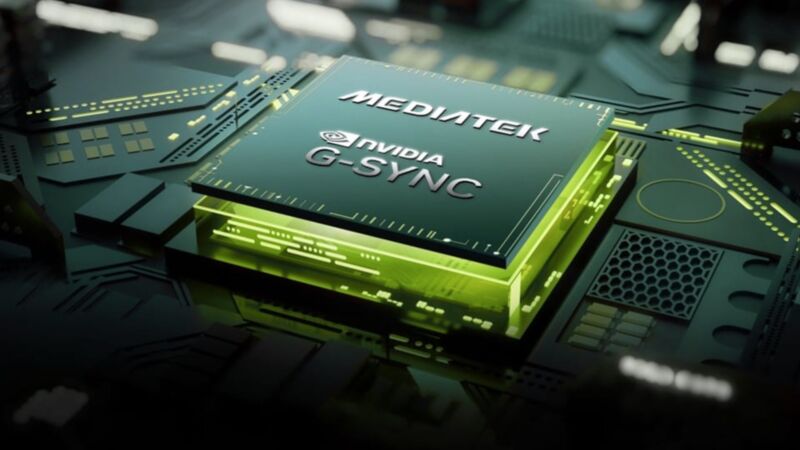

But G-Sync will still require specific G-Sync-capable MediaTek scaler chips.

Nvidia

Back in 2013, Nvidia introduced a new technology called G-Sync to eliminate screen tearing and stuttering effects and reduce input lag when playing PC games. The company accomplished this by tying your display’s refresh rate to the actual frame rate of the game you were playing, and similar variable refresh-rate (VRR) technology has become a mainstay even in budget monitors and TVs today.

The issue for Nvidia is that G-Sync isn’t what has been driving most of that adoption. G-Sync has always required extra dedicated hardware inside displays, increasing the costs for both users and monitor manufacturers. The VRR technology in most low-end to mid-range screens these days is usually some version of the royalty-free AMD FreeSync or the similar VESA Adaptive-Sync standard, both of which provide G-Sync’s most important features without requiring extra hardware. In 2019, Nvidia more or less acknowledged that the free-to-use, cheap-to-implement VRR technologies had won when it announced its “G-Sync Compatible” certification tier for FreeSync monitors. The list of G-Sync Compatible screens now vastly outnumbers the list of G-Sync and G-Sync Ultimate screens.

Today, Nvidia is announcing a change that’s meant to keep G-Sync alive as its own separate technology while eliminating the requirement for expensive additional hardware. Nvidia says it’s partnering with chipmaker MediaTek to build G-Sync capabilities directly into scaler chips that MediaTek is creating for upcoming monitors. G-Sync modules ordinarily replace these scaler chips, but they’re entirely separate boards with expensive FPGA chips and dedicated RAM.

These new MediaTek scalers will support all the same features that current dedicated G-Sync modules do. Nvidia says that three G-Sync monitors with MediaTek scaler chips inside will launch “later this year”: the Asus ROG Swift PG27AQNR, the Acer Predator XB273U F5, and the AOC AGON PRO AG276QSG2. These are all 27-inch 1440p displays with maximum refresh rates of 360 Hz.

As of this writing, none of these companies has announced pricing for these displays. The current Asus PG27AQN has a traditional G-Sync module and a 360 Hz refresh rate and goes for around $800, so we’d hope for the new version to be significantly cheaper to make good on Nvidia’s claim that the MediaTek chips will reduce costs. Even if they do reduce costs, it remains to be seen whether monitor makers are willing to pass those savings on to consumers.

For most people most of the time, there won’t be an appreciable difference between a “true” G-Sync monitor and one that uses FreeSync or Adaptive-Sync, but there are still a few fringe benefits. G-Sync monitors support variable refresh rates anywhere between 1 Hz and the monitor’s maximum refresh rate, whereas FreeSync and Adaptive-Sync stop working on most displays when the frame rate drops below 40 or 48 frames per second. All G-Sync monitors also support “variable overdrive” technology to help eliminate display ghosting, and the new MediaTek-powered displays will support the recent “G-Sync Pulsar” feature to reduce blur.
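
To make the refresh-range difference concrete, here is a minimal Python sketch that checks whether a given frame rate falls inside a display’s VRR window. The `VrrRange` class and both example displays are hypothetical, the floor values simply reuse the figures quoted above, and the model ignores anything a real driver or scaler might do outside that window.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VrrRange:
    """Variable refresh-rate window of a display, in Hz (illustrative values only)."""
    min_hz: float
    max_hz: float

    def covers(self, fps: float) -> bool:
        """Return True if the display can match its refresh rate to this frame rate."""
        return self.min_hz <= fps <= self.max_hz


# Hypothetical 360 Hz displays; the floors follow the figures quoted in the article.
gsync_display = VrrRange(min_hz=1, max_hz=360)
adaptive_sync_display = VrrRange(min_hz=48, max_hz=360)

# Compare both displays across a few sample frame rates.
for fps in (30, 45, 60, 240):
    print(fps, gsync_display.covers(fps), adaptive_sync_display.covers(fps))
```

Under these assumptions, a game running at 30 or 45 frames per second stays inside the first display’s window but falls below the second display’s 48 Hz floor, while 60 and 240 frames per second are covered by both.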
