Amazon joins Google in investing in small modular nuclear power

Small nukes is good nukes?

What’s with the sudden interest in nuclear power among tech titans?

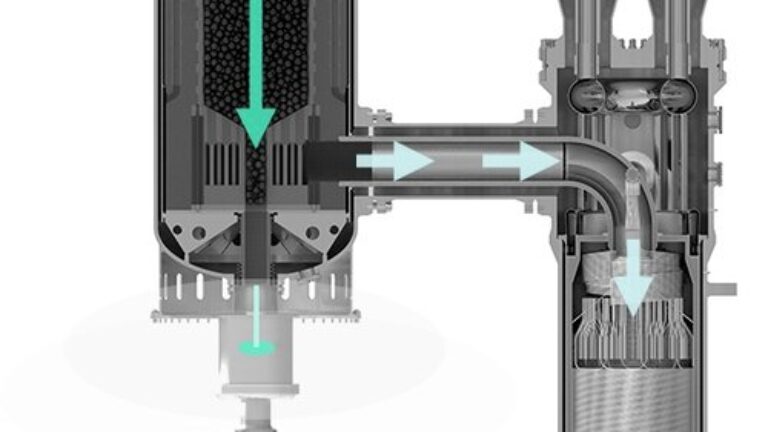

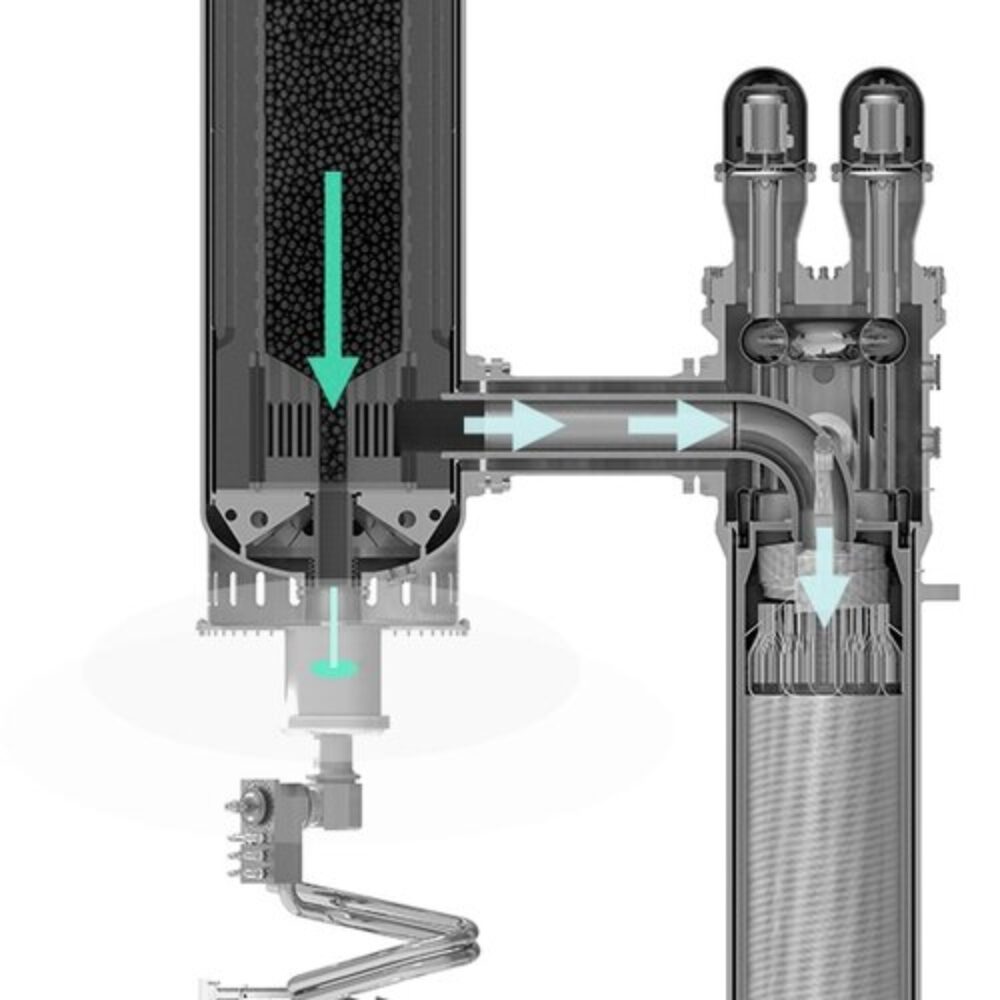

Fuel pellets flow down the reactor (left), as gas transfers heat to a boiler (right). Credit: X-energy

On Tuesday, Google announced that it had made a power purchase agreement for electricity generated by a small modular nuclear reactor design that hasn’t even received regulatory approval yet. Today, it’s Amazon’s turn. The company’s Amazon Web Services (AWS) group has announced three different investments, including one targeting a different startup that has its own design for small, modular nuclear reactors—one that has not yet received regulatory approval.

Unlike Google’s deal, which is a commitment to purchase power should the reactors ever be completed, Amazon will lay out some money upfront as part of the agreements. We’ll take a look at the deals and technology that Amazon is backing before analyzing why companies are taking a risk on unproven technologies.

Money for utilities and a startup

Two of Amazon’s deals are with utilities that serve areas where it already has a significant data center footprint. One of these is Energy Northwest, which is an energy supplier that sends power to utilities in the Pacific Northwest. Amazon is putting up the money for Energy Northwest to study the feasibility of adding small modular reactors to its Columbia Generating Station, which currently houses a single, large reactor. In return, Amazon will get the right to purchase power from an initial installation of four small modular reactors. The site could potentially support additional reactors, which Energy Northwest would be able to use to meet demands from other users.

The deal with Virginia’s Dominion Energy is similar in that it would focus on adding small modular reactors to Dominion’s existing North Anna Nuclear Generating Station. But the exact nature of the deal is a bit harder to understand. Dominion says the companies will “jointly explore innovative ways to advance SMR development and financing while also mitigating potential cost and development risks.”

Should either or both of these projects go forward, the reactor designs used will come from a company called X-energy, which is involved in the third deal Amazon is announcing. In this case, it’s a straightforward investment in the company, although the exact dollar amount is unclear (the company says Amazon is “anchoring” a $500 million round of investments). The money will help finalize the company’s reactor design and push it through the regulatory approval process.

Small modular nuclear reactors

X-energy is one of several startups attempting to develop small modular nuclear reactors. The reactors all have a few features that are expected to help them avoid the massive time and cost overruns associated with the construction of large nuclear power stations. In these small reactors, the limited size allows them to be made at a central facility and then be shipped to the power station for installation. This limits the scale of the infrastructure that needs to be built in place and allows the assembly facility to benefit from economies of scale.

This also allows a great deal of flexibility at the installation site, as you can scale the facility to power needs simply by adjusting the number of installed reactors. If demand rises in the future, you can simply install a few more.

The small modular reactors are also typically designed to be inherently safe. Should the site lose power or control over the hardware, the reactor will default to a state where it can’t generate enough heat to melt down or damage its containment. There are various approaches to achieving this.

X-energy’s technology is based on small, self-contained fuel pellets called TRISO particles for TRi-structural ISOtropic. These contain both the uranium fuel and a graphite moderator and are surrounded by a ceramic shell. They’re structured so that there isn’t sufficient uranium present to generate temperatures that can damage the ceramic, ensuring that the nuclear fuel will always remain contained.

The design is meant to run at high temperatures and extract heat from the reactor using helium; the heated helium is then used to boil water, with the resulting steam generating electricity. Each reactor can produce 80 megawatts of electricity, and the reactors are designed to work efficiently as a set of four, creating a 320 MW power plant. As of yet, however, there are no working examples of this reactor, and the design hasn’t been approved by the Nuclear Regulatory Commission.
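To make the sizing arithmetic concrete, here is a minimal sketch of how the four-reactor unit scales. The 80 MW and four-reactor figures come from the description above; the function names and the 1,000 MW demand figure are hypothetical, chosen only for illustration, and are not drawn from X-energy or Amazon.

```python
import math

REACTOR_MW = 80         # electrical output per reactor, as stated in the article
REACTORS_PER_SET = 4    # reactors are designed to operate as a set of four


def plant_capacity_mw(reactors: int = REACTORS_PER_SET) -> int:
    """Total electrical output for a given number of reactors."""
    return reactors * REACTOR_MW


def sets_needed(demand_mw: float) -> int:
    """Smallest number of four-reactor sets that covers a given demand."""
    return math.ceil(demand_mw / plant_capacity_mw())


print(plant_capacity_mw())   # 4 reactors x 80 MW = 320 MW
print(sets_needed(1000))     # hypothetical 1,000 MW demand -> 4 sets
```

The calculation ignores capacity factor, maintenance outages, and transmission losses, so it is an upper bound on what a given number of reactors could deliver rather than a planning estimate.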

Why now?

Why is there such sudden interest in small modular reactors among the tech community? It comes down to growing needs and a lack of good alternatives, even given the highly risky nature of the startups that hope to build the reactors.

It’s no secret that data centers require enormous amounts of energy, and the sudden popularity of AI threatens to raise that demand considerably. Renewables, as the cheapest source of power on the market, would be one way of satisfying that growth, but they’re not ideal. For one thing, the intermittent nature of the power they supply, while possible to manage at the grid level, is a bad match for the around-the-clock demands of data centers.

The US has also benefitted from over a decade of efficiency gains keeping demand flat despite population and economic growth. This has meant that all the renewables we’ve installed have displaced fossil fuel generation, helping keep carbon emissions in check. Should newly installed renewables instead end up servicing rising demand, it will make it considerably more difficult for many states to reach their climate goals.

Finally, renewable installations have often been built in areas without dedicated high-capacity grid connections, resulting in a large and growing backlog of projects (2.6 TW of generation and storage as of 2023) that are stalled as they wait for the grid to catch up. Expanding the pace of renewable installation can’t meet rising server farm demand if the power can’t be brought to where the servers are.

These new projects avoid that problem because they’re targeting sites that already have large reactors and grid connections to use the electricity generated there.

In some ways, it would be preferable to build more of these large reactors based on proven technologies. But not in two very important ways: time and money. The last reactor completed in the US was at the Vogtle site in Georgia, which started construction in 2009 but only went online this year. Costs also increased from $14 billion to over $35 billion during construction. It’s clear that any similar projects would start generating far too late to meet the near-immediate needs of server farms and would be nearly impossible to justify economically.

This leaves small modular nuclear reactors as the least-bad option in a set of bad options. Despite many startups having entered the space over a decade ago, there is still just a single reactor design approved in the US, that of NuScale. But the price of the power that its first planned installation would sell rose to the point where it was no longer economically viable, given the plunge in the cost of renewable power; the project was canceled last year as the utilities that would have bought the power pulled out.

The probability that a different company will manage to get a reactor design approved, move to construction, and get something built before the end of the decade is extremely low. The chance that it will be able to sell power at a competitive price is also very low, though that may change if demand rises sufficiently. So the fact that Amazon is making some extremely risky investments indicates just how worried it is about its future power needs. Of course, when your gross profit is over $250 billion a year, you can afford to take some risks.

John is Ars Technica’s science editor. He has a Bachelor of Arts in Biochemistry from Columbia University, and a Ph.D. in Molecular and Cell Biology from the University of California, Berkeley. When physically separated from his keyboard, he tends to seek out a bicycle, or a scenic location for communing with his hiking boots.
