OpenAI collapses media reality with Sora, a photorealistic AI video generator

Pics and it didn’t happen —

Hello, cultural singularity—soon, every video you see online could be completely fake.

Snapshots from three videos generated using OpenAI’s Sora.

On Thursday, OpenAI announced Sora, a text-to-video AI model that can generate 60-second-long photorealistic HD video from written descriptions. While it’s only a research preview that we have not tested, it reportedly creates synthetic video (but not audio yet) at a fidelity and consistency greater than any text-to-video model available at the moment. It’s also freaking people out.

“It was nice knowing you all. Please tell your grandchildren about my videos and the lengths we went to to actually record them,” wrote Wall Street Journal tech reporter Joanna Stern on X.

“This could be the ‘holy shit’ moment of AI,” wrote Tom Warren of The Verge.

“Every single one of these videos is AI-generated, and if this doesn’t concern you at least a little bit, nothing will,” tweeted YouTube tech journalist Marques Brownlee.

For future reference—since this type of panic will some day appear ridiculous—there’s a generation of people who grew up believing that photorealistic video must be created by cameras. When video was faked (say, for Hollywood films), it took a lot of time, money, and effort to do so, and the results weren’t perfect. That gave people a baseline level of comfort that what they were seeing remotely was likely to be true, or at least representative of some kind of underlying truth. Even when the kid jumped over the lava, there was at least a kid and a room.

The prompt that generated the video above: “A movie trailer featuring the adventures of the 30 year old space man wearing a red wool knitted motorcycle helmet, blue sky, salt desert, cinematic style, shot on 35mm film, vivid colors.”

Technology like Sora pulls the rug out from under that kind of media frame of reference. Very soon, every photorealistic video you see online could be 100 percent false in every way. Moreover, every historical video you see could also be false. How we confront that as a society and work around it while maintaining trust in remote communications is far beyond the scope of this article, but I tried my hand at offering some solutions back in 2020, when all of the tech we’re seeing now seemed like a distant fantasy to most people.

In that piece, I called the moment that truth and fiction in media become indistinguishable the “cultural singularity.” It appears that OpenAI is on track to bring that prediction to pass a bit sooner than we expected.

Prompt: Reflections in the window of a train traveling through the Tokyo suburbs.

OpenAI has found that, like other AI models that use the transformer architecture, Sora scales with available compute. Given far more powerful computers behind the scenes, AI video fidelity could improve considerably over time. In other words, this is the “worst” that AI-generated video is ever going to look. There’s no synchronized sound yet, but that might be solved in future models.

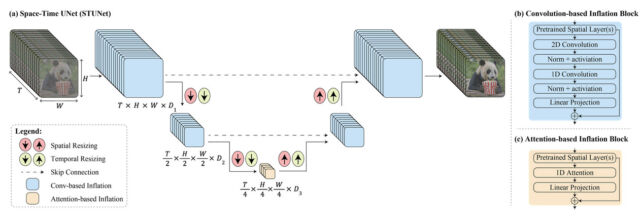

How (we think) they pulled it off

AI video synthesis has progressed by leaps and bounds over the past two years. We first covered text-to-video models in September 2022 with Meta’s Make-A-Video. A month later, Google showed off Imagen Video. And just 11 months ago, an AI-generated version of Will Smith eating spaghetti went viral. In May of last year, what was previously considered to be the front-runner in the text-to-video space, Runway Gen-2, helped craft a fake beer commercial full of twisted monstrosities, generated in two-second increments. In earlier video-generation models, people pop in and out of reality with ease, limbs flow together like pasta, and physics doesn’t seem to matter.

Sora (which means “sky” in Japanese) appears to be something altogether different. It’s high-resolution (1920×1080), can generate video with temporal consistency (maintaining the same subject over time) that lasts up to 60 seconds, and appears to follow text prompts with a great deal of fidelity. So, how did OpenAI pull it off?

OpenAI doesn’t usually share insider technical details with the press, so we’re left to speculate based on theories from experts and information given to the public.

OpenAI says that Sora is a diffusion model, much like DALL-E 3 and Stable Diffusion. It generates a video by starting off with noise, which it “gradually transforms it by removing the noise over many steps,” the company explains. It “recognizes” objects and concepts listed in the written prompt and pulls them out of the noise, so to speak, until a coherent series of video frames emerges.
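To make that denoising loop concrete, here is a toy sketch in Python. It is not Sora's algorithm: the `toy_denoiser` function is a hypothetical stand-in for a trained network and simply returns a known target, whereas a real model would have to infer the clean signal from the noisy input and the prompt. The update rule (close 1/t of the remaining gap at step t) is likewise an assumption chosen for simplicity.

```python
import random


def toy_denoiser(noisy, prompt_target):
    """Hypothetical stand-in for a trained network.

    A real diffusion model would estimate the clean signal from the noisy
    input and the text prompt. This toy version cheats and returns a known
    target so the loop structure stays easy to follow.
    """
    return list(prompt_target)


def generate(target, steps=10, seed=0):
    """Start from pure noise and refine it over a fixed number of steps."""
    rng = random.Random(seed)
    # Step 0: the "video" is nothing but Gaussian noise.
    x = [rng.gauss(0.0, 1.0) for _ in target]
    errors = []
    for t in range(steps, 0, -1):
        guess = toy_denoiser(x, target)
        # Remove part of the remaining noise: close 1/t of the gap to the
        # guess, so the final step (t == 1) lands on the guess itself.
        x = [xi + (gi - xi) / t for xi, gi in zip(x, guess)]
        errors.append(max(abs(xi - ti) for xi, ti in zip(x, target)))
    return x, errors


# A five-value "signal" stands in for the pixels of a video.
target = [0.0, 0.5, 1.0, 0.5, 0.0]
sample, errors = generate(target)
```

Printing `errors` shows the gap to the target shrinking at every step, which mirrors the start-from-static, refine-repeatedly pattern OpenAI describes.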

Sora is capable of generating videos all at once from a text prompt, extending existing videos, or generating videos from still images. It achieves temporal consistency by giving the model “foresight” of many frames at once, as OpenAI calls it, solving the problem of ensuring a generated subject remains the same even if it falls out of view temporarily.

OpenAI represents video as collections of smaller groups of data called “patches,” which the company says are similar to tokens (fragments of a word) in GPT-4. “By unifying how we represent data, we can train diffusion transformers on a wider range of visual data than was possible before, spanning different durations, resolutions, and aspect ratios,” the company writes.
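As a rough illustration of the patch idea, the sketch below cuts a tiny video (indexed as `video[t][y][x]`) into fixed-size blocks spanning space and time, then flattens each block into a token-like list. The function names, the 2×2×2 patch size, and the integer "pixels" are all assumptions made for this example; OpenAI has not published its actual patching code.

```python
def patchify(video, pt, ph, pw):
    """Split video[t][y][x] into flat patches of pt * ph * pw values each."""
    frames, height, width = len(video), len(video[0]), len(video[0][0])
    if frames % pt or height % ph or width % pw:
        raise ValueError("video dimensions must be divisible by the patch size")
    patches = []
    for t0 in range(0, frames, pt):
        for y0 in range(0, height, ph):
            for x0 in range(0, width, pw):
                # Flatten one block of frames x rows x columns into a list.
                patch = [
                    video[t][y][x]
                    for t in range(t0, t0 + pt)
                    for y in range(y0, y0 + ph)
                    for x in range(x0, x0 + pw)
                ]
                patches.append(patch)
    return patches


def make_video(frames, height, width):
    """Build a dummy video whose pixel values are unique integers."""
    return [
        [[t * height * width + y * width + x for x in range(width)]
         for y in range(height)]
        for t in range(frames)
    ]


# Two clips with different durations and aspect ratios...
short_wide = patchify(make_video(4, 4, 8), 2, 2, 2)
long_tall = patchify(make_video(8, 6, 4), 2, 2, 2)
# ...become sequences of different lengths, built from same-sized patches.
```

Here `short_wide` has 16 patches and `long_tall` has 24, but every patch holds the same 8 values. That uniformity is what would let a single model consume clips of varying length, resolution, and shape as plain sequences, much as a language model consumes sentences of varying length.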

An important tool in OpenAI’s bag of tricks is compounding: earlier AI models help create more complex ones. Sora follows prompts well because, like DALL-E 3, it utilizes synthetic captions, generated by another AI model like GPT-4V, that describe scenes in the training data. And the company is not stopping here. “Sora serves as a foundation for models that can understand and simulate the real world,” OpenAI writes, “a capability we believe will be an important milestone for achieving AGI.”

One question on many people’s minds is what data OpenAI used to train Sora. OpenAI has not revealed its dataset, but based on what people are seeing in the results, it’s possible OpenAI is using synthetic video data generated in a video game engine in addition to sources of real video (say, scraped from YouTube or licensed from stock video libraries). Nvidia’s Dr. Jim Fan, who is a specialist in training AI with synthetic data, wrote on X, “I won’t be surprised if Sora is trained on lots of synthetic data using Unreal Engine 5. It has to be!” Until confirmed by OpenAI, however, that’s just speculation.
