Inside Nvidia’s 10-year effort to make the Shield TV the most updated Android device ever

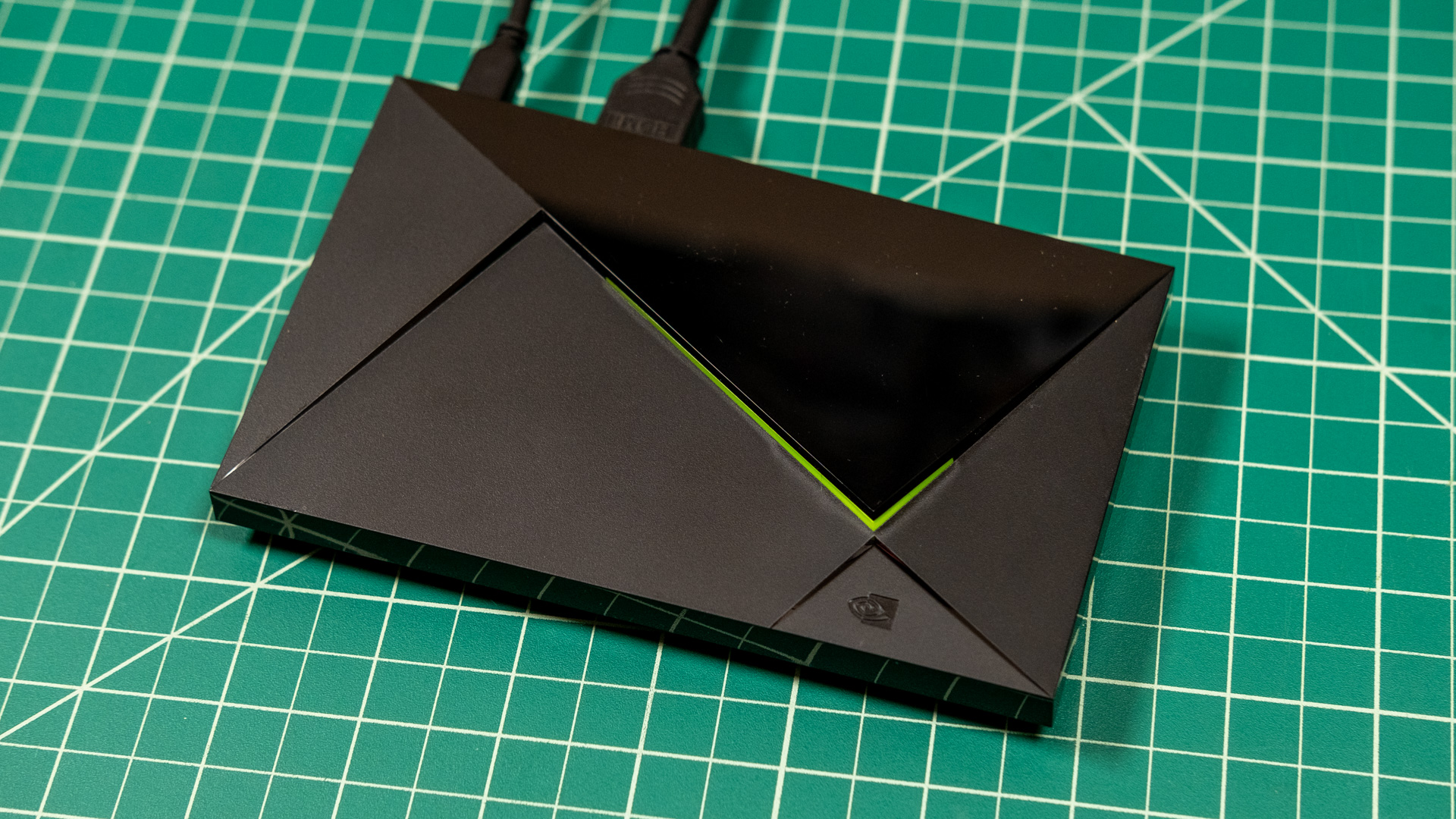

It took Android device makers a very long time to commit to long-term update support. Samsung and Google have only recently decided to offer seven years of updates for their flagship Android devices, but a decade ago, you were lucky to get more than one or two updates on even the most expensive Android phones and tablets. How is it, then, that an Android-powered set-top box from 2015 is still going strong?

Nvidia released the first Shield Android TV in 2015, and according to the company’s senior VP of hardware engineering, Andrew Bell, supporting these devices has been a labor of love. And the team at Nvidia still loves the Shield. Bell assures us that Nvidia has never given up, even when it looked like support for the Shield was waning, and it doesn’t plan to stop any time soon.

The soul of Shield

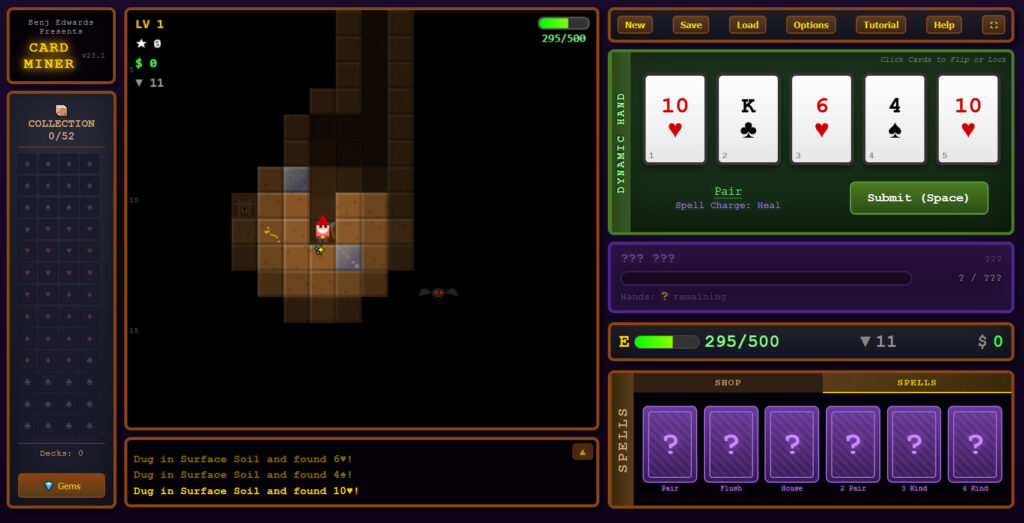

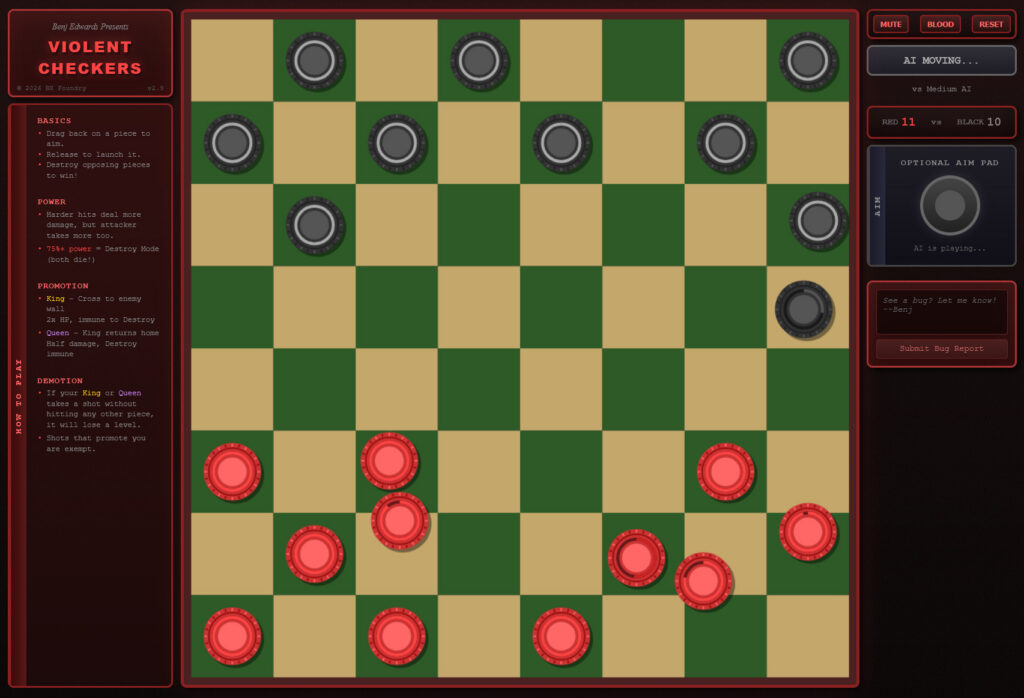

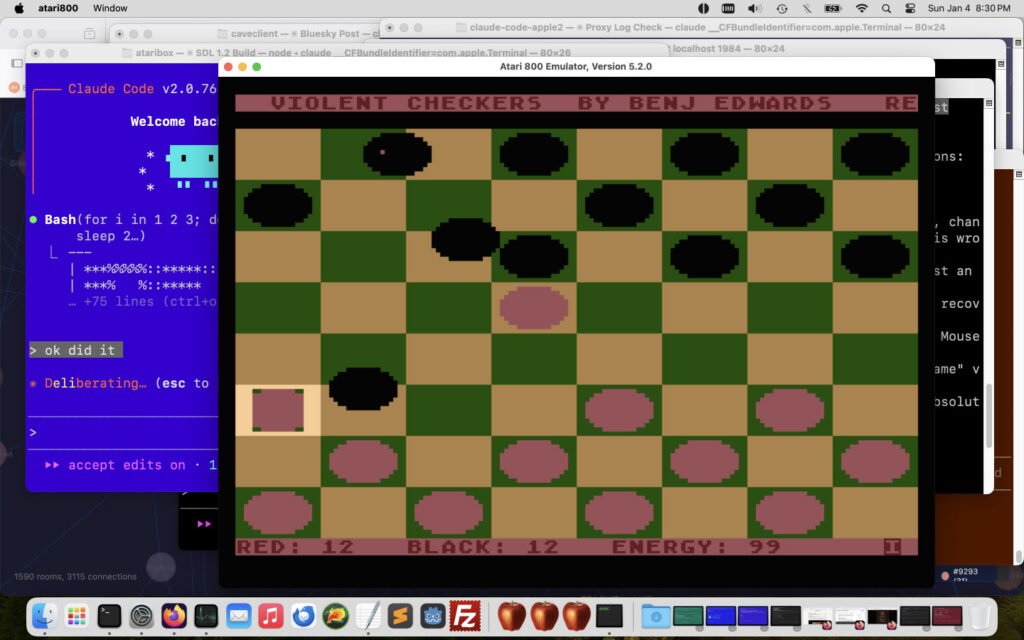

Gaming has been central to Nvidia since its start, and that focus gave rise to the Shield. “Pretty much everybody who worked at Nvidia in the early days really wanted to make a game console,” said Bell, who has worked at the company for 25 years.

However, Nvidia didn’t have what it needed back then. Before gaming, crypto, and AI turned it into the multi-trillion-dollar powerhouse it is today, Nvidia had a startup mentality and the budget to match. When Shield devices began percolating in the company’s labs, they were seen as an important way to gain experience with “full-stack” systems and all the complications that arise when managing them.

“To build a game console was pretty complicated because, of course, you have to have a GPU, which we know how to make,” Bell explained. “But in addition to that, you need a CPU, an OS, games, and you need a UI.”

Through acquisitions and partnerships, the pieces of Nvidia’s fabled game console slowly fell into place. The purchase of PortalPlayer in 2007 brought the CPU technology that would become the Tegra Arm chips, and the company’s surging success in GPUs gave it the partnerships it needed to get games. But the UI was still missing, and that didn’t change until Google expanded Android to the TV in 2014. Nvidia’s first Android mobile efforts were already out there in the form of the Shield Portable and Shield Tablet, but the TV-connected box is what Nvidia really wanted.

“Selfishly, a little bit, we built Shield for ourselves,” Bell told Ars Technica. “We actually wanted a really good TV streamer that was high-quality and high-performance, and not necessarily in the Apple ecosystem. We built some prototypes, and we got so excited about it. [CEO Jensen Huang] was like, ‘Why don’t we bring it out and sell it to people?’”

The first Shield box in 2015 had a heavy gaming focus, with a raft of both local and cloud-based (GeForce Now) games. The base model included only a game controller, with the remote control sold separately. According to Bell, Nvidia eventually recognized that the gaming angle wasn’t as popular as it had hoped. The 2017 and 2019 Shield refreshes were more focused on the streaming experience.

“Eventually, we kind of said, ‘Maybe the soul is that it’s a streamer for gamers,’” said Bell. “We understand gamers from GeForce, and we understand they care about quality and performance. A lot of these third-party devices like tablets, they’re going cheap. Set-top boxes, they’re going cheap. But we were the only company that was like, ‘Let’s go after people who really want a premium experience.’”

Nvidia used to sell Shield-branded game controllers. Credit: Ryan Whitwam

And premium it is, offering audio and video support far beyond what you find in other TV boxes, even years after release. The Shield TV started at $200 in 2015, and that’s still what you’ll pay for the Pro model to this day. However, Bell notes that passion was the driving force behind bringing the Shield TV to market. The team didn’t know if it would make money, and indeed, the company lost money on every unit sold during the original production run. The 2017 and 2019 refreshes were about addressing that while also emphasizing the Shield’s streaming media chops.

A passion for product support

Update support for Internet-connected devices is vital—whether they’re phones, tablets, set-top boxes, or something else. When updates cease, gadgets fall out of sync with platform features, leading to new bugs (which will never be fixed) and security holes that can affect safety and functionality. The support guarantee attached to a device is basically its expiration date.

“We were all frustrated as buyers of phones and tablets that you buy a device, you get one or two updates, and that’s it!” said Bell. “Early on when we were building Shield TV, we decided we were going to make it for a long time. Jensen and I had a discussion, and it was, ‘How long do we want to support this thing?’ And Jensen said, ‘For as long as we shall live.’”

In 2025, Nvidia wrapped up its tenth year of supporting the Shield platform. Even those original 2015 boxes are still being maintained with bug fixes and the occasional new feature. They’ve gone all the way from Android 5.0 to Android 11 in that time. No Android device—not a single phone, tablet, watch, or streaming box—has gotten anywhere close to this level of support.

The best example of Nvidia’s passion for support is, believe it or not, a two-year gap in updates.

Across the dozens of Shield TV updates, there have been a few times when fans feared Nvidia was done with the box. Most notably, there were no public updates for the Shield TV in 2023 or 2024, but over-the-air updates resumed in 2025.

“On the outside, it looked like we went quiet, but it’s actually one of our bigger development efforts,” explained Bell.

The origins of that effort, surprisingly, stretch back years to the launch of the Nintendo Switch. The Shield runs Nvidia’s custom Tegra X1 Arm chip, the same processor Nintendo chose to power the original Switch in 2017. Soon after release, modders discovered a chip flaw that could bypass Nintendo’s security measures, enabling homebrew (and piracy). An updated Tegra X1 chip (also used in the 2019 Shield refresh) fixed that for Nintendo, but Nvidia’s 2015 and 2017 Shield boxes ran the same exploitable version.

Initially, Nvidia was able to roll out periodic patches to protect against the vulnerability, but by 2023, the Shield needed something more. Around that time, owners of 2015 and 2017 Shield boxes had noticed that DRM-protected 4K content often failed to play—that was thanks to the same bug that affected the Switch years earlier.

With a newer, non-vulnerable product on the market, many companies might have just accepted that the older product would lose functionality, but Nvidia’s passion for Shield remained. Bell consulted Huang, whom he calls Shield customer No. 1, about the meaning of his “as long as we shall live” pledge, and the team was approved to spend whatever time was needed to fix the vulnerability on the first two generations of Shield TV.

According to Bell, it took about 18 months to get there, requiring the creation of an entirely new security stack. He explains that Android updates aren’t actually that much work compared to DRM security, and some of Nvidia’s partners weren’t that keen on re-certifying older products. The Shield team fought for it because they felt, as they had throughout the product’s run, that they’d made a promise to customers who expected the box to have certain features.

In February 2025, Nvidia released Shield Patch 9.2, the first wide release in two years. The changelog included an unassuming line reading, “Added security enhancement for 4K DRM playback.” That was the Tegra X1 bug finally being laid to rest on the 2015 and 2017 Shield boxes.

The refreshed Tegra X1+ in the 2019 Shield TV spared it from those DRM issues, and Nvidia still hasn’t stopped working on that chip. The Tegra X1 was blazing fast in 2015, and it’s still quite capable compared to your average smart TV today. The chip has actually outlasted several of the components needed to manufacture it. For example, when the Tegra chip’s memory was phased out, the team immediately began work on qualifying a new memory supplier. To this day, Nvidia is still iterating on the Tegra X1 platform, supporting the Shield’s continued updates.

“If operations calls me and says they just ran out of this component, I’ve got engineers on it tonight looking for a new component,” Bell said.

The future of Shield

Nvidia has put its money where its mouth is by supporting all versions of the Shield for so long. But it’s been over six years since we’ve seen new hardware. Surely the Shield has to be running out of steam, right?

Not so, says Bell. Nvidia still manufactures the 2019 Shield because people are still buying it. In fact, the sales volume has remained basically unchanged for the past 10 years. The Shield Pro is a spendy set-top box at $200, so Nvidia has experimented with pricing and promotion with little effect. The 2019 non-Pro Shield was one such effort. The base model was originally priced at $99, but the MSRP eventually landed at $150.

“No matter how much we dropped the price or how much we market or don’t market it, the same number of people come out of the woodwork every week to buy Shield,” Bell explained.

Nvidia had no choice but to put that giant Netflix button on the remote. Credit: Ryan Whitwam

That kind of consistency isn’t lost on Nvidia. Bell says the company has no plans to stop production or updates for the Shield “any time soon.” It’s also still possible that Nvidia could release new Shield TV hardware in the future. Nvidia’s Shield devices came about as a result of engineers tinkering with new concepts in a lab setting, but most of those experiments never see the light of day. For example, Bell notes that the team produced several updated versions of the Shield Tablet and Shield Portable (some of which you can find floating around on eBay) that never got a retail release, and they continue to work on Shield TV.

“We’re always playing in the labs, trying to discover new things,” said Bell. “We’ve played with new concepts for Shield and we’ll continue to play, and if we find something we’re super-excited about, we’ll probably make a go of it.”

But what would that look like? Video technology has advanced since 2019, leaving the Shield unable to take full advantage of some newer formats. First up would be support for VP9 Profile 2 hardware decoding, which enables HDR video on YouTube. Bell says a refreshed Shield would also prioritize formats like AV1 and the HDR10+ standard, as well as support for newer Dolby Vision profiles for people with backed-up media.

And then there’s the enormous, easy-to-press-by-accident Netflix button on the remote. While adding new video technologies would be job one, fixing the Netflix button is No. 2 for a theoretical new Shield. According to Bell, Nvidia doesn’t receive any money from Netflix for the giant button on its remote. It’s actually there as a requirement of Netflix’s certification program, which was “very strong” in 2019. In a refresh, he thinks Nvidia could get away with a smaller “N” button. We can only hope.

But does Bell think he’ll get a chance to build that new Shield TV, shrunken Netflix button and all? He stopped short of predicting the future, but there’s definitely interest.

“We talk about it all the time—I’d love to,” he said.

Ryan Whitwam is a senior technology reporter at Ars Technica, covering the ways Google, AI, and mobile technology continue to change the world. Over his 20-year career, he’s written for Android Police, ExtremeTech, Wirecutter, NY Times, and more. He has reviewed more phones than most people will ever own. You can follow him on Bluesky, where you will see photos of his dozens of mechanical keyboards.