Musk fails to block California data disclosure law he fears will ruin xAI

Musk can’t convince judge public doesn’t care about where AI training data comes from.

Elon Musk’s xAI has lost its bid for a preliminary injunction that would have temporarily blocked California from enforcing a law that requires AI firms to publicly share information about their training data.

xAI had tried to argue that California’s Assembly Bill 2013 (AB 2013) forced AI firms to disclose carefully guarded trade secrets.

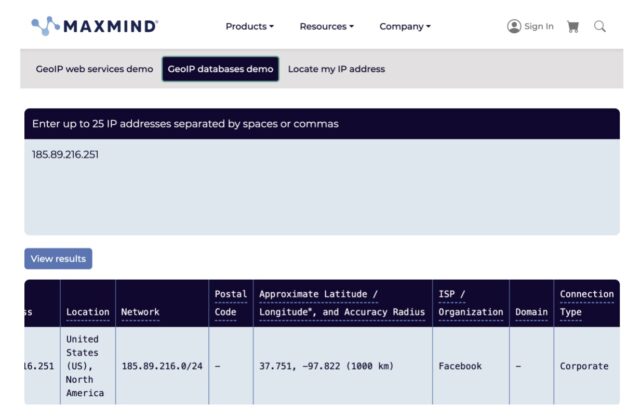

The law requires AI developers whose models are accessible in the state to clearly explain which dataset sources were used to train models, when the data was collected, whether the collection is ongoing, and whether the datasets include any data protected by copyrights, trademarks, or patents. Disclosures would also clarify whether companies licensed or purchased training data and whether the training data included any personal information. They would also help consumers assess how much synthetic data was used to train the model, which could serve as a measure of quality.

However, this information is precisely what makes xAI valuable, the company argued, with its intensive data sourcing supposedly setting it apart from its biggest rivals. Allowing enforcement could be “economically devastating” to xAI, effectively reducing “the value of xAI’s trade secrets to zero,” the complaint said. Further, xAI insisted, these disclosures “cannot possibly be helpful to consumers” while supposedly posing a real risk of gutting the entire AI industry.

Specifically, xAI argued that its dataset sources, dataset sizes, and cleaning methods were all trade secrets.

“If competitors could see the sources of all of xAI’s datasets or even the size of its datasets, competitors could evaluate both what data xAI has and how much they lack,” xAI argued. In one hypothetical, xAI speculated that “if OpenAI (another leading AI company) were to discover that xAI was using an important dataset to train its models that OpenAI was not, OpenAI would almost certainly acquire that dataset to train its own model, and vice versa.”

However, in an order issued on Wednesday, US District Judge Jesus Bernal said that xAI failed to show that California’s law, which took effect in January, required the company to reveal any trade secrets.

xAI’s biggest problem was being too vague about the harms it faced if the law was not halted, the judge said. Instead of explaining why the disclosures could directly harm xAI, the company offered only “a variety of general allegations about the importance of datasets in developing AI models and why they are kept secret,” Bernal wrote, describing xAI as trading in “frequent abstractions and hypotheticals.”

He denied xAI’s motion for a preliminary injunction while supporting the government’s interest in helping the public assess how the latest AI models were trained.

The lawsuit will continue, but xAI will have to comply with California’s law in the meantime. That could see Musk sharing information he’d rather OpenAI had no knowledge of at a time when he’s embroiled in several lawsuits against the leading AI firm he now regrets helping to found.

While not ending the fight to keep OpenAI away from xAI’s training data, this week’s ruling is another defeat for Musk after a judge last month tossed one of his OpenAI lawsuits, ruling that Musk had no proof that OpenAI had stolen trade secrets.

xAI argued California wants to silence Grok

xAI’s complaint argued that California’s law was unconstitutional since data can be considered a trade secret protected under the Fifth Amendment. The company also argued that the state was trying to regulate the outputs of xAI’s controversial chatbot, Grok, and was unfairly compelling speech from xAI while exempting other firms for security purposes.

At this stage of the litigation, Bernal disagreed that xAI might be irreparably harmed if the law was not halted.

On the Fifth Amendment claim, the judge said it’s not that training data could never be considered a trade secret. It’s just that xAI “has not identified any dataset or approach to cleaning and using datasets that is distinct from its competitors in a manner warranting trade secret protection.”

“It is not lost on the Court the important role of datasets in AI training and development, and that, hypothetically, datasets and details about them could be trade secrets,” Bernal wrote. But xAI “has not alleged that it actually uses datasets that are unique, that it has meaningfully larger or smaller datasets than competitors, or that it cleans its datasets in unique ways.”

Therefore, xAI is not likely to succeed on the merits of its Fifth Amendment claim.

The same goes for First Amendment arguments. xAI failed to show that the law improperly “forces developers to publicly disclose their data sources in an attempt to identify what California deems to be ‘data riddled with implicit and explicit biases,’” Bernal wrote.

xAI argued that the state appeared to be trying to use the law to influence the outputs of its chatbot, Grok, which the company said should be protected commercial speech.

Over the past year, Grok has increasingly drawn global public scrutiny for its antisemitic rants and for generating nonconsensual intimate imagery (NCII) and child sexual abuse materials (CSAM). But despite these scandals, which prompted a California probe, Bernal contradicted xAI, saying California did not appear to be trying to regulate controversial or biased outputs, as xAI feared.

“Nothing in the language of the statute suggests that California is attempting to influence Plaintiff’s models’ outputs by requiring dataset disclosure,” Bernal wrote.

Addressing xAI’s other speech concerns, he noted that “the statute does not functionally ask Plaintiff to share its opinions on the role of certain datasets in AI model development or make ideological statements about the utility of various datasets or cleaning methods.”

“No part of the statute indicates any plan to regulate or censor models based on the datasets with which they are developed and trained,” Bernal wrote.

Public “cannot possibly” care about AI training data

Perhaps most frustrating for xAI as it continues to fight to block the law, Bernal also disputed that the public had no interest in the training data disclosures.

“It strains credulity to essentially suggest that no consumer is capable of making a useful evaluation of Plaintiff’s AI models by reviewing information about the datasets used to train them and that therefore there is no substantial government interest advanced by this disclosure statute,” Bernal wrote.

He noted that the law simply requires companies to alert the public about information that can feasibly be used to weigh whether they want to use one model over another.

Nothing about the required disclosures is inherently political, the judge suggested, although some consumers might select or avoid certain models with perceived political biases. As an example, Bernal opined that consumers may want to know “if certain medical data or scientific information was used to train a model” to decide if they can trust the model “to be sufficiently comprehensively trained and reliable for the consumer’s purposes.”

“In the marketplace of AI models, AB 2013 requires AI model developers to provide information about training datasets, thereby giving the public information necessary to determine whether they will use—or rely on information produced by—Plaintiff’s model relative to the other options on the market,” Bernal wrote.

Moving forward, xAI seems to face an uphill battle to win this fight. It will need to gather more evidence to demonstrate that its datasets or cleaning methods are sufficiently unique to be considered trade secrets that give the company a competitive edge.

It will also likely have to deepen its arguments that consumers don’t care about disclosures and that the government has not explored less burdensome alternatives that could “achieve the goal of transparency for consumers,” Bernal suggested.

One possible path to a win could be proving that California’s law is so vague that it potentially puts xAI on the hook for disclosing its customers’ training data for individual Grok licenses. But Bernal emphasized that xAI “must actually face such a conundrum—rather than raising an abstract possible issue among AI systems developers—for the Court to make a determination on this issue.”

xAI did not respond to Ars’ request for comment.

A spokesperson for the California Department of Justice told Reuters that the department “celebrates this key win and remains committed to continuing our defense” of the law.
