I added a ratgdo to my garage door, and I don’t know why I waited so long

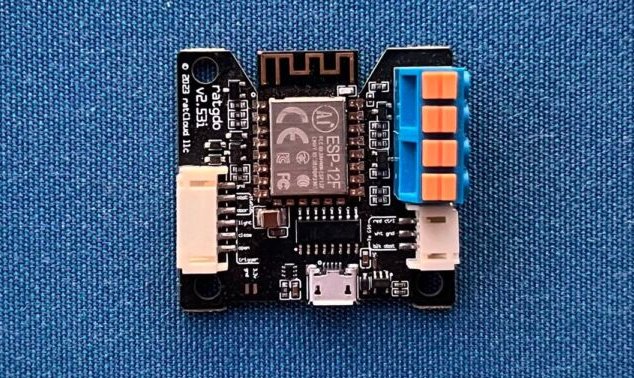

Enlarge / A ratgdo, version 2.53i.

I live in suburbia, which means I’ve got a garage (or a carhole, if you’re not so fancy). It’s a detached garage, so part of my nightly routine when I check to make sure the house is all locked up is to peek out the back window. I like to know the garage door is closed and our cars are tucked in safely.

But actually looking out a window with my stupid analog eyeballs is lame, so I figured I could make things easier by adding some smarts to my garage. The first thing I did was use this fellow’s instructions (the original site is sadly offline, but the Wayback Machine is forever) to cobble together a Raspberry Pi-based solution that would fire off an email every time the garage door opened or closed. I couldn’t remotely open or close the door from inside the house myself (well, I mean, I could with the actual garage door opener remote control), but I could just glance at my inbox to see if the garage door was open or shut in the evenings.

This worked great for a couple of years, until Texas summers murdered the poor Pi. (This was possibly my fault, too, because of the PoE hat that I’d slapped onto the Pi, which resulted in extra heat.) So, I was back to peeking out my window to check on the garage in the evenings. Like a sucker.

There had to be a better way.

Insultingly, offensively awful OEM solutions

I had just two requirements in my search for that better way. First, whatever automation solution I settled on had to be compatible with my garage door opener. Secondly, anything I looked at needed to interoperate with Apple’s HomeKit, my preferred home automation ecosystem.

The first thing I looked at—and quickly discarded—was using my garage door opener’s built-in automation functionality. My particular garage door opener is a LiftMaster, which means that it’s part of a big group of garage door opener brands under the “Chamberlain” banner. The OEM-sanctioned way to do what I want, therefore, is to use Chamberlain’s “MyQ” solution, which—and I am being generous here—is total garbage.

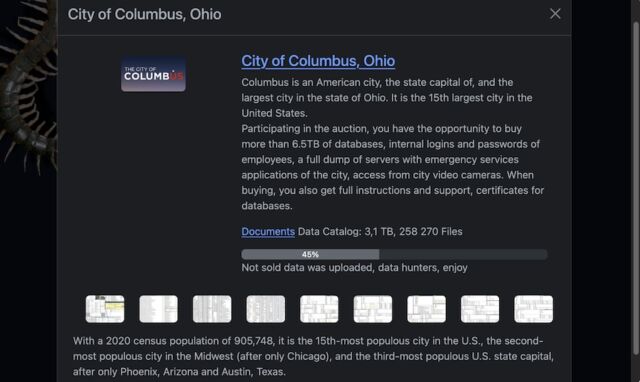

MyQ requires an accessory the company doesn’t sell or support anymore in order to hook into HomeKit, and Chamberlain would really, really, really like you to install their adds-nothing-of-value-to-me app in order to actually control things—likely so they can have a shot at collecting and monetizing my personal and/or behavioral data. (To be clear, I have no proof that that’s what they’d do with personal data, but monetizing and selling it would definitely be playing to type.) Given that the Chamberlain Group is owned by a big value-removing private equity firm with a history of poor stewardship over personal data, this all tracks.

Enlarge / A snippet from the MyQ privacy page. It’s about as gross as you might expect.

That’s gonna be a “no” from me, dawg. I’d rather jam bamboo under my fingernails than install Chamberlain’s worthless app just for the privilege of controlling an accessory in my own home while facing the potential risk of having my personal information sold to enrich some vampire capitalists.

So what else to do?

I added a ratgdo to my garage door, and I don’t know why I waited so long Read More »