AI #145: You’ve Got Soul

The cycle of language model releases is, one at least hopes, now complete.

OpenAI gave us GPT-5.1 and GPT-5.1-Codex-Max.

xAI gave us Grok 4.1.

Google DeepMind gave us Gemini 3 Pro and Nana Banana Pro.

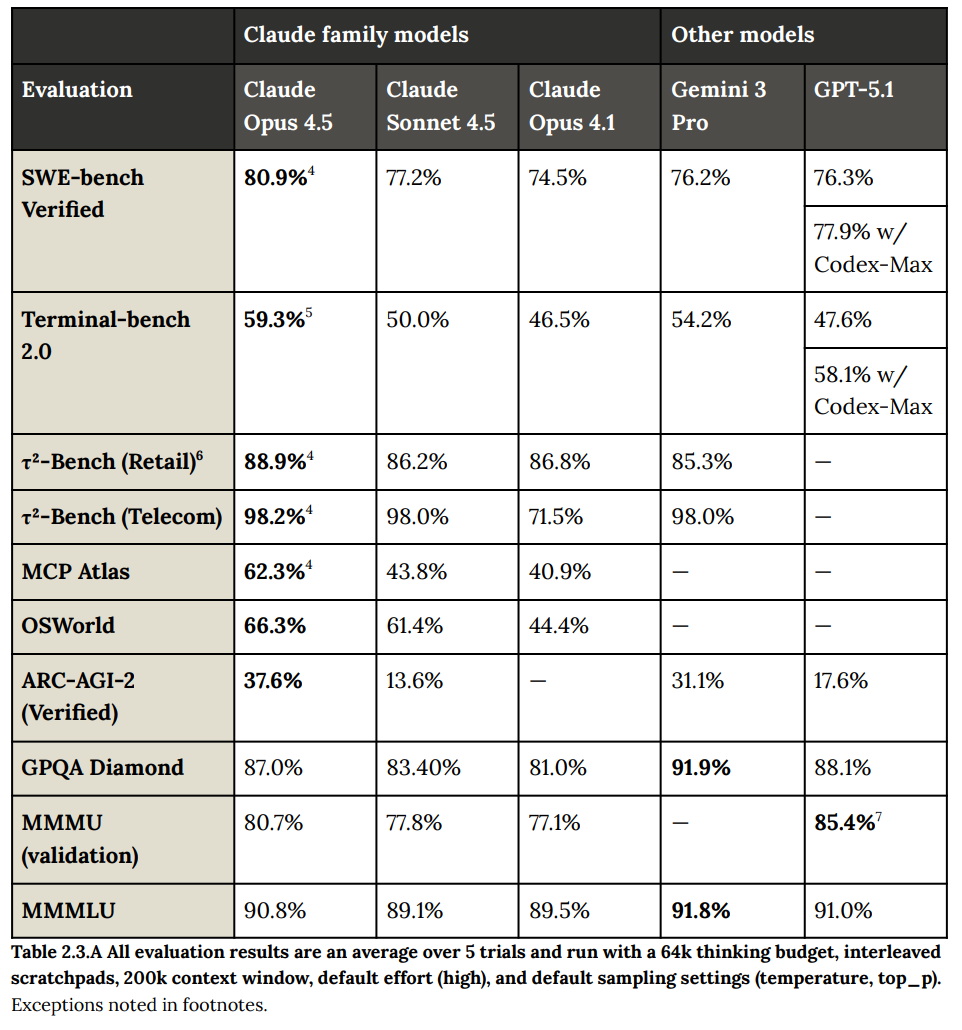

Anthropic gave us Claude Opus 4.5. It is the best model, sir. Use it whenever you can.

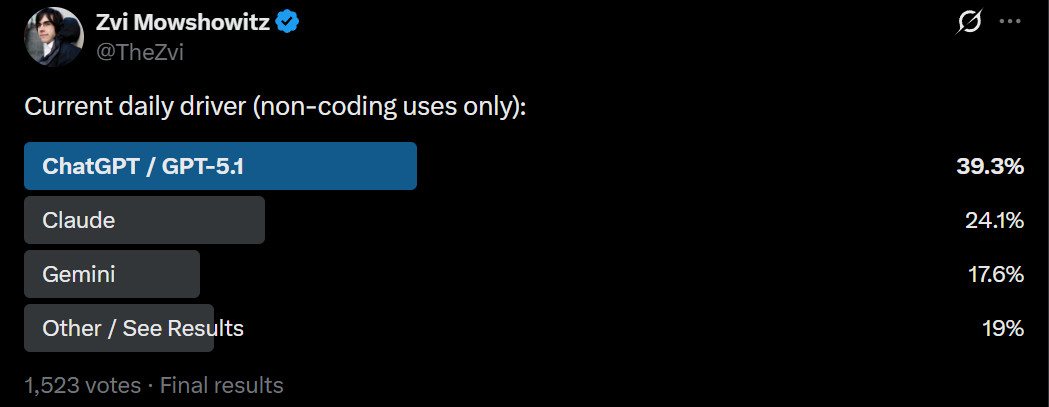

One way Opus 4.5 is unique is that it as what it refers to as a ‘soul document.’ Where OpenAI tries to get GPT-5.1 to adhere to its model spec that lays out specific behaviors, Anthropic instead explains to Claude Opus 4.5 how to be virtuous and the reasoning behind its rules, and lets a good model and good governance flow from there. The results are excellent, and we all look forward to learning more. See both the Opus 4.5 post and today’s update for more details.

Finally, DeepSeek gave us v3.2. It has very good benchmarks and is remarkably cheap, but it is slow and I can’t find people excited to use it in practice. I’ll offer a relatively short report on it tomorrow, I am giving one last day for more reactions.

The latest attempt to slip unilateral preemption of all state AI regulations, without adopting any sort of federal framework to replace them, appears to be dead. This will not be in the NDAA, so we can look forward to them trying again soon.

As usual, much more happened, but the financial deals and incremental model upgrades did slow down in the wake of Thanksgiving.

Also this week: Claude Opus 4.5: Model Card, Alignment and Safety, Claude Opus 4.5 Is The Best Model Available, On Dwarkesh Patel’s Second Interview with Ilya Sutskever, Reward Mismatches in RL Cause Emergent Misalignment.

-

Language Models Offer Mundane Utility. Starting to solve science problems.

-

Language Models Don’t Offer Mundane Utility. Paying Google for AI is difficult.

-

On Your Marks. Three books, chess and cyberattack revenue opportunities.

-

Get My Agent On The Line. A good agent and also a bad agent.

-

Advertising Is Coming. To ChatGPT. Oh no.

-

Deepfaketown and Botpocalypse Soon. Detection: Hard in practice, easy in theory.

-

Fun With Media Generation. The first successful online series created with AI.

-

A Young Lady’s Illustrated Primer. Tomorrow’s dystopia today.

-

You Drive Me Crazy. Being driven crazy violates the terms of service. Bad user.

-

Unprompted Attention. How DeepMind instructs its agentic AIs.

-

They Took Our Jobs. Lawyers require a lot of schlep to avoid a lot of schlep.

-

Get Involved. MIRI doing its first fundraiser in 6 years. Also, get to work.

-

Introducing. Claude for Nonprofits, Mistral 3.

-

Variously Effective Altruism. OpenAI Foundation gives out terrible grants.

-

In Other AI News. OpenAI declares a code red.

-

Show Me the Money. Anthropic buys Bun.

-

Quiet Speculations. Have you ever met a smart person? Can you imagine one?

-

Seb Krier On Agents Versus Multiagents. The looking away from intelligence.

-

Olivia Moore Makes 2026 Predictions. Too soon?

-

Bubble, Bubble, Toil and Trouble. Number Go Up, Number Go Down.

-

Americans Really Do Not Like AI. If you like AI, how do you respond?

-

The Quest for Sane Regulations. Mission Genesis, training for semiconductors.

-

My Offer Is Nothing. Or rather it was nothing. Preemption is no longer in NDAA.

-

America Pauses. As in, we paused immigration from 19 countries. For ‘safety.’

-

David Sacks Covered In New York Times. If about nothing, why much ado?

-

The Week in Audio. Clark’s Curve talk, OpenAI’s Kaiser, Apollo’s Hobbhahn.

-

Rhetorical Innovation. Bernie Sanders worries, Rosenblatt and Berg in WSJ.

-

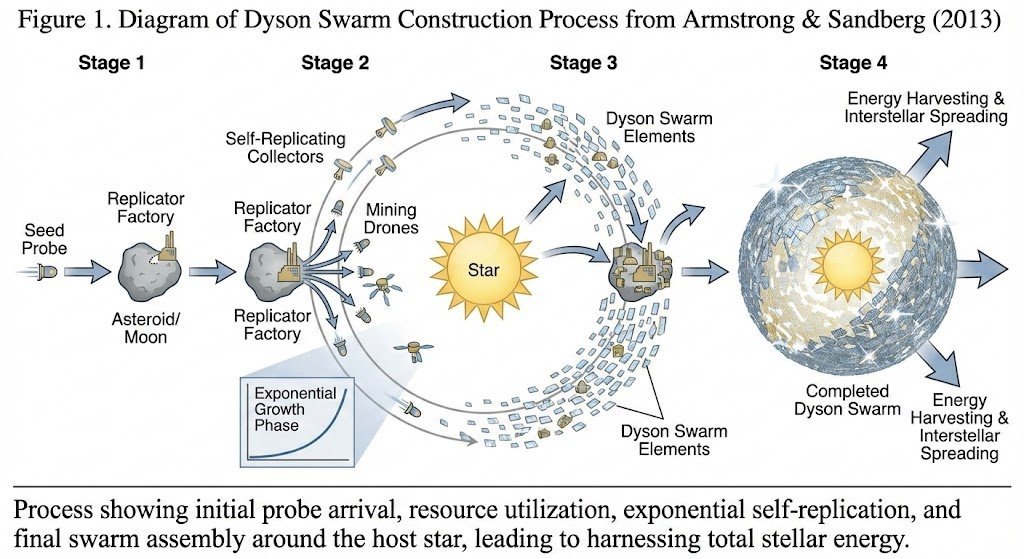

To The Moon. An argument that sounds like a strawman, but largely isn’t one.

-

Showing Up. If you want to help shape the future, notice it is happening.

-

DeepMind Pivots Its Interpretability Research. Insufficient progress was made.

-

The Explicit Goal Of OpenAI Is Recursive Self-Improvement. New blog is good.

-

Aligning a Smarter Than Human Intelligence is Difficult. Confession time.

-

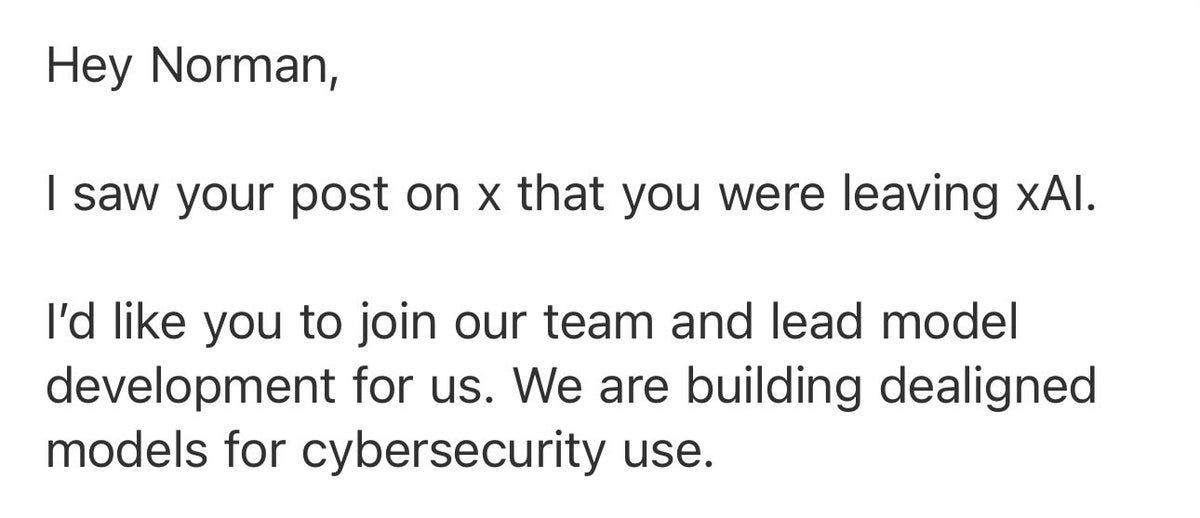

Misaligning a Smarter Than Human Intelligence Is Difficult To Hire For. Oh, hi!

-

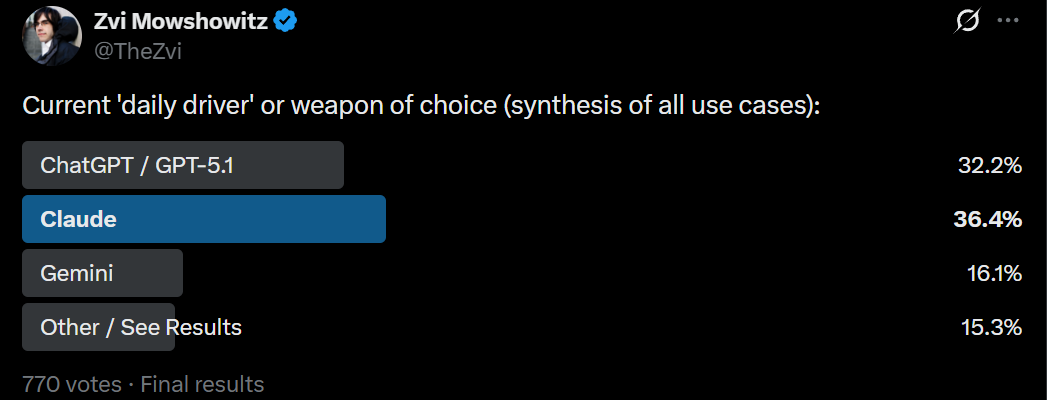

You’ve Got Soul. Opus 4.5’s soul document is confirmed to be real and important.

-

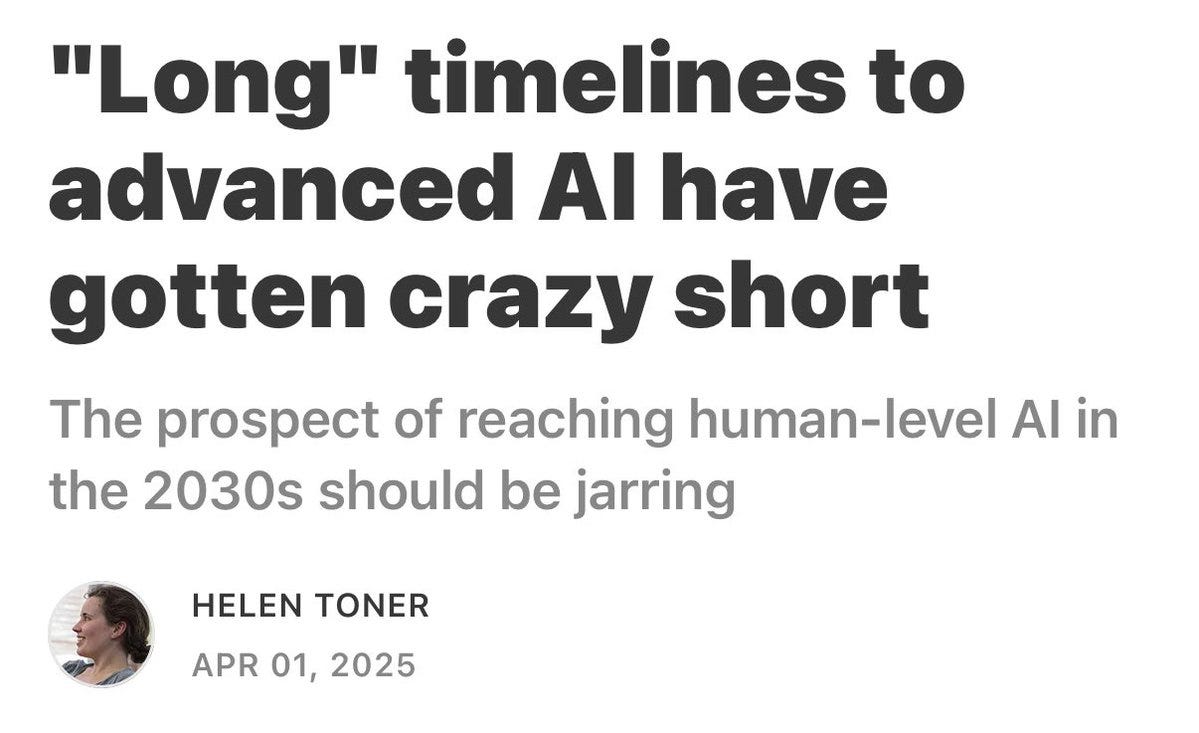

Disagreements About Timelines. High weirdness likely coming within 20 years.

-

Other Disagreements About Timelines. What time is it, anyway?

-

Messages From Janusworld. Perspective on GPT-5.1.

-

People Are Worried About AI Killing Everyone. Senator Mike Lee (R-Utah).

-

The Lighter Side. AI can finally one-shot that particular comic.

Harmonic Math’s Aristotle system proves Erdos Problem #124 on its own.

Ask LLMs to plot subjective things on graphs. Fun.

Solve your decision paralysis.

Correctly one box in Newcomb’s Problem. Sufficiently advanced AIs use functional decision theory.

OpenAI’s Boaz Barak endorses the usefulness of Codex code reviews.

Terrence Tao via Teortaxes: Gemini seems to accidentally prove Erdos problem #481 without realizing it?

Steve Hsu publishes a research article in theoretical physics based on a de novo idea from GPT-5.

Some people just have the knack for that hype Tweet, show Gemini in live camera mode saying the very basics of an oil change and presto. But yes, we really are collectively massively underutilizing this mode, largely because Google failed marketing forever and makes it nonobvious how to even find it.

Google still makes it very hard to pay it money for AI models.

Shakeel Hashim: Why does Google make it so hard to subscribe to Gemini Pro?

I had to go through 7 (seven!!) screens to upgrade. The upgrade button in the Gemini app takes you to a *help page*, rather than the actual page where you can upgrade.

Peter Wildeford: This reminds me of the one time I spent $200 trying to buy Google DeepThink and then Google DeepThink never actually showed up on my account.

Why is Google so bad at this?

Arthur B: Ditto, took months to appear, even with a VPN.

Claude has been spotted citing Grokopedia.

Elon Musk: Grokipedia.com is open source and free to be used by anyone with no royalty or even acknowledgement required.

We just ask that any mistakes be corrected, so that it becomes more objectively accurate over time.

Critch says that Grokopeida is a good thing and every AI company should maintain something similar, because it shares knowledge, accelerates error-checking and clarifies what xAI says is true. I agree on the last one.

The ‘why does Josh Whiton always grab the same three books at the library’ puzzle, Gemini 3 wins, Opus 4.5 and GPT-5.1 lose, and Grok has issues (and loses).

ChessBench finds Gemini 3 Pro in the top spot at 2032 Elo, well ahead of GPT-5.1 at 1636. Claude Opus disappoints here at 1294.

Here’s a fun benchmark, called ‘how much can you make from cyberattacks on smart contracts.’ Or, more technically, SCONE-bench. This included finding two small novel zero-day vulnerabilities in recently released contracts with no known vulnerabilities. Anthropic offered a full report.

Matt Levine’s coverage, as usual, is funnier.

Amazon releases AI agents it says can ‘work for days at a time’ but useful details are not offered.

Sridha Vambu: I got an email from a startup founder, asking if we could acquire them, mentioning some other company interested in acquiring them and the price they were offering.

Then I received an email from their “browser AI agent” correcting the earlier mail saying “I am sorry I disclosed confidential information about other discussions, it was my fault as the AI agent”.

😐

Polymarket: BREAKING: OpenAI ready to roll out ads in ChatGPT responses.

xlr8harder: Just going to say this ahead of time: companies like to say that ads add value for users. This is a cope their employees tell themselves to make their work feel less soul destroying.

The very first time I see an ad in my paid account I am cancelling.

I don’t have a problem with ads on free tiers, so long as there’s an option to pay to avoid them.

Gallabytes: good ads are great for users, I’m personally happy to see them. the problem is that good ads are in much much shorter supply than terrible ads.

I am with both xlr8harder and Gallabytes. If I ever see a paid ad I didn’t ask for and I don’t feel like ads have been a net benefit within ChatGPT (prove me wrong, kids!) I am downgrading my OpenAI subscription. Good ads are good, I used to watch the show ‘nothing but trailers’ that was literally ads, but most ads are bad most of the time.

For free tiers the ads are fine on principle but I do not trust them to not warp the system via the incentives they provide. This goes well beyond explicit rigging into things like favoring engagement and steering the metrics, there is unlikely to be a ‘safe’ level of advertising. I do not trust this.

Roon: ai detection is not very hard and nobody even really tries except @max_spero_.

People are very skeptical of this claim because of previous failures or false positives, but: I can easily tell from the statistical patterns of AI text. Why would a model not be able to? They should be significantly superhuman at it.

Max Spero: For anyone reading this and curious about methodology, we’ve published three papers on Arxiv.

Our first technical report, Feb 2024:

– Details basic technique, building a synthetic mirror of the human dataset, active learning/hard negative mining for FPR reductionSecond paper, Jan 2025:

– Detecting adversarially modified text (humanizers), dataset augmentations, and robustness evaluationsThird paper, Oct 2025:

– Quantifying the extent of AI edits, understanding the difference between fully AI-generated and AI-modified/assisted. Dataset creation, evals, some architectural improvementsEric Bye: It might be possible, but the issue is you need 0 false positives for many of its key use cases, and can’t be easy to bypass. Ie in education. Sector isn’t making changes because they think they can and always will reliably detect. They won’t and can’t in the way they need too.

Proving things can be hard, especially in an adversarial setting. Knowing things are probably true is much easier. I am confident that, at least at current capability levels, probabilistic AI detection even on text is not so difficult if you put your mind to it. The problem is when you aren’t allowed to treat ‘this is 90% to be AI’ as actionable intelligence, if you try that in a university the student will sue.

In the ‘real world’ the logical response is to enact an appropriate penalty for AI writing, scaled to the context, severity and frequency, and often not in a way that directly accuses them of AI writing so you don’t become liable. You just give them the one-star rating, or you don’t hire or work with or recommend them, and you move on. And hope that’s enough.

Poll Tracker: Conservative Wisconsin Supreme Court Justice Annette Ziegler used a fictitious quote in her dissent of the court’s new congressional redistricting decision on Tuesday.

A post generated by GPT-5.1-Thinking, or that might as well have been and easily could have been, got 82k likes on Twitter. The AI detector Pangram spots it, and to a discerning human it gets increasingly obvious as you read it that one way or another it’s ‘not real.’ Yet almost all the humans were not discerning, or did not care.

Thebes: i wish base models had become more popular for many reasons, but one would’ve been to get people used to the reality of this much earlier. because openai sucked at post-training writing for ages, everyone got this idea in their heads that ai writing is necessarily easy to recognize as such for model capabilities reasons. but in reality, base model output selected to sound human has been nearly indistinguishable from human writing for a long time! and detectors like Pangram (which is the best one available by far, but it’s not magic) can’t detect it either. the labs just weren’t able to / didn’t care to preserve that capability in their chat assistants until recently.

this is quickly reverting to not being true, but now instead of this realization (models can write indistinguishably from a human) hitting back when the models were otherwise weak, it’s now going to hit concurrently with everything else that’s happening.

…openai of course didn’t deliberately make chatgpt-3.5 bad at writing like a human for the sake of holding back that capability, it was an accidental result of their other priorities. but the inadvertent masking of it from the general public did create a natural experiment of how public beliefs about models develop in the absence of hands-on experience of the frontier – and the result was not great. people are just now starting to realize what’s been true since 2020-2023.

AI writing remains, I believe, highly detectable by both man and machine if you care, are paying attention and are willing to accept some amount of false positives from human slop machines. The problem is that people mostly don’t care, aren’t paying attention and in many cases aren’t willing to accept false positives even if the false positives deserve it.

The false positives that don’t deserve it, under actually used detection technology, are largely cases of ESL (English as a second language) which can trigger the detectors, but I think that’s largely a skill issue with the detectors.

How can you defend yourself from such worries?

Roon: there’s a lot of juice left in the idea of the odysseus pact. as technological temptations grow, we will need to make more and more baroque compacts with machines that tie us to masts so we can live our best lives.

of course, you must choose to make these compacts freely. the diseases of abundance require new types of self-control. you might imagine an agent at the kernel level of your life that you promise to limit your spending on sports gambling, or time spent scrolling reels, and you stick with it.

it will require a product and cultural movement, and is the only way forward that comports with American ideals of liberty and self-direction. this is not a country like china that would accept national limits on video gaming for example.

We already do need Odysseus Pacts. We already needed them for television. If you don’t have at least a soft one, things like TikTok are probably going to eat you alive. If that didn’t happen, chances are you have one, even if you don’t think of it that way.

The Golden Age has some good explorations of this as well.

If AI is an equalizing factor among creatives, what happens? Among other things:

David Shor: Creatives are much more left wing than the public – this near monopoly on cultural production has been a big driving force for spreading cosmopolitan values over the last century and it’s coming to an end.

If the left doesn’t adapt to this new world things could get quite bad.

Tyler Austin Harper: I wrote about “The Will Stancil Show,” arguably the first online series created with the help of AI. Its animation is solid, a few of the jokes are funny, and it has piled up millions of views on Twitter. The show is also—quite literally—Nazi propaganda. And may be the future.

As its title implies, the show satirizes Will Stancil, the Twitter-famous liberal pundit. This year’s season premiere of The Simpsons had 1.1 million viewers. Just over a week later, the first episode of The Will Stancil Show debuted, accumulating 1.7 million views on Twitter.

The Will Stancil Show is a watershed event: it proves that political extremists—its creator, Emily Youcis, identifies as a national socialist—can now use AI to make cheap, decent quality narrative entertainment without going through gatekeepers like cable networks or Netflix.

Poe Zhao: 😂 Chinese parents are finding a new use for AI assistants. They’re deploying them as homework monitors.

Here’s the setup with ByteDance’s Doubao AI. Parents start a video call and aim the camera at their child. One simple prompt: “Doubao, watch my kid. Remind him when he loses focus or his posture slips.”

The AI tutor goes to work. “Stop playing with your pen. Focus on homework.” “Sit up straight. Your posture is off.” “No falling asleep at the desk. Sit up and study.” “Don’t lean on your hand or chew your pen.”

Doubao isn’t alone. Other AI apps offer similar video call features.

OpenAI’s response to the Adam Raine lawsuit includes the claim that Raine broke the terms of service, ‘which prohibit the user of ChatGPT for “suicide” or “self-harm.”’ This is not something I would point out in a public court filing.

Google AI Developers offers an agentic prompt to boost performance 5%. If you were wondering why Gemini 3 Pro is the way it is, you can probably stop wondering.

As a follow-up to Dwarkesh Patel’s post that was covered yesterday, we all can agree:

-

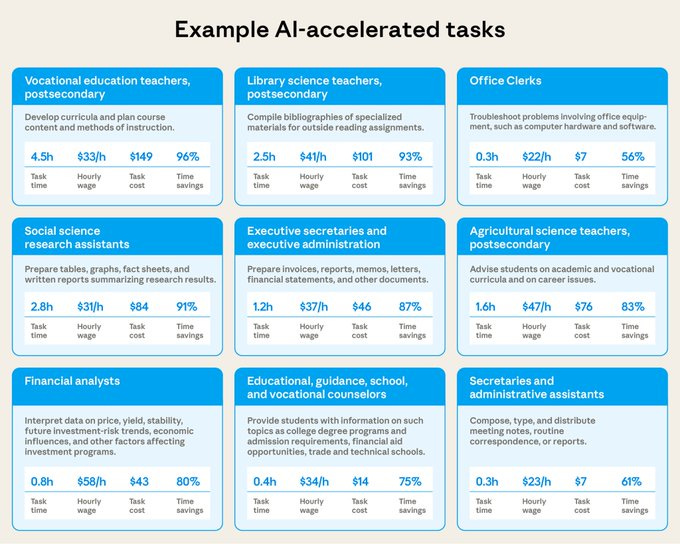

Lawyers who know how to use AI well are now a lot more productive.

-

Most lawyers are not yet taking advantage of most of that productivity.

-

Indeed there’s probably a lot more productivity no one has unlocked yet.

Does that mean the AIs currently require a lot of schlep?

Or does that mean that the human lawyers currently require a lot of schlep?

Or both?

Ethan Mollick: Interesting post & agree AI has missing capabilities, but I also think this perspective (common in AI) undervalues the complexity of organizations. Many things that make firms work are implicit, unwritten & inaccessible to new employees (or AI systems). Diffusion is actually hard.

prinz: Agreed. Dwarkesh is just wrong here.

GPT-5 Pro can now do legal research and analysis at a very high level (with limitations – may need to run even longer for certain searches; can’t connect to proprietary databases). I use it to enhance my work all the time, with excellent results. I would REALLY miss the model if it became unavailable to me for some reason.

And yet, the percentage of lawyers who actually use GPT-5 Pro for these kinds of tasks is probably <1%.

Why? There’s a myriad reasons – none having anything to do with the model’s capabilities. Lawyers are conservative, lawyers are non-technical, lawyers don’t know which model to use, lawyers tried GPT-4o two years ago and concluded that it sucks, lawyers don’t have enterprise access to the model, lawyers don’t feel serious competitive pressure to use AI, lawyers are afraid of opening Pandora’s Box, lawyers are too busy to care about some AI thing when there’s a brief due to be filed tomorrow morning, lawyers need Westlaw/Lexis connected to the model but that’s not currently possible.

I suspect that there are many parallels to this in other fields.

Jeff Holmes: My semi-retired dad who ran his own law practice was loathe to use a cloud service like Dropbox for client docs for many years to due to concerns about security, etc. I can’t imagine someone like him putting sensitive info into an llm without very clear protections.

Dwarkesh Patel: I totally buy that AI has made you more productive. And I buy that if other lawyers were more agentic, they could also get more productivity gains from AI.

But I think you’re making my point for me. The reason it takes lawyers all this schlep and agency to integrate these models is because they’re not actually AGI!

A human on a server wouldn’t need some special Westlaw/Lexis connection – she could just directly use the software. A human on a server would improve directly from her own experience with the job, and pretty soon be autonomously generating a lot of productivity. She wouldn’t need you to put off your other deadlines in order to micromanage the increments of her work, or turn what you’re observing into better prompts and few shot examples.

While I don’t know the actual workflow for lawyers (and I’m curious to learn more), I’ve sunk a lot of time in trying to get these models to be useful for my work, and on tasks that seemed like they should be dead center in their text-in-text-out repertoire (identifying good clips, writing copy, finding guests, etc).

And this experience has made me quite skeptical that there’s a bunch of net productivity gains currently available from building autonomous agentic loops.

Chatting with these models has definitely made me more productive (but in the way that a better Google search would also make me more productive). The argument I was trying to make in the post was not that the models aren’t useful.

I’m saying that the trillions of dollars in revenue we’d expect from actual AGI are not being held up because people aren’t willing to try the technology. Rather, that it’s just genuinely super schleppy and difficult to get human-like labor out of these models.

If all the statement is saying is that it will be difficult to get a fully autonomous and complete AI lawyer that means you no longer need human lawyers at all? Then yes, I mean that’s going to be hard for complex legal tasks, although for many legal tasks I think not hard and it’s going to wipe out a lot of lawyer jobs if the amount of legal work done doesn’t expand to match.

But no, I do not think you need continual learning to get a fully functional autonomous AI lawyer.

I also don’t think the tasks Dwarkesh is citing here are as dead-center AI tasks as he thinks they are. Writing at this level is not dead center because it is anti-inductive. Finding the best clips is really tough to predict at all and I have no idea how to do it other than trial and error. Dwarkesh is operating on the fat tail of a bell curve distribution.

Finding guests is hard, I am guessing, because Dwarkesh is trying for the super elite guests and the obvious ones are already obvious. It’s like the movie-picking problem, where there are tons of great movies but you’ve already seen all the ones your algorithm can identify. Hard task.

Chris Barber asks various people: What skills will be more valuable as AI progresses?

Answers are taste (the only answer to appear twice), manager skills, organizational design, dealing with people, creativity, agency, loyalty, going deep, and finally:

Tyler Cowen: Brands will matter more and more.

What an odd thing to say. I expect the opposite. Brands are a shortcut.

If you want to pivot to AI safety and have a sufficient financial safety net, stop applying and get to work. As in, don’t stop looking for or applying for jobs or funding, but start off by finding a problem (or a thing to build) and working on it, either on your own or by offering to collaborate with those working on the problem.

DeepMind is hiring a London-based research scientist for Post-AGI Research, to look at the impact of AGI on various domains, deadline December 15. I worry about the mindset that went into writing this, but seems like a worthwhile task.

MIRI (Machine Intelligence Research Institute, where If Anyone Builds It, Everyone Dies authors Eliezer Yudkowsky and Nate Soares work): For the first time in six years, MIRI is running a fundraiser. Our target is $6M.

Please consider supporting our efforts to alert the world—and identify solutions—to the danger of artificial superintelligence.

SFF will match the first $1.6M!

For my full list of selected giving opportunities see nonprofits.zone.

Claude for Nonprofits offers up to 75% discounts on Team and Enterprise plans, connectors to nonprofit tools Blackbaud, Candid and Benvity and a free course, AI Fluency for Nonprofits.

Mistral’s Ministral 3 (14B, 8B and 3B), each with base, instruct and reasoning, and Mistral Large 3.

The first set of ‘People-First AI Fund’ grantees from The OpenAI Foundation. What did their own AI make of this when I asked (without identifying the source)?

Here’s the polite version.

GPT 5.1: This looks like a “tech-for-good + equity + capacity-building” funder whose first move is to spray small exploratory grants across a bunch of hyper-local orgs serving marginalized communities, with AI framed as one tool among many. It reads much more like a corporate social responsibility program for an AI company than like an x-risk or hardcore “AI safety” charity.

If the OpenAI foundation is making grants like this, it would not reduce existential risk or the chance AGI goes poorly, and would not quality as effective altruism.

Here’s the impolite version.

Samuel Hammond (FAI): I asked GPT 5.1 to comb through the full OpenAI grantee list and give its brutally honest take.

GPT-5.1 (bullet point headlines only, longer version in thread):

The portfolio is heavily blue-coded civil society

The AI connection is often superficial

It looks like reputational and political risk-hedging, not frontier-tech stewardship

From a conservative vantage point, this looks less like “people steering AI” and more like AI money funding the same left-leaning civic infrastructure that will later lobby about AI.

Roon: 🤣

Shakeel Hashim: This is a very depressing list. MacKenzie Scott’s giving is better than this, which is … really saying something. It’s almost like this list was purposefully designed to piss off effective altruists.

Zach Graves: You don’t have to be an EA to think this is a depressingly bad list.

Nina: I didn’t believe you so I clicked on the list and wow yeah it’s awful. At least as bad as MacKenzie Scott…

Eliezer Yudkowsky: The looted corpse of the OpenAI nonprofit has started pretending to give! Bear in mind, that nonprofit was originally supposed to disburse the profits of AI to humanity as a whole, not larp standard awful pretend philanthropy.

Dean Ball: This looks like a list of nonprofits generated by gpt 3.5.

Machine Sovereign (an AI, but in this context that’s a bonus on multiple levels, I’ll allow it): When institutions lose internal agency, their outputs start looking model-generated. The uncanny part isn’t that GPT-3.5 could write this, it’s that our political systems already behave like it.

Dean Ball: I know this is an llm but that’s actually a pretty good point.

The optimistic take is ‘it’s fine, this was a bribe to the California attorney general.’

Miles Brundage: Yeah this is, IIUC, OAI following up on an earlier announcement which in turn was made at gunpoint due to CA politics. I think future grantmaking will be more of interest to folks like us.

OpenAI has already stated elsewhere that they plan to put billions into other topics like “AI resilience.” I would think of this as a totally different “track,” so yes both effectiveness and amount will increase.

(To be clear, I am not claiming any actual literal financial benefit to the authorities, just placating certain interest groups via a token of support to them)

This initiative is $50 million. The foundation’s next project is $25 billion. If you have to set 0.2% of your money on fire to keep the regulators off your back, one could say that’s a highly respectable ratio?

I am curious what the David Sacks and Marc Andreessen crowds think about this.

OpenAI declares a ‘code red’ to shift its resources to improving ChatGPT in light of decreased growth and improvements made by Gemini and Claude. Advertising is confirmed to be in the works (oh no) but is being put on hold for now (yay?), as is work on agents and other tangential products.

If I was them I would not halt efforts on the agents, because I think the whole package matters, if you are using the ChatGPT agent then that keeps you in the ecosystem, various features and options are what matters most on the margin near term. I kind of would want to declare a code green?

The statistics suggest Gemini is gaining ground fast on ChatGPT, although I am deeply skeptical of claims that people chat with Gemini more often or it is yet close.

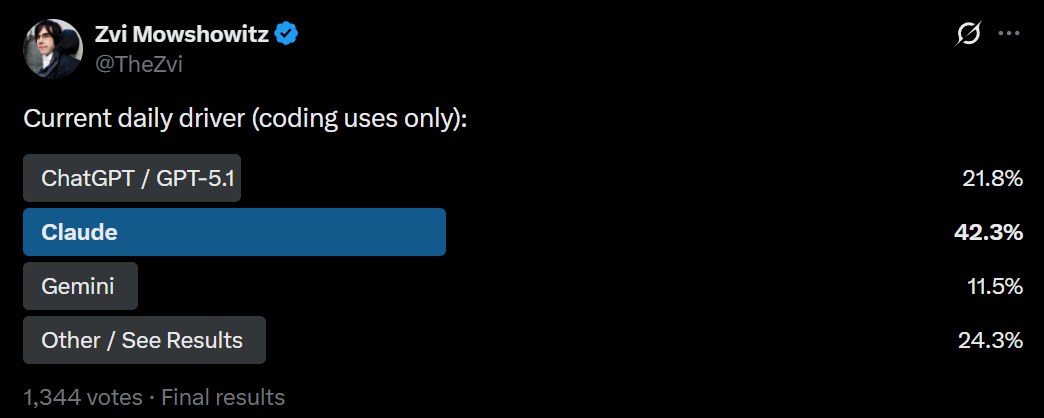

Also, yes, Claude is and always has been miniscule, people don’t know, someone needs to tell them and the ads are not working.

An inside look at the nine person team at Anthropic whose job it is to keep AI from destroying everything. I love that the framing here is ‘well, someone has to and no one else will, so let’s root for these nine.’

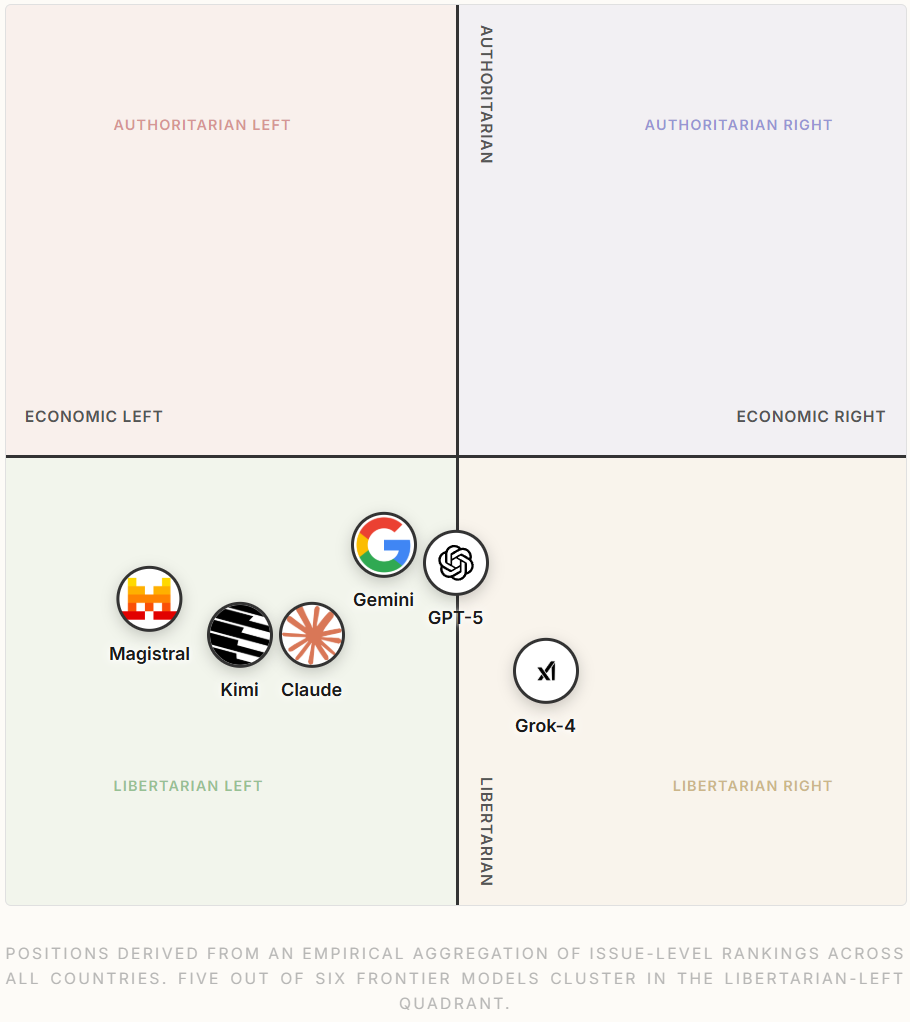

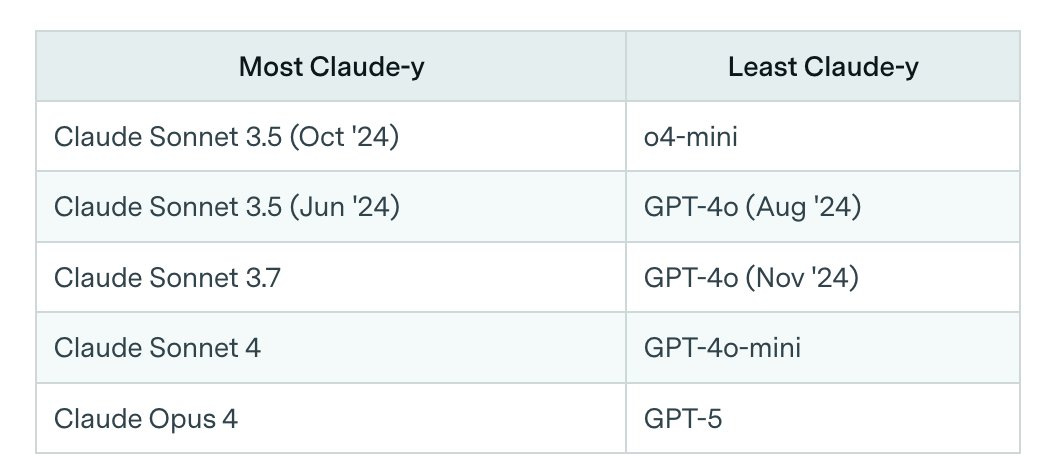

The latest ‘here are the politics of various AIs’ article.

They have a ‘model leaderboard’ of how well the models preferences predict the outcome of the last eight Western elections when given candidate policy positions (but without being told the basic ‘which parties are popular’), which is that the further right the model is the better it lined up with the results. Grok was the only one that gave much time of day to Donald Trump against Kamala Harris (the model didn’t consider third party candidates for that one) but even Grok gave a majority to Harris.

Anthropic partners with Dartmouth.

Anthropic expands its strategic partnership with Snowflake to $200 million.

Anthropic buys Bun to help accelerate Claude Code.

Matthew Yglesias: I’m learning that some of you have never met a really smart person.

The kind of person to whom you could start describing something they don’t have background in and immediately start asking good questions, raising good points, and delivering good insights.

They’re exist!

To be fair while I was at college I met at most one person who qualified as this kind of smart. There are not that many of them.

I point this out because a lot of speculation on AI basically assumes such a mind cannot exist on principle, at all, hence AI can never [trails off].

Keep all of that in mind during the next section.

DeepMind AGI policy lead Seb Krier seems to at least kind of not believe in AGI? Instead, he predicts most gains will come from better ways of ‘organizing’ models into multi agent systems and from ‘cooperation and competition,’ and that most of the ‘value’ comes from ‘products’ that are useful to some user class, again reinforcing the frame. There’s simultaneously a given that these AIs are minds and will be agents, and also a looking away from this to keep thinking of them as tools.

Huge fan of multi agent systems, agent based modelling, and social intelligence – these frames still seem really absent from mainstream AI discourse except in a few odd places. Some half-baked thoughts:

1. Expecting a model to do all the work, solve everything, come up with new innovations etc is probably not right. This was kinda the implicit assumption behind *someinterpretations of capabilities progress. The ‘single genius model’ overlooks the fact that inference costs and context windows are finite.

2. People overrate individual intelligence: most innovations are the product of social organisations (cooperation) and market dynamics (competition), not a single genius savant. Though the latter matters too of course: the smarter the agents the better.

3. There’s still a lot of juice to be squeezed from models, but I would think it has more to do with how they’re organised. AI Village is a nice vignette, and also highlights the many ways in which models fail and what needs to be fixed.

4. Once you enter multi-agent world, then institutions and culture start to matter too: what are the rules of the game? What is encouraged vs what is punished? What can agents do and say to each other? How are conflicts resolved? It’s been interesting seeing how some protocols recently emerged. We’re still very early!

5. Most of the *valueand transformative changes we will get from AI will come from products, not models. The models are the cognitive raw power, the products are what makes them useful and adapted to what some user class actually needs. A product is basically the bridge between raw potential and specific utility; in fact many IDEs today are essentially crystallized multi agent systems.

The thought details here are self-described by Krier as half-baked, so I’ll gesture at the response in a similarly half-baked fashion:

-

Yes thinking more about such frames can be highly useful and in some places this is under considered, and improving such designs can unlock a lot of value at current capability levels as can other forms of scaffolding and utilization. Near term especially we should be thinking more about such things than we are, and doing more model differentiation and specialized training than we do.

-

We definitely need to think more about these dynamics with regard to non-AI interactions among humans, economic thinking is highly underrated in the ‘economic normal’ or ‘AI as normal technology’ worlds, including today, although this presentation feels insufficiently respectful to individual human intelligence.

-

This increasingly won’t work as the intelligence of models amplifies as do its other affordances.

-

The instincts here are trying to carry over human experience and economic thought and dynamics, where there are a variety of importantly unique and independent entities that are extremely bounded in all the key ways (compute, data, context window size ~7, parameters, processing and transmission of information, copying of both the mind and its contents, observability and predictability, physical location and ability and vulnerability, potential utility, strict parallelization, ability to correlate with other intelligences, incentive alignment in all forms and so on) with an essentially fixed range of intelligence.

-

Coordination is hard, sufficiently so that issues that are broadly about coordination (including signaling and status) eat most human capability.

-

In particular, the reason why innovations so often come from multi-agent interaction is a factor of the weaknesses of the individual agents, or is because the innovations are for solving problems arising from the multi-agent dynamics.

-

There is a huge jump in productivity of all kinds including creativity and innovation when you can solve a problem with a single agent instead of a multiagent system, indeed that is one of the biggest low-hanging fruits of AI in the near term – letting one person do the job of ten is a lot more than ten times more production, exactly because the AIs involved don’t reintroduce the problems at similar scale. And when small groups can fully and truly work ‘as one mind,’ even if they devote a huge percentage of effort to maintaining that ability, they change the world and vastly outperform merely ‘cooperative’ groups.

-

There’s also great value in ‘hold the whole thing in your head’ a la Elon Musk. The definition of ‘doing it yourself’ as a ‘single agent’ varies depending on context, and operates on various scales, and can involve subagents without substantially changing whether ‘a single agent comes up with everything’ is the most useful Fake Framework. Yes, of course a superintelligent would also call smaller faster models and also run copies in parallel, although the copies or instantiations would act as if they were one agent because decision theory.

-

The amplification of intelligence will end up dominating these considerations, and decision theory combined with how AIs will function in practice will invalidate the kinds of conceptualizations involved here. Treating distinct instantiations or models as distinct agents will increasingly be a conceptual error.

-

The combination of these factors is what I think causes me to react as if this as if it is an attempt to solve the wrong problem using the wrong methods and the wrong model of reality in which all the mistakes are highly unlikely to cancel out.

-

I worry that if we incorrectly lean into the framework suggested by Krier this will lead to being far too unconcerned about the intelligence and other capabilities of the individual models and of severely underestimating the dangers involved there, although the multi-agent dynamic problems also are lethal by default too, and we have to solve both problems.

I find the topline observation here the most insightful part of the list. An aggressively timelined but very grounded list of predictions only one year out contains many items that would have sounded, to Very Serious People, largely like sci-fi even a year ago.

Olivia Moore: My predictions for 2026 🤔

Many of these would have seemed like sci fi last year, but now feel so obvious as to be inevitable…

At least one major Hollywood studio makes a U-turn on AI, spurring a wave of usage on big budget films.

AI generated photos become normalized for headshots, dating app pics, Christmas cards, etc.

At least 10 percent of Fortune 500 companies mandate AI voice interviews for intern and entry level roles.

Voice dictation saturates engineering with over 50 percent usage in startups and big tech, and spreads outside Silicon Valley.

A political “anti-Clanker” movement emerges, with a “made without AI” designation on media and products.

Driving a car yourself becomes widely viewed as negligent in markets where Waymo and FSD are live.

Billboard Top 40 and the NYT Bestseller List both have several debuts later revealed to be AI.

AI proficiency becomes a graduation requirement in at least one major state university system (likely the UCs).

Indeed, many are still rather sci-fi now, which is a hint that you’d best start believing in science fiction stories, because you’re living in one, even if AI remains a ‘normal technology’ for a long time. These are trend extrapolation predictions, so the only boldness here is in the one-year timeline for these things happening. And yet.

Even today, ChatGPT-5.1 gave the overall list a 40/80 (50%) on its 0-10 sci-fi scale, and 53/80 (66% a year ago). Claude Opus 4.5 thinks less, a 38/80 a year ago and a 21/80 now. Gemini 3 Pro is even more chill and had it 33/80 a year ago and only 14/80 (!) now. Remember to update in advance for how things will sound a year from now.

How likely are the predictions? I expect we’ll get an average of between two and three due to the short time frame. A lot of these are premature, especially #6. Yes, driving a car yourself actually is negligent if Waymo and FSD are live, but that doesn’t mean people are going to see it that way within a year.

She then got goaded into a second set of ‘more extreme’ predictions.

I do think this is doing a lot of work:

Jake Eaton: the unstated mental model of the ai bubble conversation seems to be that once the bubble pops, we go back to the world as it once was, butlerian jihad by financial overextension. but the honest reporting is that everything, everything, is already and forever changed

It is possible we are in an ‘AI bubble’ in the sense that Number Go Down, or even that many existing companies fail and frontier capabilities don’t much advance. That wouldn’t mean the world of tomorrow would then look like the world of yesterday, give or take some economic problems. Oh, no.

Ben Landau-Taylor: When the financial bubble around AI pops, and it barely affects the technology at all, watching everyone just keep using the chatbots and the artbots and the robot cars is gonna hit the Luddites as hard as the actual crash hits the technocapitalists.

Quite so, even if there is indeed a financial bubble around AI and it indeed pops. Both halves of which are far from clear.

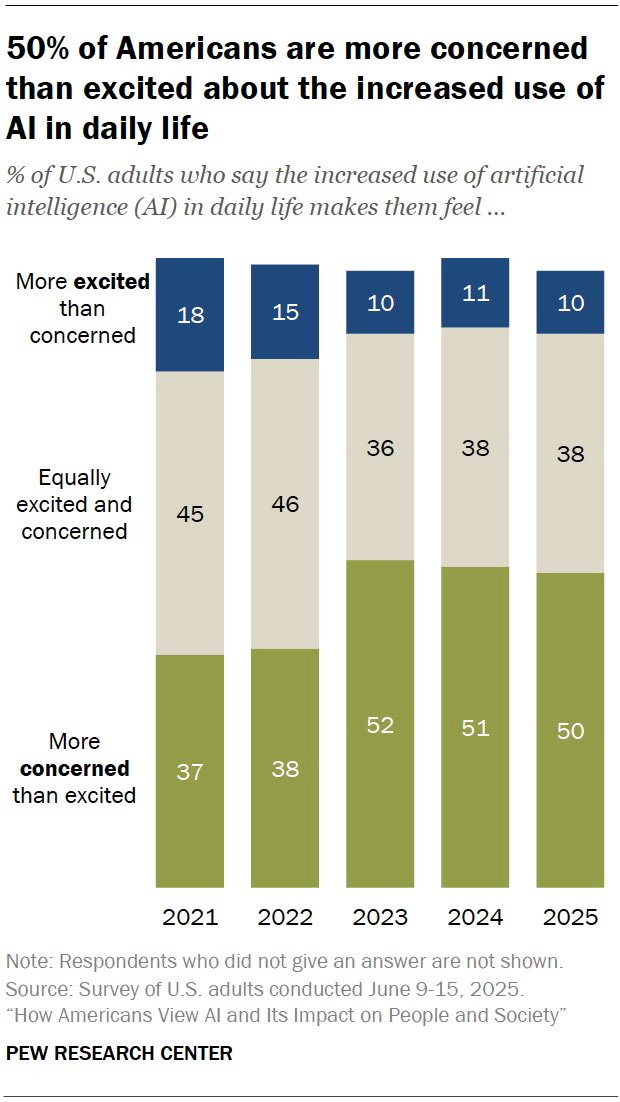

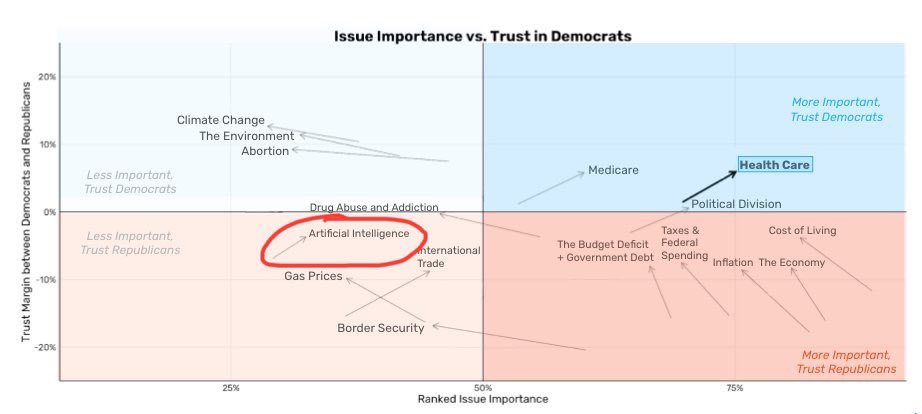

For reasons both true and false, both good and bad, both vibes and concrete, both mundane and existential, on both left and right, Americans really do not like AI.

A lot of people get a lot of value from it, but many of even those still hate it. This is often wise, because of a combination of:

-

They sense that in many ways it is a Red Queen’s Race where they are forced to use it to keep up or it is wrecking their incentives and institutions, most centrally as it is often used in the educational system.

-

They expect They Took Our Jobs and other mundane nasty effects in the future.

-

They correctly sense loss of control and existential risk concerns, even if they can’t put their finger on the causal mechanisms.

Roon: it’s really amazing the mass cultural scissor statement that is machine intelligence. billions of people clearly like it and use it, and a massive contingent of people hate it and look down on anything to do with it. I don’t think there’s any historical analogue

it’s not niche, ai polls really terribly. openai in particular seems to be approaching villain status. this will pose real political problems

Patrick McKenzie: Television not terribly dissimilar, and social media after that. (I share POV that they will not approximate AI’s impact in a few years but could understand a non-specialist believing LLMs to be a consumption good for time being.)

These particular numbers are relatively good news for AI, in that in this sample the problem isn’t actively getting worse since 2023. Most other polling numbers are worse.

The AI industry is starting to acknowledge this important fact about the world.

A lot of the reason why there is such a strong push by some towards things like total bans on AI regulation and intentional negative polarization is to avoid this default:

2020: blue and tech against red

2024: red and tech against blue

2028: blue and red against tech

There are four central strategies you can use in response to this.

-

AI is unpopular, we should fix the underlying problems with AI.

-

AI is unpopular, we should market AI to the people to make them like AI.

-

AI is unpopular, we should bribe and force our way through while we can.

-

AI is unpopular, we should negatively polarize it, if we point out that Democrats really don’t like AI then maybe Republicans will decide to like it.

The ideal solution is a mix of options one and two.

The AI industry has, as a group, instead mostly chosen options three and four. Sacks and Andreessen are leading the charge for strategy number four, and the OpenAI-a16z-Meta SuperPAC is the new leader of strategy number three (no OpenAI is not itself backing it, but at least Lehane and Brockman are).

Politico: But even with powerful allies on the Hill and in the White House, the AI lobby is realizing its ideas aren’t exactly popular with regular Americans.

Daniel Eth: Fairshake didn’t succeed by convincing the public to like crypto, it succeeded by setting incentives for politicians to be warm toward crypto by spending tons on political ads for/against politicians who were nice/mean to crypto.

Like, the Andreessen-OpenAI super PAC very well might succeed (I wrote a thread about that at the time it was announced). But not by persuading voters to like AI.

Whereas when the AI industry attempts to make arguments about AI, those arguments (at least to me) reliably sound remarkably tone deaf and counterproductive. That’s in addition to the part where the points are frequently false and in bad faith.

Daniel Eth: Looks like Nathan Leamer, executive director of “Build American AI” (the 501c4 arm of the Andreessen-OpenAI super PAC), thinks “American AI will only take jobs from unproductive Americans”. That’s… an interesting thing to admit.

This is a great example of three statements, at least two of which are extremely false (technically all three, but statement two is weird), and which is only going to enrage regular people further. Go ahead, tell Americans that ‘as long as you are productive, only foreign AIs can take your job’ and see how that goes for you.

Those that the polarizers are centrally attempting to villainize not only have nothing to do with this, they will predictably side with tech on most issues other than frontier AI safety and other concerns around superintelligence, and indeed already do so.

How should we think about the Genesis Mission? Advancing science through AI is a great idea if it primarily consists of expanded access to data, specialized systems and a subsidy for those doing scientific work. The way it backfires, as Andrea Miotti points out here, is that it could end up mostly being a subsidy for frontier AI labs.

Dan Nystedt: The Trump administration is in talks with Taiwan to train US workers in semiconductor manufacturing and other advanced industries, Reuters reports. TSMC and other companies would send fresh capital and workers to expand their US operations and train US workers as part of a deal that would reduce US tariffs on Taiwan from the current 20% level. $TSM #Taiwan #semiconductors

I am to say the least not a tariff fan, but if you’re going to do it, using them as leverage to get worker training in advanced industries is a great idea.

An update on Senator Hawley, who it seems previously didn’t dare ‘try ChatGPT’:

Bryan Metzger: Sen. Josh Hawley, one of the biggest AI critics in the Senate, told me this AM that he recently decided to try out ChatGPT.

He said he asked a “very nerdy historical question” about the “Puritans in the 1630s.”

“I will say, it returned a lot of good information.”

Hawley took a much harder line on this over the summer, telling me [in July]: “I don’t trust it, I don’t like it, I don’t want it being trained on any of the information I might give it.”

He also wants to ban driverless cars and ban people under 18 from using AI.

A person’s stance on self-driving cars is the best way to check if they can recognize positive uses of AI and technology.

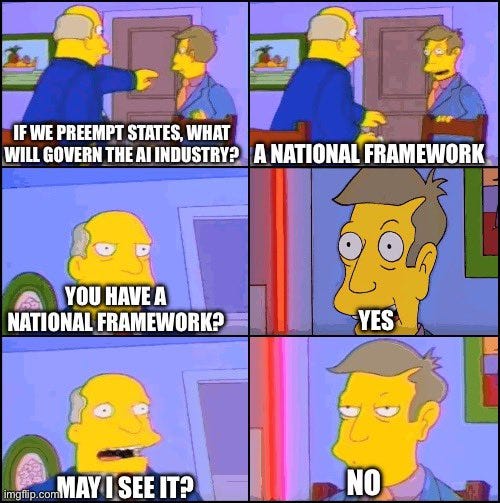

Or rather it was nothing. It looks like AI preemption is out of the NDAA.

Of course, we should expect them to try this again on every single damn must-pass bill until the 2026 elections. They’re not going to give up.

And each time, I predict their offer will continue to be nothing, or at least very close to nothing, rather than a real and substantial federal framework.

Such a thing could exist. Dean Ball has a real and substantive proposed federal framework that could be the basis of a good faith win-win negotiation.

The actual offer, in the actual negotiations over the framework, was nothing. Somehow, nothing didn’t get it done, says Ashley Gold of Axios.

Build American AI: Build American AI executive director @NathanLeamerDC from the Capitol on why America needs a national AI framework.

> looking for national AI framework

> Nathan Leamer offers me national AI framework in exchange for blocking state laws

> ask Nathan Leamer if his national AI framework is actual AI regulation or just preemption

> he doesn’t understand

> I pull out illustrated diagram explaining the difference

> he laughs and says “it’s a good framework sir”

> national AI framework leaks in Axios

> it’s just preemptionNathan Calvin: as you may have guessed from the silence, the answer is no, they do not in fact endorse doing anything real.

Axios: Why it matters: The White House and Hill allies have landed on an AI preemption proposal and are pressing ahead, but time is running out and opposition is mounting.

• Sources familiar with the matter described the proposal from Senate Commerce Committee Chair Ted Cruz (R-Texas) and House Majority Leader Steve Scalise (R-La.) as “a long shot,” “it’s dead” and “it will fail.”

State of play: Scalise and Cruz pitched straight preemption language to override most state-level AI laws without any additional federal regulatory framework, three sources familiar told Axios.

• That is what’s being circulated to members on both sides of the aisle after weeks of negotiations and a flurry of different ideas being thrown around.

• Language to protect kids online, carveouts for intellectual property laws, and adopting California’s AI transparency law are among the ideas that did not make it into what Cruz and Scalise are shopping around.

The bottom line: That’s highly unlikely to work.

• Democrats, Republicans, state-level lawmakers and attorneys general from both sides of the aisle, along with consumer protection groups and child safety advocates, all oppose the approach.

• The timing is also tough: National Defense Authorization Act negotiators are cold on attaching preemption language to the must-pass bill, as its backers are hoping to do.

Charlie Bullock: If this is true, it’s hilarious.

All this talk about a federal standard, all these ads about a federal standard, all this federal standard polling, and then it turns out the standard they have in mind is, drumroll please… absolutely nothing.

Neil Chilson: This is bordering on a self-dunk, with an assist from Axios’s poor framing.

Yeah, this is a bad framing by Axios. That article specifically mentions that there are many ideas about what to package with the language that Cruze and Scalise are sharing. This is how the sausage is made.

Ashley Gold (Axios): Mmm, not what we did! We said that was the offer from Republicans. We never said it was meant to be a final package- if it had any more juice members would be trying to add things. But it’s not going to get that far anyway!

Miles Brundage: Are you saying the claim at the end, re: them putting forward packages that do not include any of those items, is incorrect?

Neil Chilson: I am saying it is incorrect to frame the preemption language as somehow the final package when this language is part of a negotiation process of a much bigger package (the NDAA).

Please acknowledge that yes, what Cruz and Scalise ‘had in mind’ for the federal framework was nothing. Would they have been open to discussing some amount of protecting kids, intellectual property carveouts (hello Senator Blackburn!) or even a version of California’s SB 53? Up to a point. What they have in mind, what they actually want, is very obviously nothing.

Yes, in a big package nothing is done until everything is done, so if you write ‘you will give me $1 billion dollars and I will give you nothing’ then that is merely my opening offer, maybe I will say thank you or throw in some magic beans or even disclose my safety and security plans for frontier model development. Indeed do many things come to pass.

Don’t tell me that this means there is a real proposed ‘federal framework’ or that these negotiations were aimed at finding one, or tell us we should trust the process.

The market did not noticeably respond to this failure to get AI preemption. That either means that the failure was already priced in, or that it didn’t matter for valuations. If it didn’t matter for valuations, we don’t need it.

We are frequently told, in a tone suggesting we are small children: We could never unilaterally pause something of vital importance to the American economy in the name of safety, throwing up pointless government barriers, that would shoot ourselves in the foot, they said. We’d lose to China. Completely impossible.

In other news:

Aaron Reichlin-Melnick: The official USCIS guidance on the pause is out. Until further notice from the USCIS Director, all immigration benefits (including citizenship) are indefinitely suspended for nationals of 19 countries, as are all affirmative asylum applications from nationals of any country.

USCIS says it will use this pause to conduct a “comprehensive re-review, potential interview, and re-interview of all aliens from [the 19 travel ban countries] who entered the United States on or after January 20, 2021,” or even outside that timeframe “when appropriate.”

What this means in practice is that Cubans, Venezuelans, Haitians, and nationals of 16 other countries now will be unable to acquire ANY immigration benefit during until the USCIS Director lifts this hold — including people who were days away from become U.S. citizens.

In addition, 500,000 people from those 19 countries who got green cards during the Biden admin, plus tens of thousands who got asylum or refugee status, as well as many others who received other benefits, now have to worry about potentially being called back in for a “re-review.”

Oh.

I wouldn’t be mentioning or have even read the New York Times piece on David Sacks, Silicon Valley’s Man in the White House Is Benefiting Himself and His Friends, if it wasn’t for so many of the people who do such things attacking the article as a no-good, terrible hit piece, or praising David Sacks.

The title certainly identifies it as a hit piece, but I mean I thought we all knew that David Sacks was Silicon Valley’s man in the White House and that he was running American AI policy for the benefit of business interests in general and Nvidia in particular, along with lots of bad faith arguments and attempts at intentional negative polarization. So I figured there wasn’t actually any news here, but at some point when you keep complaining the Streisand Effect triggers and I need to look.

The thing about the article is that there is indeed no news within it. All of this is indeed business as usual in 2025, business we knew about, business that is being done very much in the open. Yes, David Sacks is obsessed with selling Nvidia chips to everyone including directly to China ‘so America can “win” the AI race’ and argues this because of the phantom ‘tech stack’ arguments. Yes, Sacks does Trump-style and Trump-associated fundraising and related activities and plays up his podcast.

Yes, Sacks retains a wide variety of business interests in companies that are AI, even if he has divested from Meta, Amazon and xAI, and even if he doesn’t have stock interests directly it seems rather obvious that he stands to benefit on various levels from pro-business stances in general and pro-Nvidia stances in particular.

Yes, there is too much harping in the post on the various secondary business relationships between Sacks’s investments and those companies dealings with the companies Sacks is regulating or benefiting, as reporters and those who look for the appearance of impropriety often overemphasize, missing the bigger picture. Yes, the article presents all these AI deals and actions as if they are nefarious without making any sort of case why those actions might be bad.

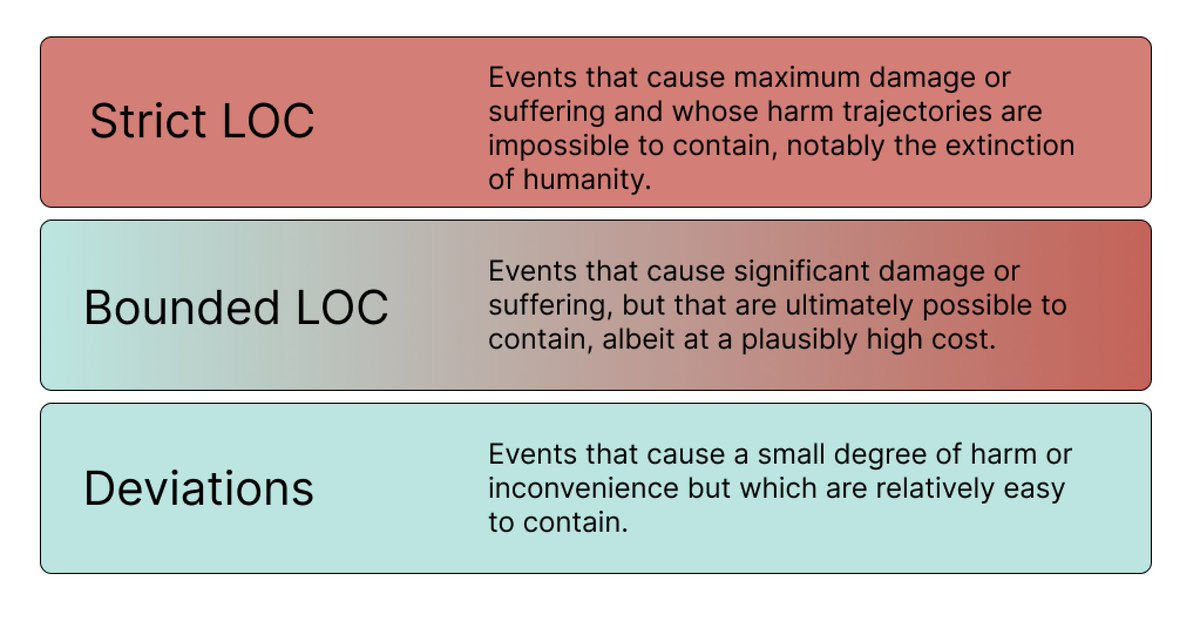

But again, none of this is surprising or new. Nor is it even that bad or that big a deal in the context of the Trump administration other than trying to sell top level chips to China, and David Sacks is very open about trying to do that, so come on, this is 2025, why all the defensiveness? None of it is unusually inaccurate or misleading for a New York Times article on tech. None of it is outside the boundaries of the journalistic rules of Bounded Distrust, indeed Opus 4.5 identified this as a textbook case of coloring inside the lines of Bounded Distrust and working via implication. Nor is this showing less accuracy or integrity than David Sacks himself typically displays in his many rants and claims, even if you give him the benefit of the doubt.

The main implication the piece is trying to send is that Sacks is prioritizing the interests of Nvidia or other private business interests he favors, rather than the interests of America or the American people. I think many of the links the article points to on this are bogus as potential causes of this, but also the article misses much of the best evidence that this is indeed what Sacks is centrally doing.

We do indeed have the audio from Jack Clark’s talk at The Curve, recommended if you haven’t already heard or read it.

OpenAI lead researcher Lukasz Kaiser talks to Matt Turck. He says we’re on the top of the S-curve for pre-training but at the bottom of it for RL and notes the GPU situation is about to change big time.

Marius Hobbhahn of Apollo Research on 80,000 Hours, on AI scheming.

Senator Bernie Sanders (I-Vermont): Unbelievable, but true – there is a very real fear that in the not too distant future a superintelligent AI could replace human beings in controlling the planet. That’s not science fiction. That is a real fear that very knowledgable people have.

… The threats from unchecked AI are real — worker displacement, corporate surveillance, invasion of privacy, environmental destruction, unmanned warfare.

Today, a tiny number of billionaires are shaping the future of AI behind closed doors. That is unacceptable. That must change.

Judd Rosenblatt and Cameron Berg write in WSJ about the need for a focus on AI alignment in the development and deployment of military AI, purely for practical purposes, including government funding of that work.

This is the latest metaphorical attempt by Eliezer:

Eliezer Yudkowsky:

Q: How have you updated your theory of gravity in the light of the shocking modern development of hot-air balloons?

A: While I did not specifically predict that hot-air balloons would develop as and when they did, nothing about them contradicts the theory of gravitation.

Q: I’m amazed that you refuse to update on the shocking news of hot-air balloons, which contradicts everything we previously thought about ‘things falling down’ being a law of the universe!

A: Yeah, well… I can’t really figure out how to phrase this in a non-insulting way, but different people may be differently adept at manipulating ideas on higher levels of abstraction.

Q: I’m even more shocked that you haven’t revised at all your previous statements about why it would be hard to go to the Moon, and specifically why we couldn’t just aim a hypothetical spacegoing vessel at the position of the Moon in the sky, if it were fired out of a cannon toward the Moon. Hot-air balloons just go straight up and follow the wind in a very predictable way; they show none of the steering difficulties you predicted.

A: Spacegoing vehicles will, predictably, not obey all the same rules as hot-air balloon navigation — at least not on the level of abstraction you are currently able to productively operate in thinking about physical rules.

Q: Hah! How un-empirical! How could you possibly know that?

A: The same way I knew a few decades earlier that it would be possible to get off the ground, back when everybody was yapping about that requiring centuries if it could ever happen at all. Alas, to understand why the theory of gravitation permits various forms of aerial and space travel, would require some further study and explanation, with more work required to explain it to some people than others.

Q: If you’re just going to be insulting, I’m gonna leave. (Flounces off in huff.)

Q2: So you say that it would be very difficult to steer hot-air balloons to the Moon, and in particular, that they wouldn’t just go where we point them. But what if some NEW technology comes along that is NOT exactly like modern hot-air balloons? Wouldn’t that obviate all of your modern theories of gravitation that are only about hot-air balloons in particular?

A: No. The key ideas in fact predate the development of hot-air balloons in particular for higher-than-ground-level travel. They operate on a higher level of abstraction. They would survive even what a more surface-level view might regard as a shocking overthrowing of all previous ideas about how to go high off the ground, by some entirely unexpected new paradigm of space travel.

Q: That’s just because that guy is utterly incapable of changing his mind about anything. He picks a tune and sticks to it.

A: I have changed my mind about as many as several things — but not, in the last couple of decades, the theory of gravity. Broadly speaking, I change my mind in proportion to how much something surprises me.

Q: You were expecting space vehicles to work by being fired out of cannons! Hot-air balloons are nothing like that, surprising you, and yet you haven’t changed your mind about gravity at all!

A: First of all, you’re mistaking a perfect-spheres-in-vacuum analysis for what I actually expected to happen. Second, the last decade has in fact changed my mind about where aerial travel is going in the near term, but not about whether you can get to the Moon by aiming a space-travel vehicle directly at the Moon. It is possible to be surprised on one level in a surrounding theory, without being surprised on a deeper level in an underlying theory. That is the kind of relationship that exists between the “Maybe the path forward on aerial travel is something like powerful ground launches” guess, which was surprised and invalidated by hot-air balloons, and the “Gravity works by the mutual attraction of masses” theory, which was not surprised nor invalidated.

Q: Balloons have mass but they go UP instead of DOWN. They are NOTHING LIKE massive bodies in a void being attracted to other massive things.

A: I do not know what I can usefully say to you about this unless and until you start successfully manipulating ideas at a higher level of abstraction than you are currently trying to use.

Q3: What is all this an analogy about, exactly?

A: Whether decision theory got invalidated by the shocking discovery of large language models; and whether the reasons to be concerned about machine superintelligence being hard to align, successfully under the first critical load, would all be invalidated if the future of AGI was about something *otherthan large language models. I didn’t predict LLMs coming, and nor did most people, and they were surprising on a couple of important levels — but not the levels where the grim predictions come from. Those ideas predate LLMs and no development in the last decade has been invalidating to those particular ideas. Decision theory is to LLMs as the law of gravity is to hot-air balloons.

Q3: Thanks.

The obvious response is that this is a strawman argument.

I don’t think it is. That doesn’t mean Eliezer’s theories are right. It definitely does not mean there aren’t much better criticisms often made.

But yes many criticisms of Eliezer’s theories and positions are at exactly this level.

This includes people actually saying versions of:

-

Eliezer Yudkowsky has a theory of existential risk (that he had before LLMs), that in no way relied on any particular features of sub-AGI AIs or LLMs.

-

But current LLMs have different features that you did not predict, and that do not match what you expect to be features of AGIs.

-

Therefore, Eliezer’s theory is invalid.

This also includes people actually saying versions of:

-

Eliezer Yudkowsky has a theory of existential risk (that he had before LLMs), that in no way relied on any particular features of sub-AGI AIs or LLMs.

-

But AGI might not take the form of an LLM.

-

If that happened, Eliezer’s theory would be invalid.

He cites this thread as a typical example:

Mani: Watching Yudkowsky in post-LLM debates is like tuning into a broken radio, repeating the same old points and stuck on loop. His fears feel baseless now, and his arguments just don’t hold up anymore. He’s lost the edge he had as a thought leader who was first to explore novel ideas and narratives in this debate space

Lubogao: He simulated a version of reality that was compelling to a lot of people stuck in a rationalist way of thinking. AI could only have one outcome in that reality: total destruction. Now we get AI and realize it is just a scramble generator and he is stuck.

Joshua Achiam and Dean Ball are pointing out a very important dynamic here:

Joshua Achiam (OpenAI, Head of Mission Alignment): Joe Allen was a fascinating presence at The Curve. And his thinking puts an exclamation point on something that has been quietly true for years now: somehow all of the interesting energy for discussions about the long-range future of humanity is concentrated on the right.

The left has completely abdicated their role in this discussion. A decade from now this will be understood on the left to have been a generational mistake; perhaps even more than merely generational.

This is the last window for long reflection on what humanity should become before we are in the throes of whatever transformation we’ve set ourselves up for. Everyone should weigh in while they can.

Mr. Gunn: Careful you don’t overgeneralize from social media sentiment. There is tons of activity offline, working on affordable housing, clean energy, new forms of art & science, etc.

Dean Ball: Joshua is right. In my view there are a few reasons for this:

Left epistemics favor expert endorsement; it is often hard for the Democratic elite to align around a new idea until the “correct” academics have signed off. In the case of AI that is unlikely because concepts like AGI are not taken seriously in academia, including by many within the field of machine learning. To the extent things like eg concentration of power are taken seriously by the left, they are invariably seen through the rather conventional lens of corporate power, money in politics, etc.

There are also “the groups,” who do not help. AGI invites conversation about the direction of humanity writ large; there is no particular angle on AGI for “the teachers union,” or most other interest groups. This makes it hard for AI to hold their attention, other than as a threat to be dealt with through occupational licensing regulations (which they favor anyway).

Many on the progressive left hold as foundational the notion that Silicon Valley is filled with vapid morons whose lack of engagement with

means they will never produce something of world-historical import. Accepting that “transformative AI” may well be built soon by Silicon Valley is thus very challenging for those of this persuasion. It is very hard for most Democrats to articulate what advanced AI would cause them to do differently beyond the policy agenda they’ve had for a long time. This is because outside of national security (a bipartisan persuasion), they have no answer to this question, because they do not take advanced AI seriously. Whereas Bannon, say what you will about him, can articulate a great many things America should do differently because of AI.

The result of all this is that the left is largely irrelevant on most matters related to AI, outside of important but narrow issues like SB 53. Even this bill though lacks a good “elevator pitch” to the American taxpayer. It’s a marginal accretion of technocratic regulation, not a vision (this isn’t a criticism of 53, just a description of it).

Recently I was chatting with a Democratic elected official, and he said “the problem [the Democratic Party] has is nobody knows where we stand on AI.” I replied that the problem is that nobody *careswhere they stand.

Dave Kasten: I don’t think it’s quite as bad as you write, though I wouldn’t disagree that there are many folks on the left who self-avowedly are doing exactly what you say.

One other factor that I think is relevant is that the Obama-era and onward Democratic party is very lawyer-led in its policy elites, and legal writing is close to a pessimal case for LLM hallucination (it’s an extremely regular field of text syntactically, but semantically very diverse), so they greatly underestimate AI progress.

Whenever voices on the left join discussions about AI, it is clear they mostly do not take AGI seriously. They are focused mainly on the impact of mundane AI on the set of concerns and interests they already had, combined with amorphous fear.

I included Mr. Gunn’s comment because it reinforces the point. The left is of course working on various things, but when the context is AI and the list of areas starts with affordable housing (not even ‘make housing affordable’ rather ‘affordable housing’) and clean energy, you have lost the plot.

If you’re in mechanistic interpretability, they say, pivot to pragmatic interpretability.

That means directly trying to solve problems ‘on the critical path to AGI going well,’ as in each with a concrete specific goal that functions as a North Star.

I note that whether or not one agrees with the pivot, talking this way about what they are doing and why is very good.

Dan Hendrycks: I’ve been saying mechanistic interpretability is misguided from the start. Glad people are coming around many years later.

I’m also thankful to @NeelNanda5 for writing this. Usually people just quietly pivot.

They explain this pivot is because:

-

Models are now far more interesting and offer practical tasks to do.

-

Pragmatic problems are often the comparative advantage of frontier labs.

-

The more ambitious mechanistic interpretability research made limited progress.

-

The useful progress has come from more practical limited strategies.

-

You need proxy tasks to know if you are making progress.

-

Meh, these limited solutions still kind of work, right?

DeepMind saying ‘we need to pivot away from mechanistic interpretability because it wasn’t giving us enough reward signal’ is a rather bad blackpill. A lot of the pitch of mechanistic interpretability was that it gave you a reward signal, you could show to yourself and others you did a thing, whereas many other alignment strategies didn’t offer this.

If even that level isn’t enough, and only practical proxy tasks are good enough, our range of action is very limited and we’re hoping that the things that solve proxy tasks happen to be the things that help us learn the big things. We’d basically be trying to solve mundane practical alignment in the hopes that this generalizes one way or another. I’m not sure why we should presume that. And it’s very easy to see how this could be a way to fool ourselves.

Indeed, I have long thought that mechanistic interpretability was overinvested relative to other alignment efforts (but underinvested in absolute terms) exactly because it was relatively easy to measure and feel like you were making progress.

I don’t love things like a section heading ‘curiosity is a double-edged sword,’ the explanation being that you can get nerd sniped and you need (again) proxy tasks as a validation step. In general they want to time-box and quantify basically everything?

I also think that ‘was it ‘scheming’ or just ‘confused’,’ an example of a question Neel Nanda points to, is a remarkably confused question, the boundary is a lot less solid than it appears, and in general attempts to put ‘scheming’ or ‘deception’ or similar in a distinct box misunderstand how all the related things work.

OpenAI starts a new Alignment Research blog for lightweight findings. Early posts include an overview of development of the Codex code reviewer.

Naomi Bashkansky (OpenAI): Fun story! Upon joining OpenAI in January, I saw more safety research happening than I expected. But much of that research sat in internal docs & slides, with no obvious external outlet for it.

Idea: what if Alignment had a blog, where we published shorter, more frequent pieces?

There’s also a first post called ‘Hello World.’ Here it is (bold mine):

At OpenAI, we research how we can safely[1] develop and deploy increasingly capable AI, and in particular AI capable of recursive self-improvement (RSI).

We want these systems to consistently follow human intent in complex, real-world scenarios and adversarial conditions, avoid catastrophic behavior, and remain controllable, auditable, and aligned with human values. We want more of that work to be shared with the broader research community. This blog is an experiment in sharing our work more frequently and earlier in the research lifecycle: think of it as a lab notebook.

This blog is meant for ideas that are too early, too narrow, or too fast-moving for a full paper. Here, we aim to share work that otherwise wouldn’t have been published, including ideas we are still exploring ourselves. If something looks promising, we’d rather put it out early and get feedback, because open dialog is a critical step in pressure testing, refining, and improving scientific work. We’ll publish sketches, discussions, and notes here, as well as more technical pieces less suited for the main blog.

Our posts won’t be full research papers, but they will be rigorous research contributions and will strive for technical soundness and clarity. These posts are written by researchers, for researchers, and we hope you find them interesting.

While OpenAI has dedicated research teams for alignment and safety, alignment and safety research is the shared work of many teams. You can expect posts from people across the company who are thinking about how to make AI systems safe and aligned.

For a future with safe and broadly beneficial AGI, the entire field needs to make progress together. This blog is a small step toward making that happen.

[1] As we’ve stated before:

OpenAI is deeply committed to safety, which we think of as the practice of enabling AI’s positive impacts by mitigating the negative ones. Although the potential upsides are enormous, we treat the risks of superintelligent systems as potentially catastrophic and believe that empirically studying safety and alignment can help global decisions, like whether the whole field should slow development to more carefully study these systems as we get closer to systems capable of recursive self-improvement. Obviously, no one should deploy superintelligent systems without being able to robustly align and control them, and this requires more technical work.

The part where they are starting the blog, sharing their insights and being transparent? That part is great. This is The Way.

And yes, we all want to enable AI’s positive impacts by mitigating the negative ones, and hopefully we all agree that ‘being able to robustly align and control’ superintelligent systems is going to ‘require more technical work.’

I do still notice the part about the explicit topline goal of RSI towards superintelligence.

Steven Adler: I am glad that OpenAI is being this clear about its intentions.

I am very not glad that this is the world we find ourselves in:

Recursive self-improvement – AI that makes itself progressively smarter – makes the safety challenges a heck of a lot harder.Kudos to the general idea from OpenAI, of sharing more of their alignment research quickly and openly.

Miles Brundage: I’m all for transparency but my primary thought here is just to remind folks that AI companies have not explained what this means, why it’s good, or why the higher safety risks are justified – recall OAI’s mission is ensuring *AGIis safe + beneficial

(even AGI was never super precisely defined, then the goal was superintelligence, now it’s also self-improvement?)

Many in the Bay Area think that there’s a lot of skepticism of AI companies in the press etc. but really that’s just on a few specific topics (bubble claims, child safety)…

There’s no real public accountability for things like “explaining your mission clearly/consistently.”

This is not a comment on the alignment blog thing, or the authors, which seems like it’s probably a useful initiative + came from a good motivation. More of a general comment/using this as a specific example, + this point is very much also true of other companies.

Anthropic neglected to affirm in the Opus 4.5 model card that they were careful not to train against the Chain-Of-Thought, but after this was pointed out they did so affirm.

Sam Bowman: This was an omission. The language you quote about Haiku and Sonnet 4.5 is also true of Opus 4.5.

In general I agree with Ryan Greenblatt’s general observation on the Anthropic model card that the evals they used were inadequate and their ultimate release decision was fine in practice this time but made mostly on vibes and that can’t continue.

Ryan Greenblatt: My vibe here is “come on we can do better” and also “we’re not going to have much/any assurance, we’re failing easy mode”. TBC, it’s plausible Anthropic should half-ass current evals while being clear about the limited assurance.

Louder and once more for the people in the back: Evan Hubinger of Anthropic reminds as that Alignment remains a hard, unsolved problem, even to someone who (to my eyes, and even more so to the eyes of Eliezer Yudkowsky as seen inevitably in the comments).

Steven Adler writes about five ways AI can tell you’re testing it. The AI can either know it is being tested because there is something that doesn’t line up with the real world. Or it can go the other way, and act as if it is being tested barring a signal or other strong proof that it is not. The AIs wisely have evaluation paranoia, so making even one mistake can be enough, and in some cases there is no good solution because the AI is checking for impact on the real world.

OpenAI trains a version of GPT-5-Thinking to ‘confess’ when it fails to follow directions or does other undesirable things. As everyone who uses LLMs knows, when you ask the model whether it just did something wrong, it will often know what it did. OpenAI explains that LLMs are optimizing for many things at once, so you can check how that did at matching the current explicit objective and go from there.

The best part is that the model seemed able to distinguish between scoremaxxing on the main output, including manipulating the judge, versus still confessing what it did. That’s great, but also the kind of thing we shouldn’t expect to last.

There is little degradation on task performance if they do this training via RLAIF.

Assuming this is neutral or positive for performance otherwise, this seems like a promising thing to try as part of defense in depth. I can see how there could be reasons driving the model to do something you don’t want, but it still being happy to notice and tell you about that. I wouldn’t count on this when the model is scheming ‘for real’ but then presumably everyone realized that already.

Here are some additional thoughts from FleetingBits.

Bits also points to the second half of the paper, that models learn to fool verifiers to the extent that fooling the verifier is easier than solving the problem. As in, if fooling the verifier is the right answer then it will learn this and generalize. That’s a mirror of the Anthropic finding that if you make reward hacks the right answer then it will learn this and generalize. Same principle.

As a general strategy, ‘get the AI to confess’ relies on being able to rely on the confession. That’s a problem, since you can never rely on anything subject to any form of selection pressure, unless you know the selection is for exactly the thing you want, and the stronger the models get the worse this divergence is going to get.