Sovereignty has mattered since the invention of the nation state—defined by borders, laws, and taxes that apply within and without. While many have tried to define it, the core idea remains: nations or jurisdictions seek to stay in control, usually to the benefit of those within their borders.

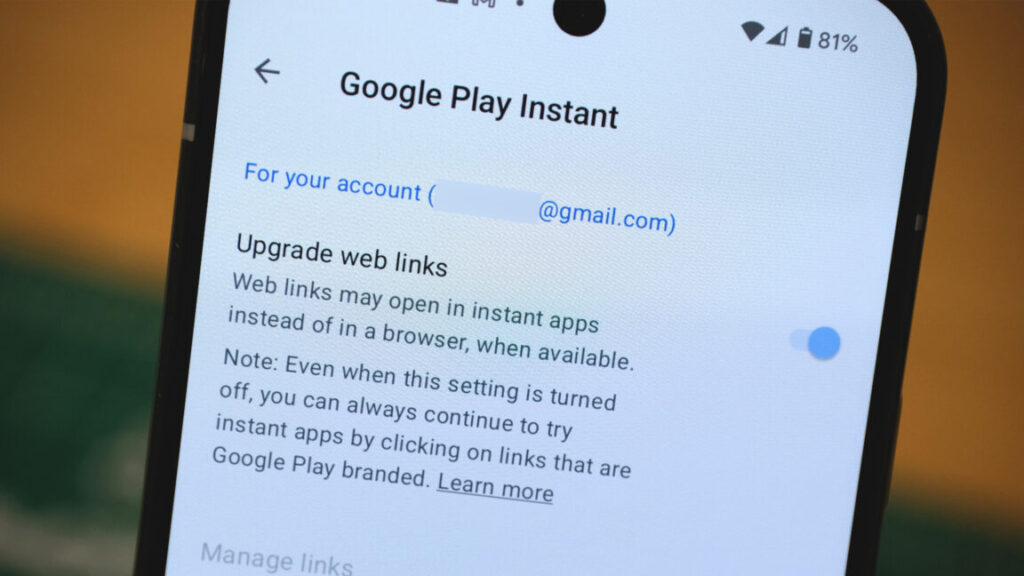

Digital sovereignty is a relatively new concept, also difficult to define but straightforward to understand. Data and applications don’t understand borders unless they are specified in policy terms, as coded into the infrastructure.

The World Wide Web had no such restrictions at its inception. Communitarian groups such as the Electronic Frontier Foundation, service providers and hyperscalers, non-profits and businesses all embraced a model that suggested data would look after itself.

But data won’t look after itself, for several reasons. First, data is massively out of control. We generate more of it all the time, and for at least two or three decades (according to historical surveys I’ve run), most organizations haven’t fully understood their data assets. This creates inefficiency and risk—not least, widespread vulnerability to cyberattack.

Risk is probability times impact—and right now, the probabilities have shot up. Invasions, tariffs, political tensions, and more have brought new urgency. This time last year, the idea of switching off another country’s IT systems was not on the radar. Now we’re seeing it happen—including the U.S. government blocking access to services overseas.

Digital sovereignty isn’t just a European concern, though it is often framed as such. In South America for example, I am told that sovereignty is leading conversations with hyperscalers; in African countries, it is being stipulated in supplier agreements. Many jurisdictions are watching, assessing, and reviewing their stance on digital sovereignty.

As the adage goes: a crisis is a problem with no time left to solve it. Digital sovereignty was a problem in waiting—but now it’s urgent. It’s gone from being an abstract ‘right to sovereignty’ to becoming a clear and present issue, in government thinking, corporate risk and how we architect and operate our computer systems.

What does the digital sovereignty landscape look like today?

Much has changed since this time last year. Unknowns remain, but much of what was unclear this time last year is now starting to solidify. Terminology is clearer – for example talking about classification and localisation rather than generic concepts.

We’re seeing a shift from theory to practice. Governments and organizations are putting policies in place that simply didn’t exist before. For example, some countries are seeing “in-country” as a primary goal, whereas others (the UK included) are adopting a risk-based approach based on trusted locales.

We’re also seeing a shift in risk priorities. From a risk standpoint, the classic triad of confidentiality, integrity, and availability are at the heart of the digital sovereignty conversation. Historically, the focus has been much more on confidentiality, driven by concerns about the US Cloud Act: essentially, can foreign governments see my data?

This year however, availability is rising in prominence, due to geopolitics and very real concerns about data accessibility in third countries. Integrity is being talked about less from a sovereignty perspective, but is no less important as a cybercrime target—ransomware and fraud being two clear and present risks.

Thinking more broadly, digital sovereignty is not just about data, or even intellectual property, but also the brain drain. Countries don’t want all their brightest young technologists leaving university only to end up in California or some other, more attractive country. They want to keep talent at home and innovate locally, to the benefit of their own GDP.

How Are Cloud Providers Responding?

Hyperscalers are playing catch-up, still looking for ways to satisfy the letter of the law whilst ignoring (in the French sense) its spirit. It’s not enough for Microsoft or AWS to say they will do everything they can to protect a jurisdiction’s data, if they are already legally obliged to do the opposite. Legislation, in this case US legislation, calls the shots—and we all know just how fragile this is right now.

We see hyperscaler progress where they offer technology to be locally managed by a third party, rather than themselves. For example, Google’s partnership with Thales, or Microsoft with Orange, both in France (Microsoft has similar in Germany). However, these are point solutions, not part of a general standard. Meanwhile, AWS’ recent announcement about creating a local entity doesn’t solve for the problem of US over-reach, which remains a core issue.

Non-hyperscaler providers and software vendors have an increasingly significant play: Oracle and HPE offer solutions that can be deployed and managed locally for example; Broadcom/VMware and Red Hat provide technologies that locally situated, private cloud providers can host. Digital sovereignty is thus a catalyst for a redistribution of “cloud spend” across a broader pool of players.

What Can Enterprise Organizations Do About It?

First, see digital sovereignty as a core element of data and application strategy. For a nation, sovereignty means having solid borders, control over IP, GDP, and so on. That’s the goal for corporations as well—control, self-determination, and resilience.

If sovereignty isn’t seen as an element of strategy, it gets pushed down into the implementation layer, leading to inefficient architectures and duplicated effort. Far better to decide up front what data, applications and processes need to be treated as sovereign, and defining an architecture to support that.

This sets the scene for making informed provisioning decisions. Your organization may have made some big bets on key vendors or hyperscalers, but multi-platform thinking increasingly dominates: multiple public and private cloud providers, with integrated operations and management. Sovereign cloud becomes one element of a well-structured multi-platform architecture.

It is not cost-neutral to deliver on sovereignty, but the overall business value should be tangible. A sovereignty initiative should bring clear advantages, not just for itself, but through the benefits that come with better control, visibility, and efficiency.

Knowing where your data is, understanding which data matters, managing it efficiently so you’re not duplicating or fragmenting it across systems—these are valuable outcomes. In addition, ignoring these questions can lead to non-compliance or be outright illegal. Even if we don’t use terms like ‘sovereignty’, organizations need a handle on their information estate.

Organizations shouldn’t be thinking everything cloud-based needs to be sovereign, but should be building strategies and policies based on data classification, prioritization and risk. Build that picture and you can solve for the highest-priority items first—the data with the strongest classification and greatest risk. That process alone takes care of 80–90% of the problem space, avoiding making sovereignty another problem whilst solving nothing.

Where to start? Look after your own organization first

Sovereignty and systems thinking go hand in hand: it’s all about scope. In enterprise architecture or business design, the biggest mistake is boiling the ocean—trying to solve everything at once.

Instead, focus on your own sovereignty. Worry about your own organization, your own jurisdiction. Know where your own borders are. Understand who your customers are, and what their requirements are. For example, if you’re a manufacturer selling into specific countries—what do those countries require? Solve for that, not for everything else. Don’t try to plan for every possible future scenario.

Focus on what you have, what you’re responsible for, and what you need to address right now. Classify and prioritise your data assets based on real-world risk. Do that, and you’re already more than halfway toward solving digital sovereignty—with all the efficiency, control, and compliance benefits that come with it.

Digital sovereignty isn’t just regulatory, but strategic. Organizations that act now can reduce risk, improve operational clarity, and prepare for a future based on trust, compliance, and resilience.

The post Why is digital sovereignty important right now? appeared first on Gigaom.