Yes, everything online sucks now—but it doesn’t have to

We all feel it: Our once-happy digital spaces have become increasingly less user-friendly and more toxic, cluttered with extras nobody asked for and hardly anybody wants. There’s even a word for it: “enshittification,” named 2023 Word of the Year by the American Dialect Society. The term was coined by tech journalist/science fiction author Cory Doctorow, a longtime advocate of digital rights. Doctorow has spun his analysis of what’s been ailing the tech industry into an eminently readable new book, Enshittification: Why Everything Suddenly Got Worse and What To Do About It.

As Doctorow tells it, he was on vacation in Puerto Rico, staying in a remote cabin nestled in a cloud forest with microwave Internet service—i.e., very bad Internet service, since microwave signals struggle to penetrate through clouds. It was a 90-minute drive to town, but when they tried to consult TripAdvisor for good local places to have dinner one night, they couldn’t get the site to load. “All you would get is the little TripAdvisor logo as an SVG filling your whole tab and nothing else,” Doctorow told Ars. “So I tweeted, ‘Has anyone at TripAdvisor ever been on a trip? This is the most enshittified website I’ve ever used.’”

Initially, he just got a few “haha, that’s a funny word” responses. “It was when I married that to this technical critique, at a moment when things were quite visibly bad to a much larger group of people, that made it take off,” Doctorow said. “I didn’t deliberately set out to do it. I bought a million lottery tickets and one of them won the lottery. It only took two decades.”

Yes, people sometimes express regret to him that the term includes a swear word. To which he responds, “You’re welcome to come up with another word. I’ve tried. ‘Platform decay’ just isn’t as good.” (“Encrapification” and “enpoopification” also lack a certain je ne sais quoi.)

In fact, it’s the sweariness that people love about the word. While that also means his book title inevitably gets bleeped on broadcast radio, “The hosts, in my experience, love getting their engineers to creatively bleep it,” said Doctorow. “They find it funny. It’s good radio, it stands out when every fifth word is ‘enbeepification.’”

People generally use “enshittification” colloquially to mean “the degradation in the quality and experience of online platforms over time.” Doctorow’s definition is more specific, encompassing “why an online service gets worse, how that worsening unfolds,” and how this process spreads to other online services, such that everything is getting worse all at once.

For Doctorow, enshittification is a disease with symptoms, a mechanism, and an epidemiology. It has infected everything from Facebook, Twitter, Amazon, and Google, to Airbnb, dating apps, iPhones, and everything in between. “For me, the fact that there were a lot of platforms that were going through this at the same time is one of the most interesting and important factors in the critique,” he said. “It makes this a structural issue and not a series of individual issues.”

It starts with the creation of a new two-sided online product of high quality, initially offered at a loss to attract users—say, Facebook, to pick an obvious example. Once the users are hooked on the product, the vendor moves to the second stage: degrading the product in some way for the benefit of their business customers. This might include selling advertisements, scraping and/or selling user data, or tweaking algorithms to prioritize content the vendor wishes users to see rather than what those users actually want.

This locks in the business customers, who, in turn, invest heavily in that product, such as media companies that started Facebook pages to promote their published content. Once business customers are locked in, the vendor can degrade those services too (e.g., by de-emphasizing news and links away from Facebook) to maximize profits to shareholders. Voila! The product is now enshittified.

The four horsemen of the shitocalypse

Doctorow identifies four key factors whose erosion has played a role in ushering in an era that he has dubbed the “Enshittocene.” The first is competition (markets), in which companies are motivated to make good products at affordable prices, with good working conditions, because otherwise customers and workers will go to their competitors. The second is government regulation, such as antitrust laws that serve to keep corporate consolidation in check, or levying fines for dishonest practices, which makes it unprofitable to cheat.

The third is interoperability: the inherent flexibility of digital tools, which can play a useful adversarial role. “The fact that enshittification can always be reversed with a dis-enshittifying counter-technology always acted as a brake on the worst impulses of tech companies,” Doctorow writes. Finally, there is labor power; in the case of the tech industry, highly skilled workers were scarce and thus had considerable leverage over employers.

All four factors, when functioning correctly, should serve as constraints to enshittification. However, “One by one each enshittification restraint was eroded until it dissolved, leaving the enshittification impulse unchecked,” Doctorow writes. Any “cure” will require reversing those well-established trends.

But isn’t all this just the nature of capitalism? Doctorow thinks it’s not, arguing that the aforementioned weakening of traditional constraints has resulted in the usual profit-seeking behavior producing very different, enshittified outcomes. “Adam Smith has this famous passage in Wealth of Nations about how it’s not due to the generosity of the baker that we get our bread but to his own self-regard,” said Doctorow. “It’s the fear that you’ll get your bread somewhere else that makes him keep prices low and keep quality high. It’s the fear of his employees leaving that makes him pay them a fair wage. It is the constraints that cause firms to behave better. You don’t have to believe that everything should be a capitalist or a for-profit enterprise to acknowledge that that’s true.”

Our wide-ranging conversation below has been edited for length to highlight the main points of discussion.

Ars Technica: I was intrigued by your choice of framing device, discussing enshittification as a form of contagion.

Cory Doctorow: I’m on a constant search for different framing devices for these complex arguments. I have talked about enshittification in lots of different ways. That frame was one that resonated with people. I’ve been a blogger for a quarter of a century, and instead of keeping notes to myself, I make notes in public, and I write up what I think is important about something that has entered my mind, for better or for worse. The downside is that you’re constantly getting feedback that can be a little overwhelming. The upside is that you’re constantly getting feedback, and if you pay attention, it tells you where to go next, what to double down on.

Another way of organizing this is the Galaxy Brain meme, where the tiny brain is “Oh, this is because consumers shopped wrong.” The medium brain is “This is because VCs are greedy.” The larger brain is “This is because tech bosses are assholes.” But the biggest brain of all is “This is because policymakers created the policy environment where greed can ruin our lives.” There’s probably never going to be just one way to talk about this stuff that lands with everyone. So I like using a variety of approaches. I suck at being on message. I’m not going to do Enshittification for the Soul and Mornings with Enshittifying Maury. I am restless, and my Myers-Briggs type is ADHD, and I want to have a lot of different ways of talking about this stuff.

Ars Technica: One site that hasn’t (yet) succumbed is Wikipedia. What has protected Wikipedia thus far?

Cory Doctorow: Wikipedia is an amazing example of what we at the Electronic Frontier Foundation (EFF) call the public interest Internet. Internet Archive is another one. Most of these public interest Internet services start off as one person’s labor of love, and that person ends up being what we affectionately call the benevolent dictator for life. Very few of these projects have seen the benevolent dictator for life say, “Actually, this is too important for one person to run. I cannot be the keeper of the soul of this project. I am prone to self-deception and folly just like every other person. This needs to belong to its community.” Wikipedia is one of them. The founder, my friend Jimmy Wales, woke up one day and said, “No individual should run Wikipedia. It should be a communal effort.”

There’s a much more durable and thick constraint on the decisions of anyone at Wikipedia to do something bad. For example, Jimmy had this idea that you could use AI in Wikipedia to help people make entries and navigate Wikipedia’s policies, which are daunting. The community evaluated his arguments and decided—not in a reactionary way, but in a really thoughtful way—that this was wrong. Jimmy didn’t get his way. It didn’t rule out something in the future, but that’s not happening now. That’s pretty cool.

Wikipedia is not just governed by a board; it’s also structured as a nonprofit. That doesn’t mean that there’s no way it could go bad. But it’s a source of friction against enshittification. Wikipedia has its entire corpus irrevocably licensed as the most open it can be without actually being in the public domain. Even if someone were to capture Wikipedia, there’s limits on what they could do to it.

There’s also a labor constraint in Wikipedia in that there’s very little that the leadership can do without bringing along a critical mass of a large and diffuse body of volunteers. That cuts against the volunteers working in unison—they’re not represented by a union; it’s hard for them to push back with one voice. But because they’re so diffuse and because there’s no paychecks involved, it’s really hard for management to do bad things. So if there are two people vying for the job of running the Wikimedia Foundation and one of them has got nefarious plans and the other doesn’t, the nefarious plan person, if they’re smart, is going to give it up—because if they try to squeeze Wikipedia, the harder they squeeze, the more it will slip through their grasp.

So these are structural defenses against enshittification of Wikipedia. I don’t know that it was in the mechanism design—I think they just got lucky—but it is a template for how to run such a project. It does raise this question: How do you build the community? But if you have a community of volunteers around a project, it’s a model of how to turn that project over to that community.

Ars Technica: Your case studies naturally include the decay of social media, notably Facebook and the social media site formerly known as Twitter. How might newer social media platforms resist the spiral into “platform decay”?

Cory Doctorow: What you want is a foundation in which people on social media face few switching costs. If the social media is interoperable, if it’s federatable, then it’s much harder for management to make decisions that are antithetical to the interests of users. If they do, users can escape. And it sets up an internal dynamic within the firm, where the people who have good ideas don’t get shouted down by the people who have bad but more profitable ideas, because it makes those bad ideas unprofitable. It creates both short and long-term risks to the bottom line.

There has to be a structure that stops their investors from pressurizing them into doing bad things, that stops them from rationalizing their way into complying. I think there’s this pathology where you start a company, you convince 150 of your friends to risk their kids’ college fund and their mortgage working for you. You make millions of users really happy, and your investors come along and say, “You have to destroy the life of 5 percent of your users with some change.” And you’re like, “Well, I guess the right thing to do here is to sacrifice those 5 percent, keep the other 95 percent happy, and live to fight another day, because I’m a good guy. If I quit over this, they’ll just put a bad guy in who’ll wreck things. I keep those 150 people working. Not only that, I’m kind of a martyr because everyone thinks I’m a dick for doing this. No one understands that I have taken the tough decision.”

I think that’s a common pattern among people who, in fact, are quite ethical but are also capable of rationalizing their way into bad things. I am very capable of rationalizing my way into bad things. This is not an indictment of someone’s character. But it’s why, before you go on a diet, you throw away the Oreos. It’s why you bind yourself to what behavioral economists call “Ulysses pacts”: You tie yourself to the mast before you go into the sea of sirens, not because you’re weak but because you’re strong enough now to know that you’ll be weak in the future.

I have what I would call the epistemic humility to say that I don’t know what makes a good social media network, but I do know what makes it so that when they go bad, you’re not stuck there. You and I might want totally different things out of our social media experience, but I think that you should 100 percent have the right to go somewhere else without losing anything. The easier it is for you to go without losing something, the better it is for all of us.

My dream is a social media universe where knowing what network someone is using is just a weird curiosity. It’d be like knowing which cell phone carrier your friend is using when you give them a call. It should just not matter. There might be regional or technical reasons to use one network or another, but it shouldn’t matter to anyone other than the user what network they’re using. A social media platform where it’s always easier for users to leave is much more future-proof and much more effective than trying to design characteristics of good social media.

Ars Technica: How might this work in practice?

Cory Doctorow: I think you just need a protocol. This is [Mike] Masnick’s point: protocols, not products. We don’t need a universal app to make email work. We don’t need a universal app to make the web work. I always think about this in the context of administrable regulation. Making a rule that says your social media network must be good for people to use and must not harm their mental health is impossible. The fact intensivity of determining whether a platform satisfies that rule makes it a non-starter.

Whereas if you were to say, “OK, you have to support an existing federation protocol, like AT Protocol and Mastodon ActivityPub,” both have ways to port identity from one place to another and have messages auto-forward. This is also in RSS. There’s a permanent redirect directive. You do that, you’re in compliance with the regulation.

Or you have to do something that satisfies the functional requirements of the spec. So it’s not “did you make someone sad in a way that was reckless?” That is a very hard question to adjudicate. Did you satisfy these functional requirements? It’s not easy to answer that, but it’s not impossible. If you want to have our users be able to move to your platform, then you just have to support the spec that we’ve come up with, which satisfies these functional requirements.

We don’t have to have just one protocol. We can have multiple ones. Not everything has to connect to everything else, but everyone who wants to connect should be able to connect to everyone else who wants to connect. That’s end-to-end. End-to-end is not “you are required to listen to everything someone wants to tell you.” It’s that willing parties should be connected when they want to be.

Ars Technica: What about security and privacy protocols like GPG and PGP?

Cory Doctorow: There’s this argument that the reason GPG is so hard to use is that it’s intrinsic; you need a closed system to make it work. But also, until pretty recently, GPG was supported by one part-time guy in Germany who got 30,000 euros a year in donations to work on it, and he was supporting 20 million users. He was primarily interested in making sure the system was secure rather than making it usable. If you were to put Big Tech quantities of money behind improving ease of use for GPG, maybe you decide it’s a dead end because it is a 30-year-old attempt to stick a security layer on top of SMTP. Maybe there’s better ways of doing it. But I doubt that we have reached the apex of GPG usability with one part-time volunteer.

I just think there’s plenty of room there. If you have a pretty good project that is run by a large firm and has had billions of dollars put into it, the most advanced technologists and UI experts working on it, and you’ve got another project that has never been funded and has only had one volunteer on it—I would assume that dedicating resources to that second one would produce pretty substantial dividends, whereas the first one is only going to produce these minor tweaks. How much more usable does iOS get with every iteration?

I don’t know if PGP is the right place to start to make privacy, but I do think that if we can create independence of the security layer from the transport layer, which is what PGP is trying to do, then it wouldn’t matter so much that there is end-to-end encryption in Mastodon DMs or in Bluesky DMs. And again, it doesn’t matter whose SIM is in your phone, so it just shouldn’t matter which platform you’re using so long as it’s secure and reliably delivered end-to-end.

Ars Technica: These days, I’m almost contractually required to ask about AI. There’s no escaping it. But it’s certainly part of the ongoing enshittification.

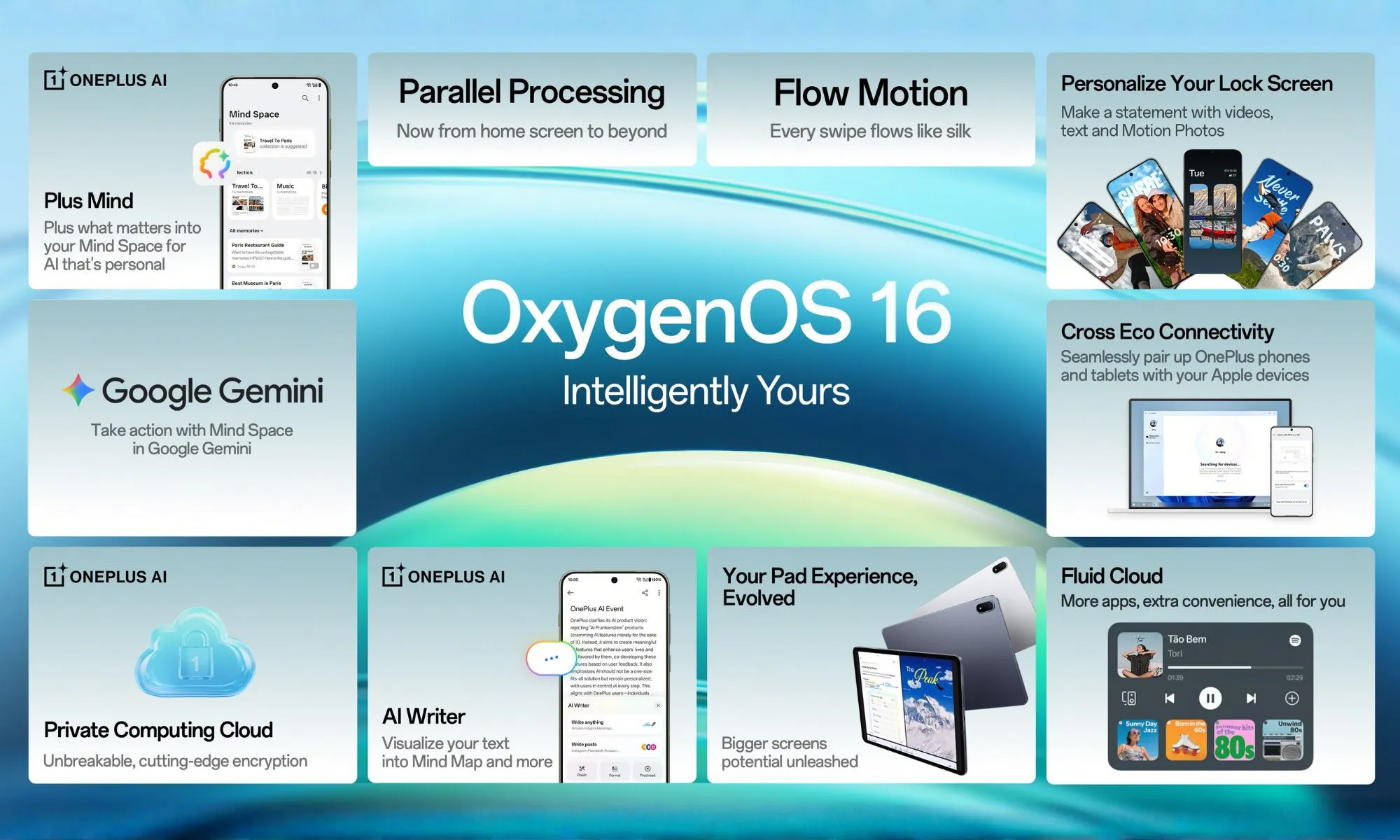

Cory Doctorow: I agree. Again, the companies are too big to care. They know you’re locked in, and the things that make enshittification possible—like remote software updating, ongoing analytics of use of devices—they allow for the most annoying AI dysfunction. I call it the fat-finger economy, where you have someone who works in a company on a product team, and their KPI, and therefore their bonus and compensation, is tied to getting you to use AI a certain number of times. So they just look at the analytics for the app and they ask, “What button gets pushed the most often? Let’s move that button somewhere else and make an AI summoning button.”

They’re just gaming a metric. It’s causing significant across-the-board regressions in the quality of the product, and I don’t think it’s justified by people who then discover a new use for the AI. That’s a paternalistic justification. The user doesn’t know what they want until you show it to them: “Oh, if I trick you into using it and you keep using it, then I have actually done you a favor.” I don’t think that’s happening. I don’t think people are like, “Oh, rather than press reply to a message and then type a message, I can instead have this interaction with an AI about how to send someone a message about takeout for dinner tonight.” I think people are like, “That was terrible. I regret having tapped it.”

The speech-to-text is unusable now. I flatter myself that my spoken and written communication is not statistically average. The things that make it me and that make it worth having, as opposed to just a series of multiple-choice answers, is all the ways in which it diverges from statistical averages. Back when the model was stupider, when it gave up sooner if it didn’t recognize what word it might be and just transcribed what it thought you’d said rather than trying to substitute a more probable word, it was more accurate. Now, what I’m getting are statistically average words that are meaningless.

That elision of nuance and detail is characteristic of what makes AI products bad. There is a bunch of stuff that AI is good at that I’m excited about, and I think a lot of it is going to survive the bubble popping. But I fear that we’re not planning for that. I fear what we’re doing is taking workers whose jobs are meaningful, replacing them with AIs that can’t do their jobs, and then those AIs are going to go away and we’ll have nothing. That’s my concern.

Ars Technica: You prescribe a “cure” for enshittification, but in such a polarized political environment, do we even have the collective will to implement the necessary policies?

Cory Doctorow: The good news is also the bad news, which is that this doesn’t just affect tech. Take labor power. There are a lot of tech workers who are looking at the way their bosses treat the workers they’re not afraid of—Amazon warehouse workers and drivers, Chinese assembly line manufacturers for iPhones—and realizing, “Oh, wait, when my boss stops being afraid of me, this is how he’s going to treat me.” Mark Zuckerberg stopped going to those all-hands town hall meetings with the engineering staff. He’s not pretending that you are his peers anymore. He doesn’t need to; he’s got a critical mass of unemployed workers he can tap into. I think a lot of Googlers figured this out after the 12,000-person layoffs. Tech workers are realizing they missed an opportunity, that they’re going to have to play catch-up, and that the only way to get there is by solidarity with other kinds of workers.

The same goes for competition. There’s a bunch of people who care about media, who are watching Warner about to swallow Paramount and who are saying, “Oh, this is bad. We need antitrust enforcement here.” When we had a functional antitrust system for the last four years, we saw a bunch of telecoms mergers stopped because once you start enforcing antitrust, it’s like eating Pringles. You just can’t stop. You embolden a lot of people to start thinking about market structure as a source of either good or bad policy. The real thing that happened with [former FTC chair] Lina Khan doing all that merger scrutiny was that people just stopped planning mergers.

There are a lot of people who benefit from this. It’s not just tech workers or tech users; it’s not just media users. Hospital consolidation, pharmaceutical consolidation, has a lot of people who are very concerned about it. Mark Cuban is freaking out about pharmacy benefit manager consolidation and vertical integration with HMOs, as he should be. I don’t think that we’re just asking the anti-enshittification world to carry this weight.

Same with the other factors. The best progress we’ve seen on interoperability has been through right-to-repair. It hasn’t been through people who care about social media interoperability. One of the first really good state-level right-to-repair bills was the one that [Governor] Jared Polis signed in Colorado for powered wheelchairs. Those people have a story that is much more salient to normies.

“What do you mean you spent six months in bed because there’s only two powered wheelchair manufacturers and your chair broke and you weren’t allowed to get it fixed by a third party?” And they’ve slashed their repair department, so it takes six months for someone to show up and fix your chair. So you had bed sores and pneumonia because you couldn’t get your chair fixed. This is bullshit.

So the coalitions are quite large. The thing that all of those forces share—interoperability, labor power, regulation, and competition—is that they’re all downstream of corporate consolidation and wealth inequality. Figuring out how to bring all of those different voices together, that’s how we resolve this. In many ways, the enshittification analysis and remedy are a human factors and security approach to designing an enshittification-resistant Internet. It’s about understanding this as a red team, blue team exercise. How do we challenge the status quo that we have now, and how do we defend the status quo that we want?

Anything that can’t go on forever eventually stops. That is the first law of finance, Stein’s law. We are reaching multiple breaking points, and the question is whether we reach things like breaking points for the climate and for our political system before we reach breaking points for the forces that would rescue those from permanent destruction.

Jennifer is a senior writer at Ars Technica with a particular focus on where science meets culture, covering everything from physics and related interdisciplinary topics to her favorite films and TV series. Jennifer lives in Baltimore with her spouse, physicist Sean M. Carroll, and their two cats, Ariel and Caliban.