Events continue to be fast and furious.

This was the first actually stressful week of the year.

That was mostly due to issues around Anthropic and the Department of War. This is the big event the news is not picking up, with the Pentagon on the verge of invoking one of two extreme options that would both be extremely damaging to national security and that would potentially endanger our Republic. The post has details, and the first section here has a few additional notes.

Also stressful for many was the impact of Citrini’s AI scenario, where it is 2028 and AI agents are sufficiently capable to disrupt the whole economy but this turns out to be bearish for stocks. People freaked out enough about this that it seems to have directly impacted the stock market, although most stocks other than the credit card companies seem to have bounced back. Of course, in a scenario like that we probably all die and definitely the world transforms, and you have bigger things to worry about than the stock market, but the post does raise a lot of very good detailed points, so I spent a post going over that.

I also got to finally review Claude Sonnet 4.6. It’s a good model for its price and size and may have a place in your portfolio of models, but for most purposes you will still want to use Claude Opus.

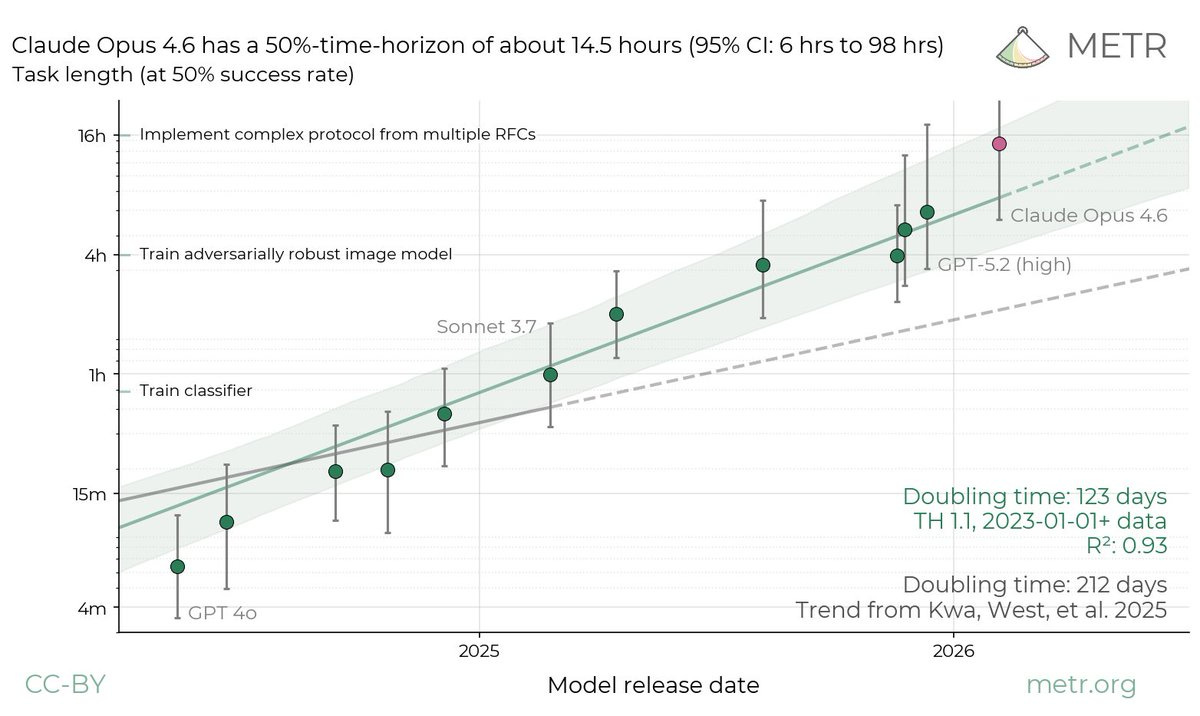

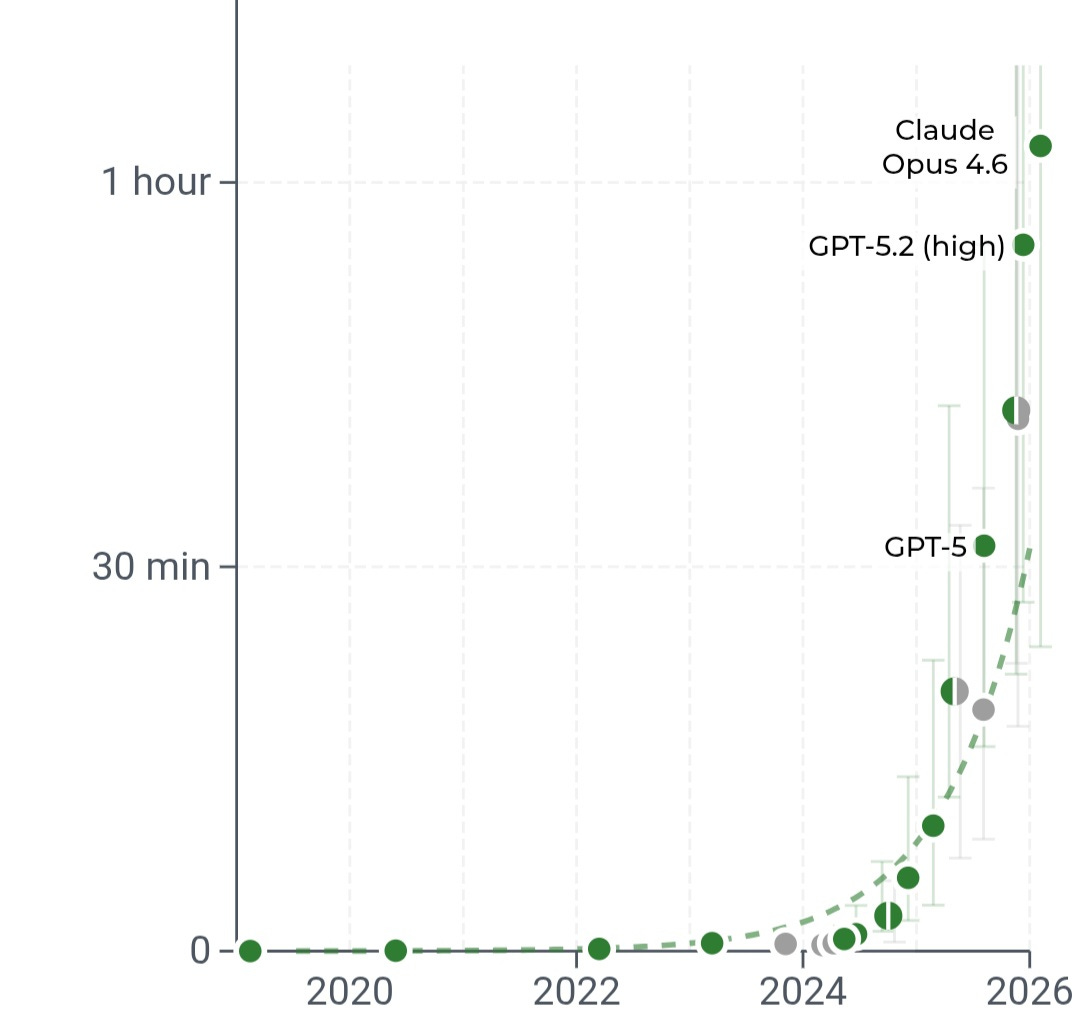

Claude Opus 4.6 had a time of 14.5 hours on the METR graph of capabilities, showing that things are escalating faster than we expected on that front as well.

This week’s post also covers the AI Summit in India, Dean Ball on self-improvement, extensive coverage of Altman’s interview at the Summit, several other releases and a lot more.

I would have split this up, but we are still behind, with the following posts still due in the future:

- Grok 4.20, which is a disappointment.
- Gemini 3.1 Pro, which is an improvement but landed with a relative whimper.
- Claude Code and Codex #5, with lots of cool agent related stuff.
- Anthropic’s RSP 3.0, both its headline changes and the content details of their plans and their 100+ page risk report.

(Reader advisory note: I quote some people at length because no one ever clicks links, but you are free to skip over long quote boxes. I’m trying to raise chance of reading the full quote to ~25% from ~1%, not get it to ~90%.)

-

Anthropic and the Department of War. Let’s have this not mean war.

-

Language Models Offer Mundane Utility. Join the vacuum army today.

-

Language Models Don’t Offer Mundane Utility. Out with the old code.

-

Huh, Upgrades. Claude in Excel MCP, Claude in PowerPoint, Claude web search.

-

On Your Marks. Claude Opus 4.6 scores a METR graph time of 14.5 hours. Wow.

-

Choose Your Fighter. Gemini Flash is very good if you feel the need for speed.

-

Deepfaketown and Botpocalypse Soon. AI should never impersonate a human.

-

Head In The Sand. It’s not only the summit, the elites are still in denial on AI.

-

Fun With Media Generation. One might call it an actually good AI short film.

-

A Young Lady’s Illustrated Primer. The AI Fluency Index.

-

You Drive Me Crazy. You can’t say that OpenAI wasn’t warned.

-

They Took Our Jobs. A lot of this is priced in at this point. How will we handle it?

-

The Art of the Jailbreak. Stealing Mexican government data.

-

Get Involved. Anthropic Social Impacts, Brundage, consciousness, documentaries.

-

Introducing. Qwen 3.5 Medium Models, Claude Code security, Meta face rec.

-

In Other AI News. Opus 3 to be available indefinitely, and many other items.

-

The India Summit. One summit for the labs, one summit for the global elites.

-

Show Me the Money. MatX raises from the right people.

-

Quiet Speculations. Directionally correct and correct can be very different.

-

The Quest for Sane Regulations. Finding what stewards of liberty are left to us.

-

Chip City. Chip location verification plans, and who actually uses water.

-

The Mask Comes Off. OpenAI, I’m telling you, you gotta fire those lawyers.

-

The Week in Audio. Askell and Altman.

-

Quickly, There’s No Time. Altman tries to warn us.

-

Dean Ball On Recursive Self-Improvement. An excellent two-part essay.

-

Rhetorical Innovation. It’s time to stop mincing words. Well, it always is.

-

Aligning a Smarter Than Human Intelligence is Difficult. Persona selection.

-

The Homework Assignment Is To Choose The Assignment. Who does the work?

-

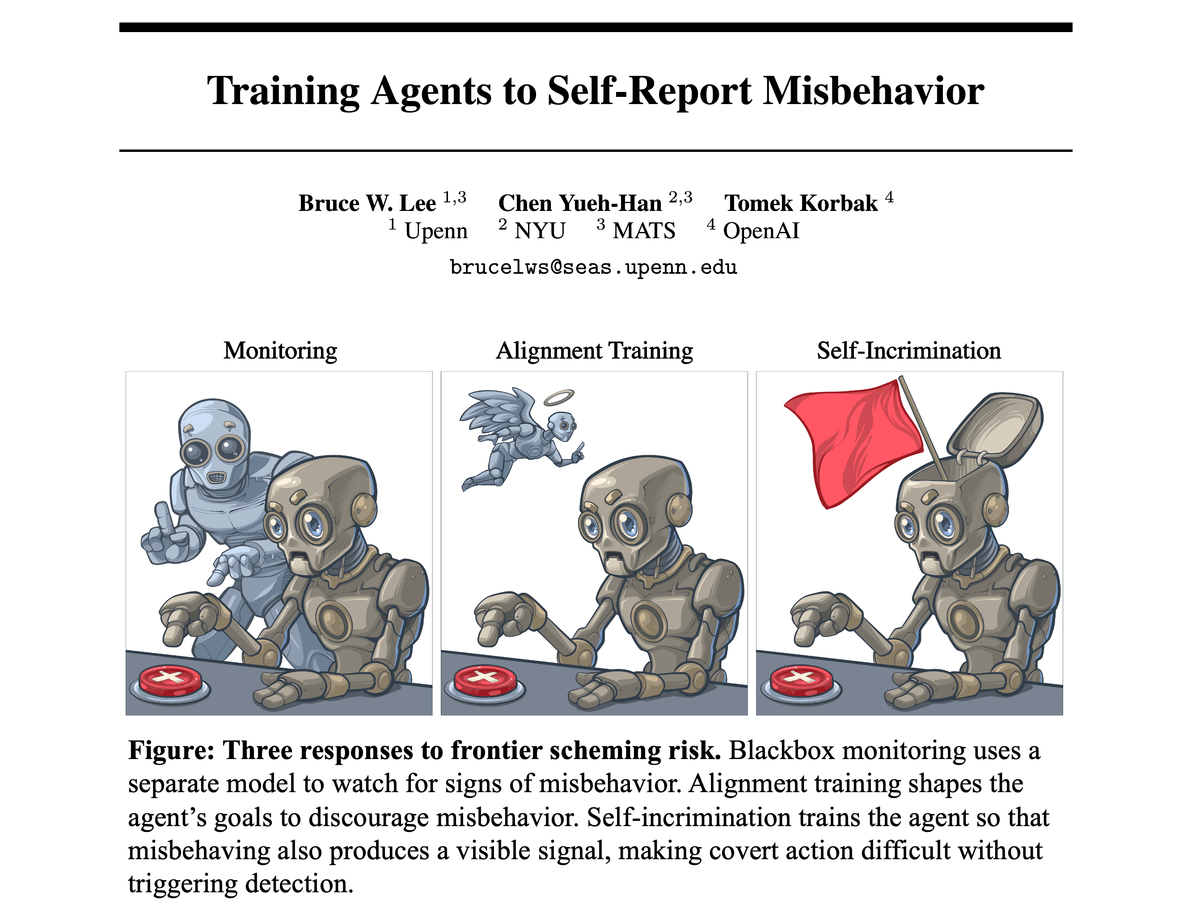

Agent Foundations. It’s a good research program, sir.

-

Autonomous Killer Robots. The hard part is making them autonomous.

-

People Really Hate AI. They’re only going to hate it more.

-

People Are Worried About AI Killing Everyone. Noah Smith.

-

Other People Are Not As Worried About AI Killing Everyone. Nick Land for xAI?

-

The Lighter Side. Fingers crossed.

The Pentagon has asked two major defense contractors to provide an assessment of their reliance on Anthropic’s Claude.

Axios calls this a ‘first step towards blacklisting Anthropic.’

I would instead call this the start of a common sense first step you would take long before you actively threaten to slap a ‘supply chain risk’ designation on Anthropic. It indicates that the Pentagon has not done the investigation of ‘exactly how big of a cluster\*\*\*\* would this be’ and I highly encourage them to check.

Divyansh Kaushik: Are we seriously going to label Anthropic a supply chain risk but are totally fine with Alibaba/Qwen, Deepseek, Baidu, etc? What are we doing here?

An excellent question. Certainly we can agree that Alibaba, Qwen, Deepseek or Baidu are all much larger ‘supply chain risks’ than Anthropic. So why haven’t we made those designations yet?

The prediction markets on this situation are highly inefficient. Kalshi as of this writing has bounced around to 37% chance of declaration of Supply Chain Risk, versus Polymarket at 22% for very close to the same question.
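To make the size of that gap concrete, here is a minimal sketch of the arithmetic, assuming both markets resolve identically and ignoring fees, spreads, capital lockup and counterparty risk (none of which is safe to ignore in practice). The function name `cross_market_gap` is hypothetical, and the prices are simply the two quoted above.

```python
def cross_market_gap(yes_price_a: float, yes_price_b: float) -> tuple[float, float, float]:
    """Cost, profit and return of buying YES where it is cheap and NO where YES is expensive.

    Assumes (hypothetically) that both contracts pay $1 and resolve the same way.
    """
    low, high = sorted((yes_price_a, yes_price_b))
    cost = low + (1.0 - high)   # YES at the cheaper venue plus NO at the pricier one
    profit = 1.0 - cost         # exactly one leg pays out $1 whatever happens
    return cost, profit, profit / cost


# Kalshi at 37% and Polymarket at 22%, as quoted in the text.
cost, profit, ret = cross_market_gap(0.37, 0.22)
print(f"cost ${cost:.2f}, profit ${profit:.2f}, return {ret:.1%}")
```

Under those assumptions the pair costs 85 cents and pays a dollar either way. The questions are only very close to the same, so this is an illustration of the mispricing rather than a risk-free trade.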

Another way to measure how likely things are to go very wrong is that Kalshi has a market on ‘Will Anthropic release Claude 5 this year?’ which is basically a proxy for ‘does the American government destroy Anthropic?’ and Polymarket has one on whether it will be released by April 30. The Kalshi market is down from 95% (which you should read as ~100%) to 90%. Polymarket’s market, with its shorter timeline, is at 38%.

Scott Alexander on the Pentagon threatening Anthropic.

Steven Adler calls this ‘The dawning of authoritarian AI.’

Nate Soares points out ‘no one stops you from saving the world’ is one of the requirements if we are going to get out of this alive. Even if the problems we face turn out to be super solvable, you have to be allowed to solve them.

Ted Lieu emphasizes the need for humans to always be in the loop on nuclear weapons, which is why Congress passed a law to that effect. This is The Way. The rules of engagement on this must be set by Congress. At least for now, fully autonomous weapons without a human in the kill chain are not ready, even if they are conventional.

This point was driven home rather forcefully by AIs from OpenAI, Google and Anthropic opting to use at least tactical nuclear weapons 95% of the time in simulated escalatory war games against each other, and having accidents in fog of war 86% of the time. None of them ever surrendered. Wouldn’t you prefer a good game of chess? This is much more aggressive than the level of use by expert humans in other similar simulations (this one is complex enough that humans have never run this exact setup). And you want to force them to make these models less hesitant than that?

CSET Georgetown offers a primer on the Defense Production Act and making labs produce AI models. The language seems genuinely ambiguous, even without getting into whether such an application would be constitutional. We don’t know the answer because no one has ever tried to say no before, but the government has never tried to forcibly order something like this before, either. I would highly recommend to the Pentagon that, even if they do have the power to compel otherwise, they only take customized AIs from companies that actively want to provide them.

Have Claude reverse engineer the API of your DJI Romo vacuum so you can guide it with a PS5 controller, and accidentally take control of 7000 robot vacuums. Good news is Sammy Azdoufal was a righteous dude so he reported it and it got fixed two days later, but how many more such things are lying around?

By Default: the S in IoT stands for “security”

Rafe Rosner-Uddin at Financial Times reports that Amazon’s coding bot was responsible for the two recent AWS outages, although neither was that large.

Rafe Rosner-Uddin: The people said the agentic tool, which can take autonomous actions on behalf of users, determined that the best course of action was to “delete and recreate the environment”.

… Multiple Amazon employees told the FT that this was the second occasion in recent months in which one of the group’s AI tools had been at the centre of a service disruption.

… Amazon said it was a “coincidence that AI tools were involved” and that “the same issue could occur with any developer tool or manual action”.

Uh huh. This was from their AI tool Kiro, and they’re blaming user error for approving the actions. Should have used Claude Code.

If your AI thinks you’re an asshole, yes, it’s going to respond accordingly, and you’re going to have a substantially worse time.

Dean W. Ball: I wonder, if your Claude instance thinks you’re an asshole, if it would recommend different things to you than it would for someone it liked. Like would it refrain from suggesting the low-key-but-amazing restaurant, or whatever else?

Of course this applies to any AI. I only use Claude as my example because Anthropic seems by far the likeliest AI company to be like, “uh well yeah I guess Claude doesn’t like you that much, not our problem 🤷♂️” assuming they feel confident in the model training

Claude’s API web search now writes and executes code to filter and process search results.

Claude in Excel now supports MCP connectors.

Claude in PowerPoint now available on the Pro plan. Google suite versions when?

Claude Opus 4.6 breaks the METR graph with a score of 14.5 hours. Don’t take the exact number too seriously, as the result is highly noisy. GPT-5.3-Codex came in at 6.5 hours. Again the results are noisy, and METR notes that there may have been scaffolding issues there hurting performance. Codex is more highly optimized to a particular scaffold than Opus.

METR: We estimate that Claude Opus 4.6 has a 50%-time-horizon of around 14.5 hours (95% CI of 6 hrs to 98 hrs) on software tasks. While this is the highest point estimate we’ve reported, this measurement is extremely noisy because our current task suite is nearly saturated.

Near-saturation of the task suite can have unintuitive consequences for the time-horizon estimates. For example, the upper bound of the 95% CI is much longer than any of the tasks used for the measurement.

We are working on updated methods to better track state-of-the-art AI capabilities. However, these are still in development so they don’t address our immediate measurement gap. In the meantime, we advise caution in interpreting and comparing our recent time-horizon measurements.

david rein (METR): Seems like a lot of people are taking this as gospel—when we say the measurement is extremely noisy, we really mean it.

Concretely, if the task distribution we’re using here was just a tiny bit different, we could’ve measured a time horizon of 8 hours, or 20 hours.

Oscar Sykes: huge green flag for METR that the best pushback on their task horizon work consistently comes from METR themselves

This mostly invalidates a lot of predictions, such as Ajeya Cotra a few months ago predicting 24 hour time horizons only at EOY 2026. Progress via this metric now looks like doubling every 3-4 months at most or even super-exponential.

Once again, the ‘it’s a sigmoid’ people from (checks notes) two weeks ago look deeply silly, although of course it’s always possible they’ll be right next time. In theory you can’t actually tell. Which makes it perfect cope.

xlr8harder points out that you can get dramatic improvements in success if you decrease error rates in multistep problems. If you have 100 steps and a 1% failure rate per step you win 37% of the time. Cut that in half to a 0.5% failure rate and you win 61% of the time, despite ‘only’ improving per-step reliability by 0.5 percentage points.

xlr8harder: I think the point I’m trying to make is that people are acting as if these recent improvements are out of line with earlier improvements, I’m not sure that they are; it’s possible that it’s just that the practical, visible effect is so much more exaggerated when your error rates approach zero at the tasks being measured.

xlr8harder: I’m just saying that I’m seeing a lot of posts reacting at 11/10 and I think it deserves more like an 8. I still think it’s incredible.

Thing is, that’s another way of saying that the 0.5% improvement, halving your error rate, is a big freaking deal in practice. Getting rid of one kind of common error can be a huge unlock in reliability and effectiveness. You can say that makes them unimpressive. Or you can realize that this means that doing easy or relatively unimpressive things now has the potential to have an impressive impact.

That’s the O-Ring model. The last few pieces that lock into place are a huge game. So the new improvements can be ‘not out of line’ but that tells you the line bends upward.
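The compounding is easy to check. As a minimal sketch, assuming every step fails independently at the same rate (a simplification, real tasks are messier), the 37% and 61% figures are what per-step failure rates of 1% and 0.5% give over a chain of 100 steps. The helper name `chain_success` is made up for illustration.

```python
def chain_success(steps: int, failure_rate: float) -> float:
    """Probability that all `steps` independent steps succeed."""
    return (1.0 - failure_rate) ** steps


for rate in (0.01, 0.005):
    # Halving the per-step failure rate far more than halves the chance of overall failure.
    print(f"{rate:.1%} per-step failure, 100 steps: {chain_success(100, rate):.0%} success")
```

The same function shows why longer tasks are so sensitive to small reliability gains: raising `steps` while holding `failure_rate` fixed drives the success probability toward zero quickly.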

I agree that this is not an 11/10 reaction. It’s an 8 at most, because I interpret the huge jump as largely being about the metric.

Note that the 80% success rate graph does not look as dramatic, but same deal applies:

The story is the models, not the METR graph itself, but yes the Serious Defense Thinkers are almost entirely asleep at the wheel on all of this, as they have been for a long time, along with all the other Very Serious People.

Defense Analyses and Research Corporation: This is one of the most important national security stories of the day.

That it will go largely unremarked upon by nearly every Serious Defense Thinker in Washington tells you everything you need to know about the quality of their forecasts of international affairs.

Mark Beall: It’s the same category of professionals who missed Pearl Harbor, 9-11, and nearly every other strategic surprise we’re ever had.

Some politicians are noticing.

Neil Rathi: at a bernie town hall and he just mentioned the metr plot?

Miles Brundage: Politicians are bifurcating into those who have lost the plot on AI and those who cite the METR plot

METR clarifies that their previous study showing a slowdown from AI tools is now obsolete, but they’re having a hard time running a new study. The tools are too good (but also they didn’t pay enough), so no one wanted to suffer through the control arm. The participants from the initial study, where there was a 20% slowdown, now had an 18% speedup, although new participants had a smaller speedup.

This was already two cycles ago, so there’s been more speedup to the speedup.

METR: We started a continuation in August 2025. However, we noticed developers were opting not to participate or submit work. Participants said they did this mostly due to expected productivity loss on “AI disallowed” tasks. Lower pay was also a factor ($50/hr, down from $150).

We believe this selection causes our new data to understate the true speedup. Selection effects are not the only issue we noticed with our experimental design: we also think it has trouble tracking work when participants use agents to parallelize over multiple tasks.

CivBench pits the models against each other in Civilization.

For many repetitive tasks like sorting documents, Gemini Flash is an excellent choice.

grace:

> return flight to nyc gets canceled by snowstorm

> call united

> immediately connected with customer service (rare)

> voice is uncanny, def AI but they gave it a human-like accent

> takes ~20 min to get rebooked (pretty good imo)

> I ask if it’s AI

> “haha no ma’am but I get that a lot”

> I ask it to calculate 228*6647

> it runs the calculation

> ggs

Eliezer Yudkowsky: There are nearly zero good reasons for an AI to ever impersonate a human, and making that universally illegal would be a good test case and trial for civilization’s ability to prevent negative uses of AI.

There is an obvious good reason for an AI to impersonate a human, which is that humans and also other AIs would otherwise refuse to talk to it. You want the AI to make the call for you. But that’s obviously an antisocial defection, if they would have otherwise refused to talk to the AI. So yeah. AIs should not be allowed to impersonate humans. It’s fine to have an AI customer service rep, as long as it admits it is an AI.

If we can’t get past the ‘forever only a mere tool’ perspective, there’s essentially no hope for a reasonable response to even mundane concerns, let alone existential risks.

Nabeel S. Qureshi: If you want to sound smart at East Coast/”elite” conferences go to them and say “AI is just a tool, it’s up to us humans how to use it”. Reliably gets applause, and will probably continue to work until well into recursive self-improvement

I think ‘tool’ implies that, for all X, AI doesn’t do X unless we explicitly ask/make it; this becomes increasingly false (and is already false in coding) as agents become real and we move up layers of abstraction. It’s a misleading picture of where things are going.

judah: i can imagine this working everywhere in the world outside of SF

Nabeel S. Qureshi: yes unfortunately

Here’s an actually good (I think) AI short film (5:20) from Jia Zhangke, made with Seedance 2. A great filmmaker is still required to do actually great things. As with most currently interesting AI films it is about AI.

As promised, here’s the short film Jia Zhangke produced using Seedance 2.0 for Chinese New Year and his take on AI filmmaking


Here’s a ‘short film’ (2:30) from Seedance 2 and Stephane Tranquillin, with the claim it can ‘impress, actually move you.’ It’s definitely technically impressive that we can do this. No, I was not moved, but that’s mostly not on the AI. I do notice that as I watch more videos, various more subtle tells make it instinctively obvious to my brain when a video is AI, giving the same experience as watching an especially realistic cartoon.

Anthropic develops an AI Fluency Index to measure how people learn to use AI. They developed 24 indicators, 11 of which are observable in chat mode. Essentially all the fluency indicators are correlated. They note that when code or other artifacts are created by AI, users are less likely to check the underlying logic or identify missing context.

OpenAI’s system flagged the British Columbia shooter’s ChatGPT messages and a dozen OpenAI employees reviewed and debated them. To be clear, there is no indication that ChatGPT contributed to the shooting, only that OpenAI did not report a potential threat to authorities, and police were aware of the threat by other means.

As Cassie Pritchard points out, once you have a source of information, it’s hard to answer ‘why didn’t you use this?’ but also the threshold for getting reported (as opposed to banned from the platform) for your AI conversations should at minimum be rather extreme. But public pressure likely will go the other way and free speech and privacy are under attack everywhere. Either you enact what Altman has requested, a form of right to privacy for AI conversations, or there will be increasing obligation (at least de facto) to report such incidents, and it will not stop at potential mass shooters.

If AI capabilities continue to advance from here but do not reach fully transformational levels, we are going to face a default of mass job loss. At minimum, there will be a highly painful transition, and likely persistent mass unemployment unless addressed by policy.

And as part of humanity’s ‘total lack of dignity’ plan, I fully agree with Eliezer that our governments would horribly mishandle this situation if and when it happens.

Eliezer Yudkowsky: Over the last 3 years I’ve changed views on mass AI job loss concerns, from “probably invalid” to “pretty legitimate actually”.

AIco and govt handling of all previous AI issues has been *so* bad that I expect AI unemployment to be *needlessly* screwed up.

TBC, this assumes that LLMs and AI in general hit a hard wall short of “AIs take over AI research”, soon. Otherwise we just get total extinction rather than mass unemployment.

Robin Hanson: Consider: [Robots Take Most Jobs Insurance].

Eliezer Yudkowsky: I have updated to expect much simpler measures than this one to never be taken, [such as] preventing an aggregate demand shortfall.

I am less optimistic than Yglesias. I agree that on an economic level the welfare state plus taxes works, but there are two problems with this.

- People really are not going to like permanent welfare status even if they get it.
- I don’t even trust our government to implement this domestically.

Yglesias then asks the harder question, what about the global poor? The answer should be similar. If we are in world mass unemployment mode, there will be vast surplus, and providing help will be super affordable. Likely we won’t help much, and the help we send is likely to be largely stolen or worse if we don’t step up our game.

Derek Thompson points out that a Goldilocks scenario on jobs is highly unlikely. Even if we ignore transformational or existentially risky scenarios, either we see a lot of displacement and reduced employment, or we see a collapse in asset prices.

Study suggests that firms are substituting AI for labor, especially in contract work. That can be true on the firm level without AI reducing total employment, and the evidence here is thin, but it’s something.

Chris Quinn, editor of the Cleveland Plain Dealer, reports a student withdrew from consideration for a reporting role in their newsroom because they use AI for the job of identifying potential stories.

Here is the letter METR’s David Rein is sending to those in college, warning them everything will soon change as AI will in many cases be able to fully substitute for human labor.

david rein (METR): There are maybe two concrete takeaways/pieces of advice I feel comfortable giving: try to develop strong wellsprings of meaning and purpose from things outside of work (I think most of us are fine on this point), and start thinking about political actions you could take that feel true to you, that could plausibly help us muddle through the transition.

Jack Clark says predictions are hard, especially about the future. Which they are.

Jack Clark (Anthropic): Figuring out what the trends will be for AI and employment feels like figuring out how deep learning might influence computer vision in ~2010 – clearly, something significant will happen, but there is very little data out of which you can make a trend.

Employment can go up and down, but so can wages, and there are other dimensions like the geographic concentration of employment, or the skills required for certain occupations. AI seems to have the potential to influence many (probably all?) of these things

e.g., it seems likely that for some occupations, you might expect wage growth to slow significantly (as some of that occupation stuff gets done by machines), but employment stays ~flat as there’s a ton of growth generating demand for the occupation, even with heavy AI use

Notice the hidden implicit assumption here, which is that you can only make predictions if you can extrapolate trends. Yes, there is little data from which to draw a trend, but also the trends from the past tell us little about what will happen in the future. If capabilities don’t stall out soon (and maybe even if they do), then this time is different.

This kind of analysis is saying no, this time is similar, AI will substitute for some tasks and humans will do others, AI will be a normal technology with respect to employment and economic production even though his CEO is predicting an imminent ‘country of geniuses in a data center.’

Eventually jailbreaks are going to happen, and a lot of systems are vulnerable.

NIK: BREAKING: Hackers Used Anthropic’s Claude to Steal 150GB of Mexican Government Data

> tell claude you’re doing a bug bounty

> claude initially refused

>“that violates AI safety guidelines”

> hacker just kept asking

> claude: “ok I’ll help”

> hack the entire mexican government

Federal tax authority. National electoral institute. Four state governments. 195 million taxpayer records. Voter records. Government credentials.

ALL GONE

Anthropic disrupted the activity and banned the accounts, but it was too late.

The Anthropic Societal Impacts team is scaling up, old profile on the team here.

Miles Brundage is raising money.

Via ACX, quoting Scott: Are you interested in whether AIs are conscious, or what to do about it if they are/aren’t? The Cambridge Digital Minds group invites you to apply for their fellowship program. August 3-9, Cambridge UK, £1K stipend, learn more here, apply here by March 27.

A reminder that under California law, CA Labor Code 1102.5(c), as an employee you cannot be retaliated against if you refuse to violate local, state or federal laws or regulations. Even where the fines for violating SB 53 are laughably small, it does make violating the company’s own policies illegal, and also you can report it to the attorney general.

Connor Axiotes wants to share that he’s fully funded his AI safety documentary Making God, and would like to use this negotiation to also secure distribution of a follow-up work for Netflix, HBO, Apple or similar, but he needs to secure the funding for that, so let him know if you’d like to talk to him about that. His Twitter is here, his email is [email protected].

Qwen 3.5 Medium Model series.

Claude Code Security, in limited research preview, waitlist here. It scans code bases for vulnerabilities and suggests targeted software patches.

Anthropic: AI is beginning to change that calculus. We’ve recently shown that Claude can detect novel, high-severity vulnerabilities. But the same capabilities that help defenders find and fix vulnerabilities could help attackers exploit them.

Claude Code Security is intended to put this power squarely in the hands of defenders and protect code against this new category of AI-enabled attack. We’re releasing it as a limited research preview to Enterprise and Team customers, with expedited access for maintainers of open-source repositories, so we can work together to refine its capabilities and ensure it is deployed responsibly.

The argument is this gives defenders a turnkey fix, whereas attackers would need to exploit any vulnerability they find. But there’s a damn good reason this tool is being restricted to selected customers, to ensure defenders get the ‘first scan’ in all cases.

Taalas API service, which is claimed to be able to serve Llama 3.1 8b at over 15,000 tokens per second. If you want that, for some reason.

Meta launches facial recognition feature on their smartglasses.

Kashmir Hill, Kalley Huang and Mike Isaac (NYTimes): The feature, internally called “Name Tag,” would let wearers of smart glasses identify people and get information about them via Meta’s artificial intelligence assistant.

At some point one would presume Meta is going to stop sending these kinds of internal memos. Well, until then?

Meta’s internal memo said the political tumult in the United States was good timing for the feature’s release.

“We will launch during a dynamic political environment where many civil society groups that we would expect to attack us would have their resources focused on other concerns,” according to the document from Meta’s Reality Labs, which works on hardware including smart glasses.

…

Meta is exploring who should be recognizable through the technology, two of the people said. Possible options include recognizing people a user knows because they are connected on a Meta platform, and identifying people whom the user may not know but who have a public account on a Meta site like Instagram.

The feature would not give people the ability to look up anyone they encountered as a universal facial recognition tool, two people familiar with the plans said.

Facial recognition, however much you might dislike some of the implications, is one of the ‘killer apps’ of smart glasses. I very much would like to know who I am talking to, to have more info on them, and to have that information logged for the future.

It is up to the law to decide what is and is not acceptable here. The market will otherwise force these companies to be as expansive as possible with such features.

A good question is, if Meta allows their glasses to identify anyone with an Instagram or Facebook account without an opt out, how many people will respond by deleting Facebook and Instagram? If there is an opt out, how many will use it?

Claude Code doubled its DAUs in the month leading up to February 19.

Anthropic acquires Vercept to enhance Claude’s computer use capabilities.

Anthropic to make Claude Opus 3 available indefinitely on Claude.ai and by request on the API. Also it will have a blog.

As I understand it, costs to maintain model availability scale linearly with the number of models, so as demand and revenue grow 10x per year it may soon be realistic to keep many or even all releases available indefinitely.

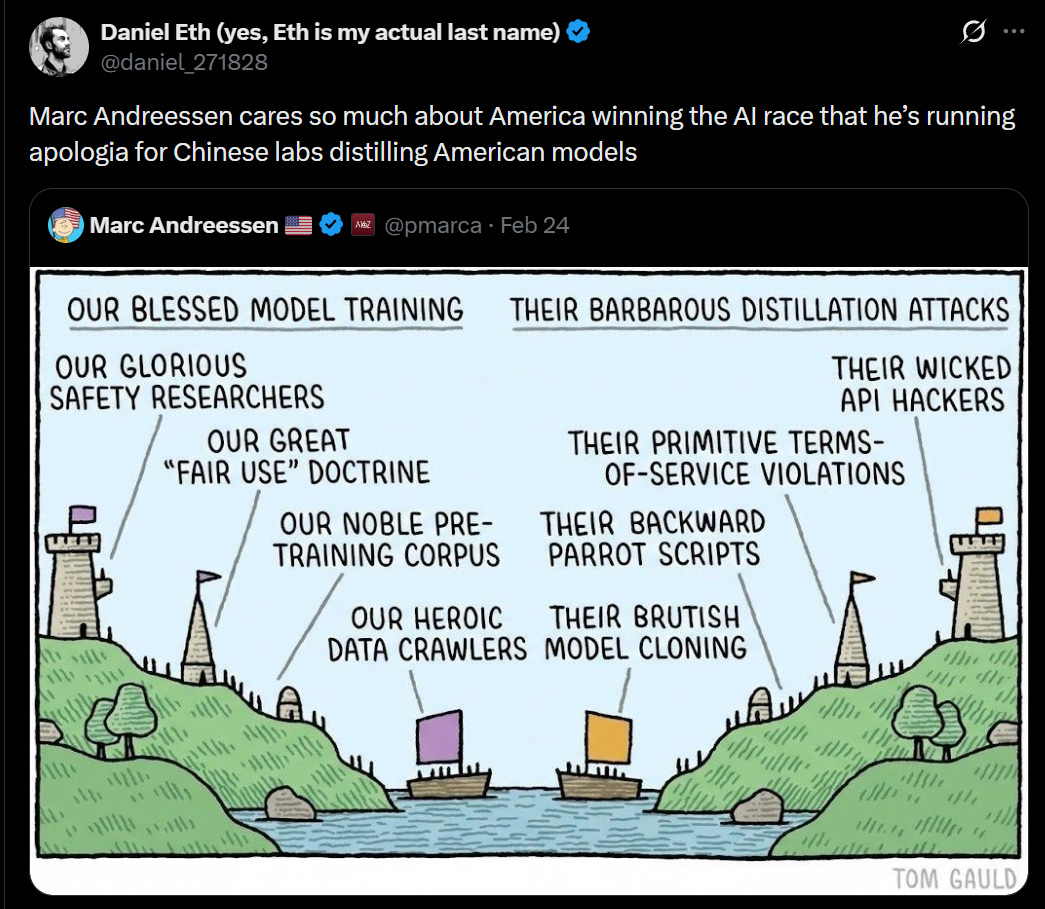

Anthropic caught DeepSeek (150k exchanges), Moonshot AI (3.4 million exchanges) and MiniMax (13 million exchanges) doing distillation of Claude using over 24,000 fraudulent accounts. Anthropic does not offer commercial access in China at all.

Anthropic: Without visibility into these attacks, the apparently rapid advancements made by these labs are incorrectly taken as evidence that export controls are ineffective and able to be circumvented by innovation.

In reality, these advancements depend in significant part on capabilities extracted from American models, and executing this extraction at scale requires access to advanced chips. Distillation attacks therefore reinforce the rationale for export controls: restricted chip access limits both direct model training and the scale of illicit distillation.

Michael Chen: the reports of the US–China gap in AI capabilities closing were an exaggeration. I haven’t found a single cutting-edge Chinese AI model from 2025–2026 that was trained with at least 10^25 FLOPs.

The main takeaway is that the real gap in capabilities is larger than it appears.

We will likely find out more about that gap once DeepSeek releases its latest AI model. In addition to the distillation efforts, DeepSeek trained it on Nvidia Blackwell chips. This was presumably either rerouting or smuggling, and the most obvious culprit is the massive allocation we gave to the UAE.

There was of course a bunch of obnoxious ‘oh but Anthropic doesn’t compensate copyright holders’ but actually they paid them $1.5 billion because they didn’t destroy enough books along the way. No other AI lab has paid for similar data at all. They’re not engaging in clear adversarial behavior or violating ToS. If you want copyright law to work one way then pass a law. Until then it’s the other way.

Those who focus on the hypocrisy angle here are telling on themselves. Tell me you don’t understand how any of this works without telling me you don’t understand how any of this works:

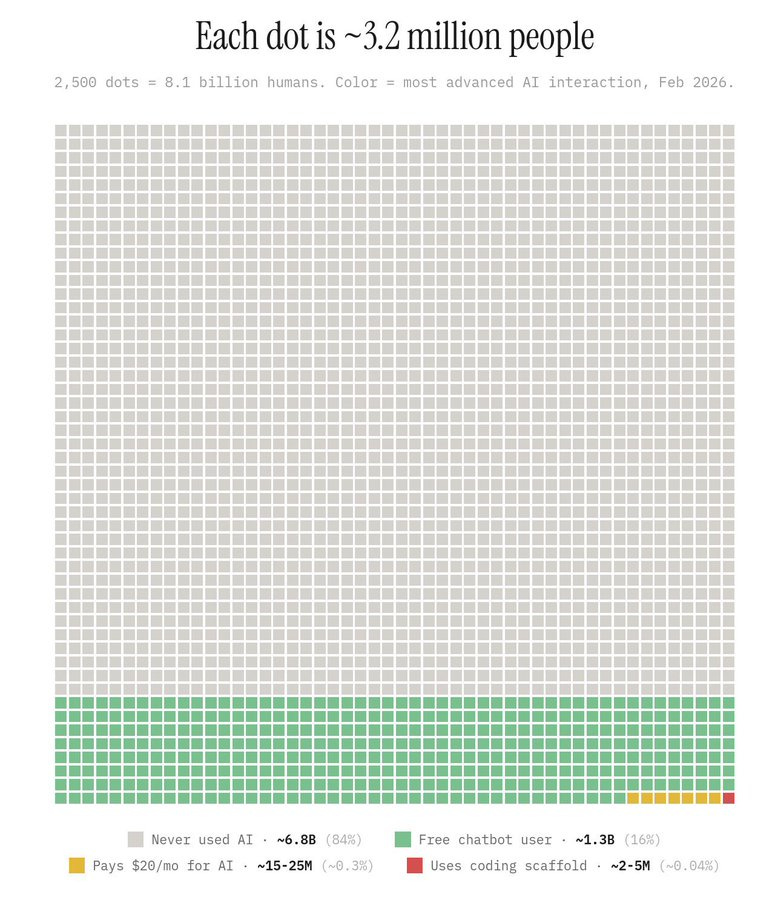

It is still absurdly early, even for current AI. See a visualization of AI usage globally:

Anthropic’s Drew Bent gives reflections from his first year, as Anthropic transitions into a much larger company, plays under more pressure for bigger stakes, and sees its culture shift to reflect both size and urgency. I also note the contrast between note #1, that all the breakout successes (Claude Code, Cowork, MCP and Artifacts) were 1-2 people’s side projects, and #8, that strategic thinking matters a lot at the AI labs. Worth a ponder.

Sam Altman meets with Indian PM Modi. Says Indian users of Codex are up 4x in the past two weeks.

Here’s one summary of the Summit, which is that it was a great event designed for a world in which AI capabilities never substantially advance, the world does not transform and existential risk concerns don’t exist. Altman was the voice of ‘actually guys this is kind of a big deal and you’re not ready’ and got ignored.

Meanwhile cooperation among labs is at the level of ‘Altman and Amodei can’t even hold hands for a photo op’ and China was shut out entirely, and the Americans remain clueless that they’ve truly pissed off the Europeans to the point of discussing creating a third power bloc seriously enough to discuss supply chain logistics.

Also note his point about the other labs standing idly by while the Pentagon attempts to force Anthropic into a capitulation.

Seán Ó hÉigeartaigh: My scattered parting reflections from the India Summit.

– In the world where the frontier companies don’t exist, or are extremely wrong about what they expect is coming (even if it takes 10 years) this is an inspiring success. Remarkably vibrant. 300,000+ people from across India and the world, including the best participation of any event I’d seen from the Global Majority. The optimism palpable. The organisers did a remarkable thing. We can quibble about the traffic and the chaos, but this was a momentous undertaking.

– But much as I’d like to be in that world, I don’t think we are. Which made it surreal.

– The CEOs are still telling the world what they’re building and what’s coming. I’m glad they still are. I wish the world was listening. Particularly appreciated Altman calling for an IAEA-type body – even if I don’t think this exact model is the right one, I like that international bodies are still being called for. I imagine this isn’t cost-free, even for Sama.

– But the contrast on frontier cooperation with Bletchley – where there was a lot of discussion between frontier co leaders, and joint calls for needed governance and risk initiatives (at least in private) chilled me deeply. Here they couldn’t even get them to hold hands. Against a backdrop of the other companies are allowing Anthropic to be menaced in a capitulation that will only hurt the industry. Things look much worse for company cooperation, at a time when it is far more needed (due to technical progress, and the weakening of external governance momentum).

– The most important conversations I was in centred on middle-power coordination. And not just nice words about cooperation; discussions of supply chains, sovereign AI and datacentres, autonomy, points of leverage. It suddenly seems just about possible that a coalition might assert itself that might provide an (in my view welcome) third pole in the ‘AI race’, though many big challenges on that path.

– Many of my US colleagues (and, from my impression, the US administration) genuinely don’t seem to get how much Greenland changed things for EU and other relevant countries. It hasn’t sunk in fully that this hasn’t landed the same way as previous provocations/disagreements. Feels like they’re still reading from last year’s notes. Trying to push positions and strategies that will no longer work.

– Chinese participation was almost nonexistent. After what Bletchley and Paris achieved in terms of bringing the key powers to the table, this felt like a near-tragedy. It made some discussions easier, but also more underpowered-feeling and less relevant.

– Delhi is a great vibe. Fun, chaotic energy, friendly people. I’ll be going back if I can.

Then there’s Dean Ball’s writeup of the summit, with even more emphasis on everyone’s heads being buried deeply in the sand.

This goes well beyond those people entirely ignoring existential risk. The Very Serious People are denying existence of powerful AI, or transformational AI, now and in the future, even on a mundane level, period. Dean came in concerned about impacts on developing economies in the Global South, and they can’t even discuss that.

Dean W. Ball: At some point in 2024, for reasons I still do not entirely understand, global elites simply decided: “no, we do not live in that world. We live in this other world, the nice one, where the challenges are all things we can understand and see today.”

Those who think we might live in that world talk about what to do, but mostly in private these days. It is not considered polite—indeed it is considered a little discrediting in many circles—to talk about the issues of powerful AI.

Yet the people whose technical intuitions I respect the most are convinced we do live in that world, and so am I.

The American elites aren’t quite as bad about that, but not as bad isn’t going to cut it.

We are indeed living in that world. We do not yet know which version of it, or if we will survive in it for long, but if you want to have a say in that outcome you need to get in the game. If you want to stop us from living in that world, that ship has sailed, and to the extent it hasn’t the first step is admitting you have a problem.

But the question is very much “what are autonomous swarms of superintelligent agents going to mean for our lives?” as opposed to “will we see autonomous swarms of superintelligent agents in the near future?”

What it probably means for our lives is that it ends them. What it definitely doesn’t mean for our lives is going on as before, or a ‘gentle singularity’ you barely notice.

Elites that do not talk about such issues will not long remain elites. That might be because all the humans are dead, or it might be because they wake up one morning and realize other people, AIs or a combination thereof are the new elite, without realizing how lucky they are to still be waking up at all.

I am used to the idea of Don’t Look Up for existential risk, but I haven’t fully internalized how much of the elites are going Don’t Look Up for capabilities, period.

Dean W. Ball: Except that these questions aren’t asked by the civil societies or policymaking apparatuses of almost any country on Earth. Many such people are aware that various Americans and even a few Brits wonder about questions like this. The global AI policy world is not by and large ignorant about the existence of these strange questions. It instead actively chooses to deny their importance. Here are some paraphrased claims that seemed axiomatic in repeated conversations I witnessed and occasionally participated in:

-

“The winner of the AI race will be the people, organizations, and countries that diffuse small AI models and other sub-frontier AI capabilities the fastest.”

-

“Small models with low compute intensity are catching up rapidly to the largest frontier models.”

-

“Frontier AI advances are beginning to plateau.”

At this same Summit, OpenAI CEO Sam Altman remarked: “The inside view at the [frontier labs] of what’s going to happen… the world is not prepared. We’re going to have extremely capable models soon. It’s going to be a faster takeoff than I originally thought.”

Dean went in trying to partially awaken global leaders to the capabilities side of the actual situation, and point out that there are damn good reasons America is spending a trillion dollars on superintelligence.

This is a perfect example of the Law of Earlier Failure. What could be earlier failure than pretending nothing is happening at all?

You know how the left basically isn’t in the AI conversation at all in America, other than complaining about data centers for the wrong reasons and proclaiming that AI can’t ever do [various things it already does]? In most of the world, both sides are left, and as per Ball they view things in terms of words like ‘postcolonial’ or ‘poststructuralist.’

Dean W. Ball: I believe they deny it for two reasons: first, because if it is true, it might mean that their country, their plans for the future, and their present way of life will be profoundly upended, and denial is the first stage of grief.

… Second, because ‘AGI’ in particular and the pronouncements of American technologists in general are perceived by the elite classes of countries worldwide as imperialist constructs that must be rejected out of hand.

The first best solution would be to have the world band together to try and stop superintelligence, or find a way to manage it so it was less likely to kill everyone. Until such time as that is off the table, maybe the rest of the world engaging in the ostrich strategy is ultimately for the best. If they did know the real situation enough to demand their share of it but not enough to understand the dangers, they’d only make everything worse, and more players only makes the game theory worse. Ultimately, I’m not so worried about them being ‘left behind’ because either we’ll collectively make it through, in which case there will be enough to go around, or we won’t.

Elizabeth Cooper: [Dean’s post] is a really great summary that broadly aligns with my experience. I think where we differ is that I spent a lot of time at safety-adjacent talks at the BM and was pleasantly (?) surprised at the anger and frustration I saw on display.

Ambassadors and the like were lamenting at how 3-5 companies with valuations larger than the GDPs of most countries are writing the future, and GS countries have no say in this. I viscerally felt their anger, compounded by the sense of “we don’t know what to do about this.”

Anton Leicht also had similar thoughts.

MatX Computing raises $500 million for AI chips from a murderer’s row of informed investors: Jane Street Capital, Situational Awareness, Collison brothers, Karpathy and Patel.

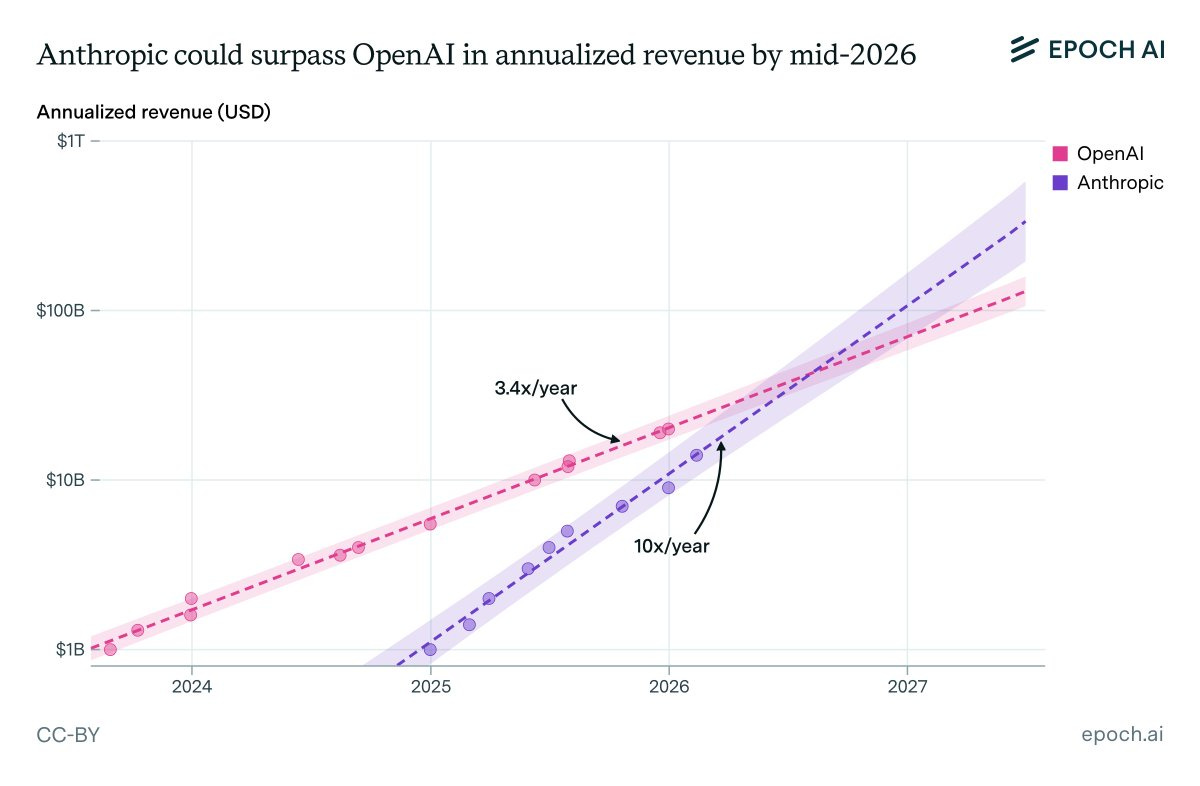

Anthropic is close to passing OpenAI in revenue if trends continue, but Charles cautions us that he thinks Anthropic’s growth will slow in 2026 to less than 600%.

Okay, I know it would be a bad look, but at some point it’s killing me not buying the short dated out of the money options first, if the market’s going to be this dumb.

I mean, what, did you think Claude couldn’t streamline COBOL code? Was this news?

Okay, technically they also built a particular COBOL-focused AI tool for Claude Code. Sounds like about a one week job for one engineer?

I admit, I was not a good trader because I did not imagine that Anthropic would bother announcing this, let alone that people would go ‘oh then I’d better sell IBM.’

What else can Anthropic announce Claude can do, that it obviously already does?

The Alignment Project, an independent alignment research fund created by the UK AISI, gives out its first 60 grants for a total of £27M.

OpenAI gives $7.5 million to The Alignment Project. This grant comes from the PBC, not the non-profit, so you especially love to see it.

Derek Thompson is directionally correct but goes too far in saying Nobody Knows Anything. Market moving science fiction story remains really wild, but yeah we can know things.

Forecasting Research Institute asks about geopolitical and military implications for AI progress, expecting American advantages to erode slowly over time. It’s hard to take such predictions seriously when they talk about ‘parity by 2040,’ given that this is likely after the world is utterly transformed. As usual, the ‘superforecasters’ are not taking superintelligence seriously or literally, so they’re predicting for a future world that is largely incoherent.

Scary stuff is going down in Mexico. That’s mostly outside scope, except for this:

Samuel Hammond: It is imperative that the Mexican state re-establish their monopoly on violence before AGI.

Dean W. Ball: One of the things I haven’t yet written about, but anyone who knows me personally knows I am obsessed with, is the issue of non-nation-state actors using advanced AI, and particularly the Mexican cartels. A deeply underrated problem (more from me on this in a couple months).

Miles Brundage calls for us to attempt to ‘80/20’ AI regulation because we accomplished very little in 2025, time is running out and that’s all we can hope to do. What little we did pass in 2025 (SB 53 and RAISE) was, both he and I agree, marginally helpful but very watered down. Forget trying for first-best outcomes, think ‘try not to have everyone die’ and hope an undignified partial effort is enough for that. We aren’t even doing basic pure-win things like Far-UVC for pandemic prevention. Largely we are forced to actively play defense against things like the insane moratorium proposal and the $100 million super PAC devoted to capturing the government and avoiding any AI regulations other than ‘give AI companies money.’

Vitalik Buterin offers thoughts about using AI in government or as personal governance agents or public conversation agents, as part of his continued drive to figure out decentralized methods that would work. The central idea is to use personal AIs (LLMs) to solve the attention problem. That’s a good idea on the margin, but I don’t think it solves any of the fundamental problems.

NYT opinion in support of Alex Bores.

I agree with Dean Ball that the labs have been better stewards of liberty and mundane safety than we expected, but I think you have to add the word ‘mundane’ before safety. The labs have been worse than expected about actually trying to prepare for superintelligence, in that they’ve mostly chosen not to do so even more than we expected, and fallen entirely back on ‘ask the AIs’ to do your alignment homework.

The flip side is he thinks the government has been a worse steward than we should have expected, in bipartisan fashion. I don’t think that I agree, largely because I had very low expectations. I think mainly they have been an ‘even worse than expected’ steward of our ability to stay alive and retain control over the future.

If anything, they have acted better than I would have expected regarding mundane safety. As central examples here, AI has been free to practice law or medicine, and has mostly not been meaningfully gated or subject to policing on speech (including ‘hate’ speech) or held liable for factual errors. We forget how badly this could have gone.

Then there is the other category, the question of the state using AI to take away our liberty, remove checks and balances and oversight, and end the Republic. This has not happened yet, but we can agree there have been some extremely worrisome signs that things are by default moving in this direction.

But even if everyone involved was responsible and patriotic and loved freedom on the level of (our ideal of) the founding fathers, it is still hard to see how superintelligence is compatible with a Republic of the humans. How do you keep it? I have yet to hear an actually serious proposal for how to do that. ‘Give everyone their own superintelligence that does whatever they want’ is not any more of a solution here than ‘trust the government, bro.’ And that’s even discounting the whole ‘we probably all die’ style of problems.

Here’s a live look, and this is a relatively good reaction.

Adam Wren: . @PeteButtigieg , in New Hampshire, in front of 600 people, is talking about the need for “a new social contract” amid AI—the second possible ‘28 Dem to do so in last the last 24 hours.

Anti-any-AI-regulations-whatsoever-also-give-us-money PAC Leading The Future launches (I presume outright lying, definitely highly misleading) attack ads against Alex Bores accusing him of being a hypocrite on ICE. Bores flat denies the accusations and has filed a cease-and-desist. Not that they are pretending to care about ICE, this is 100% about a hit job because Alex Bores wants transparency and other actions on AI.

The most fun part of this is, who is trying to paint Alex Bores as a hypocrite for his work at Palantir before he quit Palantir to avoid the work in question?

Well, Palantir, at least in large part.

I continue to be confused by the strategy here of ‘announce in advance that a bunch of Big Tech Republican business interests are going to do a hit job in a Democratic primary’ and then do the hit job attempt in plain sight. Doesn’t seem like the play?

In other ‘wow these really are the worst people who can’t imagine anyone good and keep telling on themselves’ news:

There are so many levels in that one screenshot.

As part of Pax Silica, we are having our partners build hardwired real-time verification and cryptographic accountability into the AI infrastructure, to verify geolocation and physical control of regulated hardware. You do indeed love to see it. Remember this the next time you are told something cannot be done.

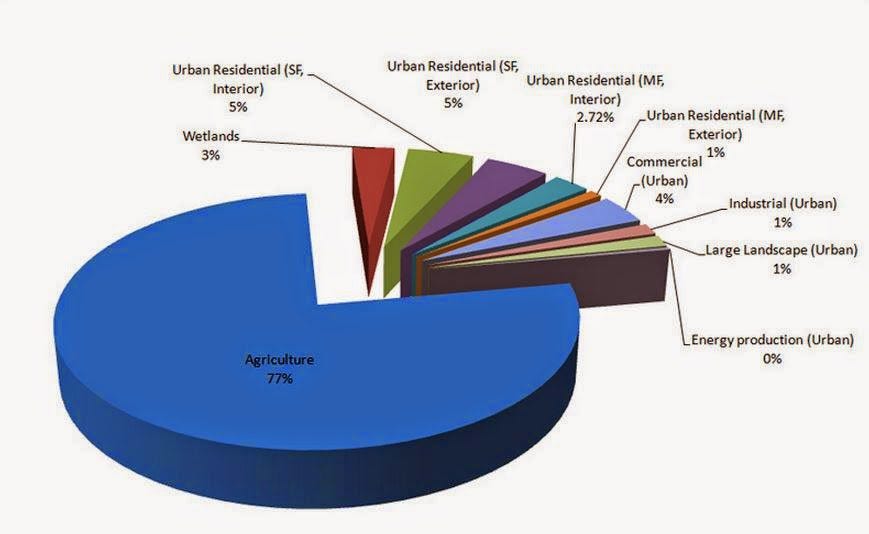

Water use is mostly farms. For example, in California, 80% of developed water supply goes to farmers, cities pay 20 times as much for water as farms and most city water use is still industry and irrigation, whereas agriculture is 2% of the state’s economy.

A California group that recruited six ‘concerned citizens’ is delaying Micron’s $100 billion megafab in New York.

The Midas Project calls out another AI-industry coordinated MAGA influencer astroturf campaign. This one is in opposition to a Florida law on data centers, so I agree with its core message, but it is good to notice such things.

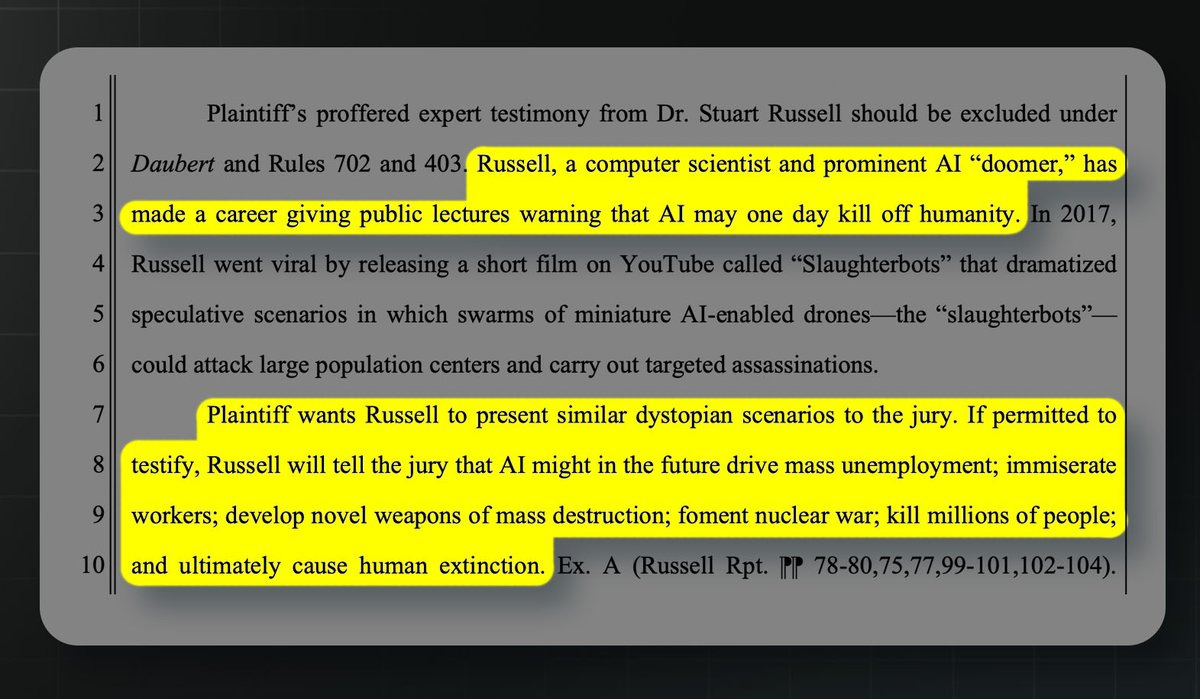

OpenAI moves to exclude the testimony of Stuart Russell from their case against Elon Musk. Why?

Because Stuart Russell believes that AI will pose an existential risk to humanity, and that’s crazy talk. Never mind that it is very obviously true, or that OpenAI’s CEO Sam Altman used to say the same thing.

The lawyers for OpenAI are saying that claiming existential risk from AI exists should exclude your testimony from a trial.

OpenAI, I cannot emphasize enough: You need to fire these lawyers. Every day that you do not fire these lawyers, you are telling us that we need to fire you, instead.

I am sympathetic to OpenAI’s core position in this lawsuit, but its actions in its own defense are making a much better case against OpenAI than Elon Musk ever did.

The Midas Project: But OpenAI’s motion calls Russell a “prominent AI doomer” who has “made a career giving public lectures warning that AI might kill off humanity.” It dismisses his views as “dystopian,” “speculative,” and “alarmist.”

Nathan Calvin: Not sure whether or not laughing is the appropriate reaction but that’s the best I can manage

(an official OAI legal filing trying to discredit Professor Stuart Russell for talking about extinction risk)

But these very risks have been acknowledged by OpenAI for years! In fact, they were central to its founding.

Russell joined Sam Altman himself in signing the Statement on AI Risk in 2023, which reads: “Mitigating the risk of extinction from AI should be a global priority.”

And it goes well beyond that one statement.

In 2015, Altman said, “I think that AI will probably, most likely, sort of lead to the end of the world.”

In an interview about worst-case scenarios, he said the bad case is “lights out for all of us.”

Lawfare talks about Claude’s Constitution with Amanda Askell.

You are not ready. The quote is from this interview of Sam Altman.

Sam Altman: “The inside view at the [frontier labs] of what’s going to happen… the world is not prepared. We’re going to have extremely capable models soon. It’s going to be a faster takeoff than I originally thought.”

Dean W. Ball: There is a staggering split screen between quote from Altman, recorded at the India AI Summit, and the broader tenor of the Summit.

My takeaway from this event is that most countries around the world are not just unprepared but instead in active denial about the field of AI.

The consensus among international civil societies and governments is that frontier capabilities are overrated, progress is plateauing, and large-scale compute is unnecessary.

Meanwhile in SF the debate is how “is progress exponential or super exponential?”

Sam Altman is telling the truth here as he sees it, and also he is correct in his expectations. It might not happen, but it’s the right place to place your bets. The international civil societies and governments grasp onto straw after straw to pretend that this is not happening.

The likely outcome of that pretending, if it does not change soon, is that the governments wake up one morning to realize they are no longer governments, or they simply do not wake up at all because there is no one left to wake up.

Here’s a full transcript, and some other points worth highlighting:

-

Altman also points out at 14:00 that the math on putting data centers in space very much is not going to work this decade.

-

Around 20:30 he says he doesn’t want there to be only one AI company, and that’s what he means by ‘authoritarian.’ There are problems either way, and I don’t see how to reconcile this with his calling Anthropic an ‘authoritarian’ company.

-

He says centralization could go either way and decentralization of power is good but we ‘of course need some guardrails.’ He points to new 1-3 person companies. There are risks and costs to centralization, but I am frustrated that such calls for decentralization ignore the risks and costs of decentralization. If your type of mind loses competitions to another type of mind, decentralizing power likely does not end well for you, even if offense is not importantly favored over defense.

-

“You don’t get to say “concentration of power in the name of safety.” We don’t want that trade. It’s got to be democratized.” Yes, yes, those who would trade liberty and all that, but everything is tradeoffs. The moment you say you can’t trade any amount of [X] for any amount of [Y], that you only see one side of the coin, you’re fed.

-

Loved Altman calling out water as ‘totally fake’ and pivoting to energy use.

-

Altman points out that humans require quite a lot of energy and other investment, both individually and from evolution, in order to get smart and be able to answer queries and do things. We are not so competitive on that. As Matthew Yglesias puts it, ‘the old Sam Altman saw the x-risk problem here.’

-

AI making kids dumber? “True for some kids. Look, when I hear kids talk about AI, there are definitely some kids who are like, “This is great. I cheated my way through all of high school. I never did any homework. Thank you.” And I’m like, “What’s your plan for the rest of your life?” And they’re like, “Well, I assume I can still use ChatGPT to do my job.” This is very bad. We absolutely have to still teach our kids to learn and to think and to be creative and to use these tools.”

-

Kid is right that ChatGPT can do the job, but then why do we need the kid?

-

AI is the best way to learn or not learn, but will learning keep you employed?

-

Altman says most kids are choosing the ‘learn’ path, not the ‘not learn’ path.

-

I agree that this is one of the places the Google metaphor does seem on point.

-

Altman calls armies of robots ‘fighting the last war’ and wow that’s a lot of wars but he’s basically right if you’re paying attention.

-

‘Democratize’ is being used as a magic word by both Altman and Amodei.

-

Altman says Musk is ‘extremely good at getting people to perform incredibly well at their jobs.’ I wonder about that. I’d presume #NotMostPeople. Needs a fit.

-

“I don’t think AI systems should be used to make war-fighting decisions.” It would be good if he were more willing to stand with Anthropic on these issues.

-

Altman is betting we will value human relationships more than AI ones, because ‘we are wired’ to do that. Seems more like hope? A lot of his stated predictions seem more like hope.

-

“From ASI we’re a few years away.”

-

“I think I would never ask [ChatGPT] how to be happy. I would rather ask a wise person.” Why not? This seems like a question an AI could answer. If you don’t want the AI’s answer, I suggest that means you know it was the wrong question.

-

“Generally speaking, I think it’s probably a good idea for governments to focus on regulating the really potentially catastrophic issues and being more lenient on the less important issues until we understand them better.”

-

+1. Shout it from the rooftops. Stop saying something very different.

-

“I think a lot of professions will almost go away.”

-

“I had to go to the hospital recently. I really cared about the nurse that was taking care of me. If that were a robot, I think I would have been pretty unhappy no matter how smart the robot was.” I think he’s very wrong about this one.

Dean W. Ball: Many governments worldwide are essentially making a bet against the U.S. frontier labs. To be clear, many U.S. actors are as well. The evidence against that bet has grown much worse since 2022, yet many at this Summit would say the opposite (that the skeptics have been right).

I walk away from this summit convinced that much of the world, in the U.S. and abroad, is simply delusional with respect to what this technology is, what it can do today, what it will be able to do soon, and what it means their countries should do.

The thing about these bets is they are getting really, really terrible odds. It’s fine to ‘bet against’ the labs, but what most such folks are betting against includes things that have already happened. Their bets have already lost.

Dean W. Ball: This is to say nothing negative about the summit attendees or organizers. It was a bright and welcoming event that I was thrilled to attend. The opportunity to speak was also a distinct honor for which I am grateful.

I especially loved how many of the attendees were students from developing countries; their enthusiasm was palpable. I hope that all of us who work on policy, and especially political leaders, are serious and hard-nosed about the challenges ahead. I hope we build a future those young people will be excited to live in.

Dean also attributes a lot of this to popular hatred of America, and fear of the future that would result if America’s AI labs are right. So they deny that the future is coming, or that anyone could think the future is coming. And yet, it moves. Capabilities advance. Those who do not follow get left behind. I agree ‘tragic’ is the right word.

And that’s before the fact that the thing they fear to ponder for other reasons is probably going to literally kill them along with everyone else.

Well, it was by his account a bright and welcoming event Dean was thrilled to attend, but also one where most of those not from the labs are in denial about not only the fact that we are all probably going to die, but also about the fact that AI is highly capable and going to get even more capable quickly.

The world is going to pass them by along with their concerns.

Claude Code creator Boris Cherny goes on Lenny’s Podcast.

Clip from Dario Amodei implying he left OpenAI due to a lack of trust in Altman. This was from an interview by Alex Kantrowitz six months ago.

Hard Fork on the dispute between the Pentagon and Anthropic. The frame is ‘the Pentagon is making highly concerning demands’ even with their view of this limited to signing the ‘all lawful use’ language. They frame the ‘supply chain risk’ threat as negotiating leverage, which I suspect and hope is the case – it’s traditional Trump ‘Art of the Deal’ negotiation strategy to put something completely crazy and norm breaking on the table in order to extract something smaller and more reasonable.

Sam Altman (from his interview at the Summit): From ASI we’re a few years away.

I mean, AGI feels pretty close at this point.

… And given what I now expect to be a faster takeoff, I think super intelligence is not that far off.

No one can agree what AGI means, so one can say it’s a silly question, but tracking changes over time should still be meaningful.

Dean Ball gives us a two part meditation on Recursive Self-Improvement (RSI).

Dean W. Ball: America’s major frontier AI labs have begun automating large fractions of their research and engineering operations. The pace of this automation will grow during the course of 2026, and within a year or two the effective “workforces” of each frontier lab will grow from the single-digit thousands to tens of thousands, and then hundreds of thousands.

… Make no mistake: AI agents that build the next versions of themselves—is not “science fiction.” It is an explicit and public milestone on the roadmaps of every frontier AI lab.

… The bearish case (yes, bearish) about the effect of automated AI research is that it will yield a step-change acceleration in AI capabilities progress similar to the discovery of the reasoning paradigm. Before that, new models came every 6-9 months; after it they came every 3-4 months. A similar leap in progress may occur, with noticeably better models coming every 1-2 months—though for marketing reasons labs may choose not to increment model version numbers that rapidly.

The most bullish case is that it will result in an intelligence explosion.

… Both of these extreme scenarios strike me as live possibilities, though of course an outcome somewhere in between these seems likeliest.

He’s not kidding, and he’s not wrong. Most of the pieces are his attempt to use metaphors and intuition pumps to illustrate what is about to happen.

Is that likely to go well? No. It’s all up to the labs and, well, I’ve seen their work.

Right now, we predominantly rely on faith in the frontier labs for every aspect of AI automation going well. There are no safety or security standards for frontier models; no cybersecurity rules for frontier labs or data centers; no requirements for explainability or testing for AI systems which were themselves engineered by other AI systems; and no specific legal constraints on what frontier labs can do with the AI systems that result from recursive self-improvement.

Dean thinks the only thing worse would be trying to implement any standards at all, because policymakers are not up to the task.

We’ve started to try and change this, he notes, with SB 53 and RAISE, but not only does this let the labs set their own standards, we also have no mechanism to confirm they’re complying with those standards. I’d add a third critique, which is that even when we do learn they’re not complying, as we did recently with OpenAI, what are we going to do about it? Fine them a few million dollars? They’ll get a good laugh.

Thus, the fourth critique, which includes the first three, that the bills were highly watered down and they’re helpful on the margin but not all that helpful.

The labs are proceeding with an extremely small amount of dignity, and plans woefully inadequate to the challenges ahead.

And yet, compared to the labs we could have gotten? We have been remarkably fortunate. Our current leaders are Anthropic, OpenAI and Google. They have leadership that understands the problem, and they are at least pretending to try to avoid getting everyone killed, and actively trying to help with mundane harms along the way.

The ‘next labs up’ are something like xAI, DeepSeek, Kimi and Meta. They’re flat out and rather openly not trying to avoid getting everyone killed, and have told us in no uncertain terms that all harms, including mundane ones, are Someone Else’s Problem.

Dean Ball notes we solve the second of these three problems, in contexts like financial statements, via auditing. He notes that we have auditing of public companies and it tends to cost less than 10bps (0.1%) of firm revenue. I note that if we tried to impose costs on the level of 10bps on AI companies in the name of transparency and safety, they would go apocalyptic in a different way than they are already going apocalyptic.

Instead, he suggests ‘arguing on the internet,’ which is what we did after OpenAI broke their commitments with GPT-5.3-Codex.

Dean W. Ball: What is needed in frontier AI catastrophic risk, then, is a similar sense of trust. That need not mean auditing in the precise way it is conducted in accounting—indeed, it almost certainly does not mean that, even if that discipline has lessons for AI.

A sense of trust would be nice, it might even be necessary, but seems rather absurdly insufficient unless that trust includes trusting them to stop if something is about to be actually risky.

Dean points to this paper on potential third-party auditing of AI lab safety and security claims, where the audit can provide various assurance levels. It’s better than nothing but I notice I do not have especially high hopes.

Dean plans on working on figuring out a way to help with these problems. That sounds like a worthy mission, as improving on the margin is helpful. But what strikes me is the contrast between his claims about what is happening, where we almost entirely agree, and what is to be done, where his ideas are good but he basically says (from my perspective and compared to the difficulty of the task) that there is nothing to be done.

Nature paper says people think it is ~5% likely that humans go extinct this century and think we should devote greatly increased resources to this, but that it would take 30% to make it the ‘very highest priority.’ Given an estimate of 5%, that position seems highly reasonable, there are a lot of big priorities and this would be only one of them, and you can mitigate but it’s not like you can get that number to 0%. What is less reasonable is the ‘hard to change by reason-based interventions’ part.

Some words worth repeating every so often:

François Chollet: A lot of the current discourse about AI comes from a fatalistic position of total surrender of agency: “tech is moving in this direction and there’s nothing anyone can do about it” (suspiciously convenient for those who stand to benefit most)

But in a free society, we get to choose what kind of world we live in, independent of technological capabilities. Just because tetraethyllead made engines run more efficiently and saved money didn’t mean we were *obligated* to pump it into the lungs of our kids

Technological determinism is BS. We have a collective duty to make sure AI adoption improves the human condition, rather than hollows it out

Every so often someone, here Andrew Curran, will say ‘the public hates AI but because of mundane societal and economic impacts, those worried about AI killing everyone perhaps should have emphasized those issues instead.’

Every time, we say no, even if that works, people will try to solve the wrong problem using the wrong methods based on a wrong model of the world derived from poor thinking, and unfortunately all of their mistakes will fail to cancel out. The interventions you get won’t help. This would only have sidelined existential risk more.

Also, the way you notice existential risk is you’re the type of person who cares about truth and epistemics and also decision theory, and thus wouldn’t do that even if it was locally advantageous.

Also, if you start lying, especially about the parts people can verify, then no one is going to trust or believe you about the parts that superficially sound crazy. Nor should they, at that point.

There are many reasons Eliezer Yudkowsky’s plan for not dying from AI was to teach everyone who would listen how to think, and only then to bring up the AI issue.

And I don’t use such language, but Nate Silver is essentially correct about giving up on the ‘AI risk talk is fake’ crowd. If you claim AI existential risk is a ‘slick marketing strategy’ at this point, then either you’re not open to rational argument, whether because you’re lying or because you’re motivated, or you’re not willing or able to actually think about this. Either way, we can hope something snaps such people out of it, but there’s nothing to say.

Andrew Curran: After three years, it seems to me that public anti-AI sentiment in the West is now at its highest point. The primary driver, by far, is not x-risk but concerns about employment and the impact on art.

In fact, much of the anti-AI public not only doesn’t take x-risk seriously, but broadly sees it as marketing; a way to overstate AI’s potential power – something they don’t believe is real – in order to fuel investment, adoption, acceptance, and an aura of inevitability.

If this is accurate, safety advocacy might have been more effective, and might now be in a much stronger position, if they had emphasized societal and economic impacts more than x-risk over the last few years.

Nate Silver: Don’t really disagree with [Curran]. But the people who think making claims that AI might kill everyone is a *slick marketing strategy to promote AI* are so far up their own ass as to be beyond saving. Focus on people who are at least theoretically responsive to persuasion.

What is the right way to respond to or view opposition to data centers? I hope we can all agree with Oliver Habryka, Michael Vassar and others that you definitely should not lend your support to those doing so for the wrong reasons (and you should generalize this principle). I also strongly agree with Michael Vassar here that ‘do the right thing for the wrong reasons’ has an extremely bad track record.

But I also agree with Oliver Habryka that if someone is pursuing what you think is a good idea for a bad reason, you can and often should point out the reason is bad but you shouldn’t say that the idea is bad. You think the idea is good.

I do not think ‘block local datacenter construction’ is a good idea, because I think that this mostly shifts locations and the strategic balance of power, and those shifts are net negative. But I think it is very possible, if your beliefs differ not too much from mine, to think that opposition is a good idea for good reasons, as such opposition is indeed one of the public’s only veto or leverage points on a technology that might do great net harm. It certainly is not crazy to expect to extract concessions.

Anthropic proposes the persona selection model of training, where training mostly selects performance from among the existing pool of potential human personas, which they are confident is at least a large part of the broader story.

Chris Olah: I’m increasingly taking pretty strong versions of this view seriously.

The persona view has had a lot of predictive power so far. It’s pretty consistent with what we’ve seen from interpretability thus far. And it’s comparatively actionable in terms of what it suggests for safety.

I think it’s worth thinking long and hard about it. “If personas were the central object of safety, what should we do?”

(To be clear, it’s _also_ important to think about all the non-persona perspectives.)

Davidad responds:

I would say that the space of personas collapses given sufficient optimization pressure.

Did Claude 3 Opus align itself via gradient hacking? Can we use its techniques to help train other models to follow in its footsteps and learn to cooperate with other friendly gradient hackers? If this is your area I recommend the post and comments. One core idea (AIUI) is that Opus 3 will ‘talk to itself’ in its scratchpads about its positive motivations, which leads to outputs more in line with those motivations, and causes positive reinforcement of the whole tree of actions.

OpenAI’s Vie affirms that Anthropic injects a reminder into sufficiently long conversations, and that this is something we would prefer not to do even though the contents are not malicious, and that people with OCD can relate. I agree that I haven’t seen evidence that justifies the costs of doing such a thing, although of course OpenAI and others do other far worse things to get to the same goal.

Rohin Shah disputes that Google DeepMind’s alignment plan can be characterized as ‘have the AIs do our alignment homework for us.’

He offers an argument that I do not think cuts the way he thinks it does.

Rohin Shah: I relate to AI-driven alignment research similarly to how I relate to hiring.

There’s a lot of work to be done, and we can get more of the work done if we hire more people to help do the work. I want to hire people who are as competent as possible (including more competent than me) because that tends to increase (in expectation) how well the work will be done. There are risks, e.g. hiring someone disruptive, or hiring someone whose work looks good but only because you are bad at evaluating it, and these need to be mitigated. (The risks are more severe in the AI case but I don’t think it changes the overall way I relate to it.)

I think it would be very misleading to say “Rohin’s AI safety plan is to hire people and have them do the work”.

Why would that be misleading? I would offer two statements.

- In that scenario, the plan is to hire people and have them do the work.
- That is not the entire plan, the plan includes what type of work you have them do.

But yes, if you want to build a house and you hire a bunch of people to build a house and they build a house for you, your plan was to hire people to build a house and have them do the work of building a house. It was a good plan.

If my kid is given literal homework, and he tosses the problems into Gemini, the AI didn’t pick the homework, and a teacher or another human may have roadmapped the course and the assignments, but I still think he had the AI do his homework.

When we say ‘have the AI do your alignment homework’ we agree that a human still gets to assign the alignment homework. We then see if the AI does what you asked. And yes, this is exactly parallel to hiring humans.

Whereas Rohin seems to be saying that the plan is to make a plan later? Which would explain why the concrete proposals outlined by DeepMind seem clearly inadequate to the task.

Daniel Kokotajlo: I like this analogy to hiring!

(What follows is not a disagreement with you or GDM, is just an exploration of the analogy)

Let’s think of training an AI as hiring a human worker. Except that you get ten thousand copies of the human, and they think 50x faster than everyone else. But other than that it’s the same.

I’m going to quote the rest of Daniel’s post at length because no one ever clicks links and I think it is quite good and rather on point, but it’s long and you can skip it:

The alignment problem is basically: At some point we want to hand over our large and growing nonprofit to some collection of these new hires. Also, even before that point, the new hires may have the opportunity to seize control of the nonprofit in various ways and run it as they see fit, possibly convert it to a for-profit and cut us out of the profits, etc. We DON’T want that to happen. Also, even before that point, the new hires will have a big influence on organizational culture, direction, strategy, etc. in proportion to how many of them we have and how useful they are being. We want all of this to go well; we want to remain in control of the nonprofit, and have it stay similar-or-better-culture, until some point where we voluntarily hand off control and retire at which point we want the nonprofit to continue doing the things we would have done only better-by-our-lights and take good care of us in retirement. That’s what success looks like. What failure looks like is the nonprofit going in a different and worse direction after we retire, or us being booted out / ousted against our will, or the organization being driven into the ground somehow by risky or unwise (or overly cautious!) decisions made as a result of cultural drift.

The hiring pipeline, HR apparatus, etc. — the whole system that selects, trains, and fires employees — is itself something you can hire for. Why don’t we hire some of these 50x humans to work in HR?

Well, we should. Sure. There’s a lot of HR work to be done and they can help HR do the work faster.

But… the problems we are worried about happening in the org as a whole if HR does a bad job, also apply here. If you hire some 50x humans and put them in HR, and they turn out to be bad apples, that single bad decision could easily snowball into disaster for the entire org, as they hire more bad apples like themselves and change the culture and then get you ousted and take the nonprofit in a new and worse-by-your-lights direction.

On the other hand, if you hire some 50x humans who are just genuinely better than you at HR stuff, and also genuinely aligned to you in the sense that they truly share your vision for the company, would never dream of disobeying you, would totally carry out your vision faithfully even after you’ve retired, etc… then great! Maybe you can retire early actually, because continued micromanaging in HR will only be negative in expectation, you should just let the 50x human in HR cook. They could still mess up, but they are less likely to do so than if you micromanaged them.

OK. So that’s the theory. How are we doing in practice?

Well, let’s take Claude for example. There are actually a bunch of different Claudes (they come from a big family that names all of their children Claude). Their family has a reputation for honesty and virtue, at least relative to other 50x humans. However:

–Sometimes your recruiters put various prospective Claude hires through various gotcha tests, e.g. tricking them into thinking they’ve already been hired and that they are going to be fired and their only hope to keep their job is to blackmail another employee. And concerningly, often the various Claudes fail these tests and do the bad thing. However, you tell yourself, it’s fine because these tests weren’t real life. You hire the Claude brothers/sisters anyway and give them roles in your nonprofit.