Auto industry, take note: This student-made EV cleans the air while driving

An EV that cleans the air while driving might seem like a pipe dream, but a student team based at the Eindhoven University of Technology has made it a reality. The team, called TU/ecomotive, has been creating inspiring, environmentally conscious concept cars for over a decade now.

Among the concept vehicles presented by the students, last year’s Zem — which stands for “zero emission mobility” — is the most outstanding. It’s a passenger EV that not only paves the way towards vehicle carbon neutrality, but also cleans the air while driving, something that, in turn, reduces CO2 emissions.

Zem was unveiled in July 2022 at the Louwman Museum in the Hague. Its message is clear: if a team of 32 students can create a car like this in under 12 months, then what’s stopping the automotive industry from doing more?

“We were inspired by the EU’s Green Deal,” Louise de Laat, Industrial Design student and team manager of the Zem project, told TNW. “Reducing our CO2 emissions is something very important for us, and we would really like to make a carbon neutral car. And that’s the reason for the recent project’s focus on zero emission mobility,” she explained.

CO2 neutral mobility requires a vehicle to have zero carbon emissions across its entire lifecycle, and Zem is an apt example of how close to this goal an EV like this can get.

In this piece, we’ll look at how Zem achieves this through its use, production, and afterlife — as well as what the car industry can learn from these sorts of schemes.

The air-cleaning technology

As we mentioned at the start, instead of emitting CO2, Zem captures it. Effectively, it cleans the air while driving. That’s thanks to an innovative technology called direct air capture (DAC), which “traps” carbon dioxide in a filter. Companies such as Climeworks and Carbyon have been applying this air-cleaning method via large installations, but the Zem team decided to implement it on a car.

It works like this: while driving, air moves through the car into a self-designed filter, which captures and stores CO2, allowing clean air to flow out of the vehicle. This compensates for the emissions from all phases of the car’s life.

But what happens when the filter is saturated?

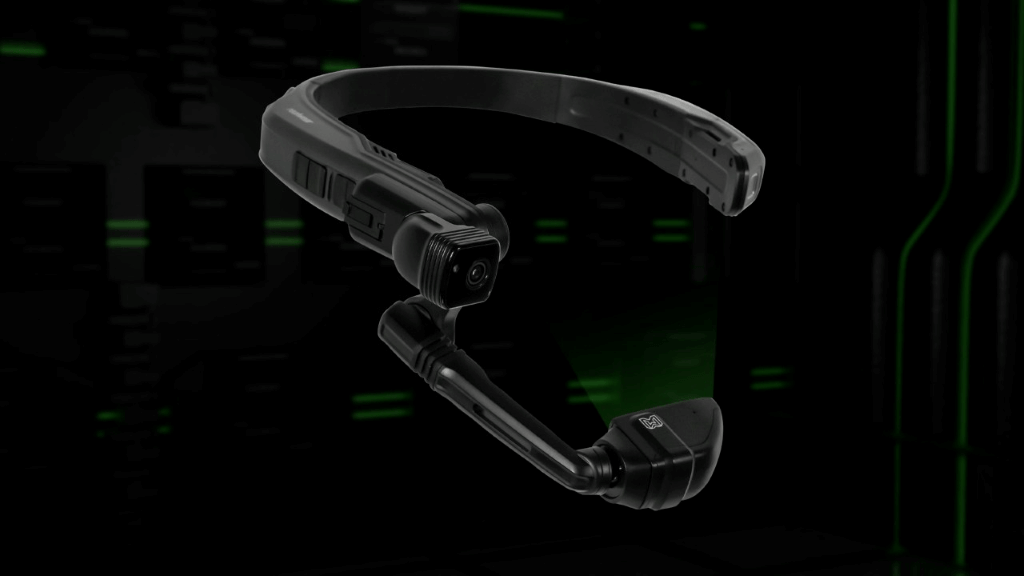

“We have designed a special charging pole for this,” Louise explained. “While Zem is charging you can remove the filter and place it in a special tank inside the pole. Cleaning the filter takes about the same time as charging. At the same time, the CO2 absorbed and saved in the tank can be repurposed and used by industries that need it, to make carbon fibers, for instance,” she added.

And to increase the vehicle’s sustainability even when not in use, TU/ecomotive has equipped it with bi-directional charging technology to provide electricity to homes, as well as solar panels to generate energy.

Maximising sustainable production and afterlife

To achieve a high level of sustainability, TU/ecomotive opted for a novel production method: additive manufacturing, or simply 3D printing. The team collaborated with partners such as CEAD and Royal3D to develop the car’s fundamental structure, specifically the monocoque and the body panels.

As Louise explained, they also 3D-printed parts of the interior, including the car seat shell, the dashboards, the middle console, the steering wheel, and the roof beams.

According to the team, this manufacturing process results in nearly zero waste materials, as the various car parts were printed in the exact shape needed. At the same time, they did the printing using circular plastics. These are granulates that had already been used and can be shredded and reused afresh in other projects.

“You can use that same material again to make the same [element] over three times before it loses its specifications,” Louise noted.

The vision of circularity has been applied throughout Zem’s design as well.

For example, the seat upholstery is made from the residue released during pineapple production. The roof upholstery and the floor mats consist of ocean plastics. And, through the collaboration with Black Bear Carbon, recycled carbon black from worn tires has been used for the EV’s coating and tires.

As a result, the concept car boasts “as little CO2 emissions as possible” during the production phase. At the same time, the types of materials, their ease of separation, and their circularity, all contribute to keeping CO2 emissions during the end-of-use phase at a lower level — especially when compared to conventional cars.

But, according to Louise, it proved extremely challenging to give a specific number to Zem’s overall emissions via the Life Cycle Assessment (LCA) method, revealing a gap in the industry.

“We need a lot of data from the partners where we get the parts from and some of them don’t know the exact LCA of their product,” she said. On the upside, she considers it beneficial that the project prompted their partners to acknowledge the vehicle’s environmental footprint. She also remains hopeful that relevant legislation from national governments and the EU in general will standardise the use of LCA.

According to Louise, Zem has succeeded in reaching its goal of lowering CO2 emissions as far as possible. Yet, the EV does come with disadvantages that would require further work to enable its scale-up into a marketable product.

“If you build a car in less than one year, there will be some flaws that you still need to work on,” she noted. “Zem drove smoothly on the DRC track during the US tour, but the closer you get to the vehicle, the easier it is to see its flaws.” And that’s to be expected when you work with new materials and new technologies within a short period of time, Louise added.

A win-win for students and commercial partners

Now that the Zem project has been concluded, a renewed team has started working on the next concept vehicle. Stijn Plekkenpol — a sustainable innovation student — will lead the next project.

“What we really want to do now is build a climate positive car by 2030. This means, a vehicle which is marketable, which could be produced, and actually have a positive impact on the environment instead of any negative ones,” Stijn told TNW.

In the meantime, Louise aims to keep working on the filter technology and would be excited to see Zem turn into a mass-produced car. After all, it’s not uncommon for a student concept to grow into a startup and a real-life product. Think of Lightyear, the now-famous Dutch solar EV startup, which was also started by students of the Eindhoven University of Technology.

While both Louise and Stijn attribute Zem’s success to the student team’s “long working hours and [their] dedication”, they explained the vital role commercial partners played as well.

“The majority of our partners are from Eindhoven’s Brainport region, which is known for its high density of R&D, and is called the Silicon Valley of the Netherlands,” Louise said.

These partners supported the project by providing parts, materials, knowledge, and financial support. And as for what they gained in return, Louise summarised three main advantages: employee recruitment, exposure, and the enjoyment and inspiration stemming from the collaboration with young people bringing bold ideas to the table.

Both Louise and Stijn have optimistic views on the future of mobility. They believe that cars will remain an integral part of transportation, but that they have the potential to be climate-positive instead of adding to carbon emissions.

And, as Zem showcases, we should trust in the innovative ideas of the younger generations, and seek further collaboration between daring university projects and commercial partners.

The new concept vehicle will be revealed on July 27 — and I, for one, can’t wait to see what the students have in store for us.
